Deal pipeline software for insurance industry

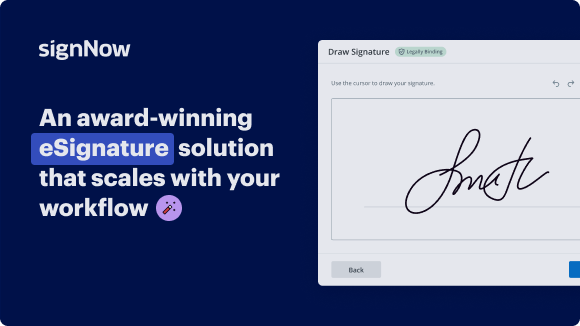

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.
- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.
- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Experience the benefits of airSlate SignNow's deal pipeline software, designed specifically for the insurance industry. Save time, reduce errors, and enhance collaboration with our cost-effective solution.

Sign up for a free trial today and optimize your deal pipeline management with airSlate SignNow.

FAQs online signature

- What does CRM stand for in Medicare?

  The ultimate purpose of CRM is to increase profit, which can be achieved mainly by providing a better service to customers than competitors. CRM enables companies to gather and access information about customer orders, complaints, preferences, and participation in sales and marketing campaigns.

- What does CRM stand for?

  Customer relationship management.

- What is the pipeline of insurance sales?

  For insurance agencies, the stages of the sales pipeline include prospecting, lead generation, qualification, quote/proposal, and closing.

- What is insurance management software?

  Insurance management software can help with billing and payment by providing online billing and payment portals. These portals allow policyholders to view their bills and make payments online, either through a web portal or a mobile app, without having to visit an office or mail in paper checks.

- What is the objective of CRM in insurance sector?

  CRM stands for Customer Relationship Management. It's an acronym you may see before words like “software,” “platform,” or “solution.” But a simple CRM definition doesn't explain the whole picture. Customer relationship management technology allows you to develop and nurture meaningful customer relationships.

- What does CRM stand for in billing?

  Healthcare CRM, also known as Healthcare Relationship Management, is a broadly used term for a customer relationship management system, or CRM, used in healthcare.

- What is pipeline management software?

  Pipeline management helps the team monitor several sales performance metrics, from team performance to lead qualification. It gives you a reference point to identify who and which sales process stage is successful or holding your business back.

- What is CRM for insurance?

  An Insurance CRM, or Customer Relationship Management, is a software tool that insurance companies use to manage interactions with both current and potential customers. This system helps companies keep track of customer data, manage leads, and automate communication with customers.

Power the customer experience with real-time data pipelines

Hi, and welcome to our session, Power the Customer Experience with Real-Time Data Pipelines. My name is Jared Kleinfelter, and I'm a solutions architect at AWS. I'm joined today by Mike Dain from Homesite Insurance, and I'm really excited to talk about their innovative solution. Greetings, Mike.

Thanks, Jared. I'm Mike Dain, director of cloud data architecture for Homesite Insurance. I'm really looking forward to sharing how my team has solved some of the most challenging problems of rapid rollout of new production-ready real-time data platforms. Homesite Insurance, founded in 1997, stakes its brand on delivering frictionless online insurance purchasing experiences. While the experiences are designed to be simple, the data that underlies them is not: it can be complex, and it changes frequently. Jared will walk us through some of the key industry challenges and AWS technologies, then I'll be back to dive into the details of the implementation. Jared?

Thanks, Mike. Let's take a look at the agenda for this session. First, we'll look at common business opportunities across the insurance industry where having access to real-time data can be a key enabler. Then we will explore some common AWS data pipeline solutions. Next, Mike will dive deep into the Homesite solution architecture, share his lessons learned, and give a preview of what's next as he and his team continuously look for ways to improve their solution. Finally, we'll review some key takeaways.

The insurance industry is evolving. Across the insurance industry, we see an acceleration of digital transformation, driven by a desire of providers to service an increasingly diverse set of customer demographics while simultaneously needing to tailor those customer experiences to the individual through the use of technology. Customers are expecting an increasingly digital experience that meets them where they are. They are expecting omni-channel communications across web, mobile apps, text, live chat, video conference, or even traditional face-to-face. They
expect interactions to be focused on what they want, when they want it. Insurance services are typically something customers only think about at the time of purchase and the time of claim, so making the initial experience as frictionless as possible is key. At the same time, insurance providers are looking for ways to expand their addressable market as population demographics continue to change. An aging population is often looking for more stable and traditional services; however, younger customers are looking for customized and dynamic ways to insure themselves and their assets. This is why products such as usage-based insurance are increasing in popularity. Having the ability to quickly search, analyze, and select the right insurance options is more important than ever.

At the heart of this digital transformation is data. Building new ways to capture, process, and present information at the necessary speed is required. We'll see how Homesite recognized these opportunities and built an innovative solution to address these needs in a bit, but first let's look at a few AWS services to manage real-time data.

The ability to stream data is a primary component of a real-time architecture. Building and operating real-time data streaming solutions from the ground up can be challenging. Often, platforms can be difficult to set up, tricky to scale and make highly available, and complicated to operationalize. AWS offers the Kinesis family of services to remove undifferentiated heavy lifting and let customers focus on building solutions toward business outcomes.

There are, of course, sources and destinations for data. Source data might come from users on mobile and web applications, or may be generated by logs or application telemetry. Destinations may be a data lake built on Amazon S3, possibly a purpose-built database such as Amazon DynamoDB, or the data may be put directly into a warehouse such as Amazon Redshift. In between, you'll find the Kinesis family. Ingestion can be built into source applications via the Kinesis
Producer Library or the AWS SDK. Other AWS services, such as AWS Database Migration Service, are also tightly integrated with the Kinesis family.

Kinesis Data Streams provides the ability to capture and store data in stream. Kinesis Data Streams is easy to set up, with the ability to create new streams in seconds and manage elastic scalability, while offering complex performance tuning options through partition-key-based shards. Kinesis Data Streams is a good choice when you require custom record processing with sub-second processing latency. Kinesis Data Firehose provides much of the same functionality but trades some flexibility for zero administration. Kinesis Data Firehose is a good choice when you need a fully managed service with tight integrations to other AWS services. Often, Kinesis services are used together: Data Streams to provide customized real-time processing, and Data Firehose to provide delivery to destinations. Kinesis Data Analytics provides even greater in-stream record processing capability through the use of SQL or Java applications. We'll hear from Mike later how Homesite has leveraged the Kinesis family, and particularly Kinesis Data Analytics, in building their solution.

Another key component of a real-time data architecture is providing consumers with the freedom to create actionable insights from the processed information, through direct ad hoc queries or rich dashboards in your favorite BI tools, such as Amazon QuickSight. Amazon Athena makes it easy to query using SQL directly where the data lives, whether that be in a data lake in Amazon S3 or in other purpose-built databases using Athena federated query. Athena is built on Presto, which provides access to additional functions, operators, and expressions on top of standard SQL. Some examples include map or reduce functions directly in line with query execution, or powerful regular expressions. Athena is tightly integrated with many BI tools, including native integrations with Amazon
QuickSight or through adapters to popular third-party BI tools. To support even further requirements, we make available JDBC and ODBC drivers, allowing you to connect virtually any data consumer to Athena.

As queries become more complex and data sets increase in size, performance tuning and query optimization become important. Building a partition scheme based on the use cases for your data and your metadata store will reduce the amount of data scanned. Typical partitioning revolves around time horizons but can be tailored toward your specific access patterns. For even further performance analysis, you now have the ability to execute explain plans on your queries to better understand how your SQL is parsed and how the underlying data is read. You can use this information to better tune your partitions or optimize your SQL. Finally, Athena is a fully managed, serverless service, allowing you to focus on business objectives rather than administrative operations.

Now that we've reviewed some common AWS services for building data pipelines, let's hear from Homesite. Mike, you have taken these patterns and expanded on them in innovative ways to accomplish your business objectives. I'd love to hear more about your solution.

Thanks, Jared. That's a nice summary of the challenges and the tools. So what happens when we need to deliver a whole new insurance platform on an aggressive schedule, and the entire data platform has to meet the same schedule? Oh, and since this is a new platform, we really don't know exactly what the data will look like or what kind of analytics we'll want to get out of it. In the traditional approach, we'd wait for the platform to be delivered, look at the data generated during the test, and then design the data platform, the downstream applications, and the analytics. But that's not good enough anymore. Now we need data platforms that expose data immediately, in the very early stages of development, then rapidly evolve into scalable, production-ready solutions.

The fundamental building blocks are
not mysterious. The source data is JSON microservice logs, which we ingest into Kinesis Data Streams using a data routing component built around the Kinesis Producer Library. Then we process the data using a range of AWS serverless components. In fact, it's quite possible to stand up a real-time data pipeline using only Kinesis Data Streams, Kinesis Firehose, Lambda, and S3. It's a simple pattern, but it leaves a lot on the table. We need data governance. We need session management. We need to trigger real-time business applications. We need total schema flexibility. And of course, we need scalability and resilience.

So let's look first at how we get to a one-sprint delivery of something that lets us see the data coming off our shiny new insurance platform right away. We don't want to wait; we need to see how it looks so we can build everything else. To do this, we built a rapid data profiler using Athena. Our incoming stream is message based, because we've torn it down to key-value pairs. Next, we use a Lambda message processor to write Parquet files down to our S3 sink and catalog them for Athena. By sending the JSON log data on the stream as key-value pairs, we can use Athena views to pivot the data and quickly build virtual schemas on top. No matter what our data looks like, how many keys it has, or what structure those keys have, they just flow on the pipeline, and we can quickly restructure the data at the view layer to show whatever schema we need. This means that as soon as the data is flowing, we're presenting analysts and downstream application developers with the schemas they need to drive their development.

Now that we can see where we're going a little bit, let's plug in our session management solution. We used Apache Flink hosted on Kinesis Data Analytics, and we'll talk more about that in detail in a moment. Then we add our data governance framework. As you can see, we now have a warm path, the blue line, exposing our sessionized data, but also a hot path, the orange line, so we have immediate visibility
into sessions in flight. Both paths leverage the same governance rules, stored in our DynamoDB table at upper right. And since our data is flowing as key-value pairs, it's easy to match them up to PII and data classification rules so we can hash, redact, mask, and route them to the right schemas.

Now let's go back and take a closer look at session management. We need to know when a customer stops interacting with the experience so we can trigger actions to communicate with them, and so we can package up the session data for analysis. But it's not as simple as it sounds. Triggering on events is easy in an AWS serverless environment, but here we have to trigger when an event stops happening. Fortunately, the Apache Flink project has a session windowing capability that handles this neatly. It uses time-to-live-based logic and runs as a Java application. Kinesis Data Analytics for Java Applications will host our Flink application with some pretty tight integration. While we wrote our Flink application in Java, currently both Java and Python are supported on version 1.11.
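To make the "trigger when an event stops happening" idea concrete, here is a minimal sketch of gap-based session windowing in plain Python. It is not the Homesite Flink application and does not use the Flink API; it only illustrates the logic of closing a session once no event has arrived for a given inactivity gap. The function name `sessionize`, the `(key, timestamp)` event shape, and the gap value are assumptions made for illustration, and the sketch works on a finished batch of events rather than a live stream.

```python
from collections import defaultdict


def sessionize(events, gap_seconds):
    """Group (key, timestamp) events into sessions split by inactivity gaps.

    Hypothetical helper: returns sorted (key, start, end, event_count) tuples.
    A new session starts whenever the time since the previous event for the
    same key exceeds gap_seconds.
    """
    by_key = defaultdict(list)
    for key, ts in events:
        by_key[key].append(ts)

    sessions = []
    for key, stamps in by_key.items():
        stamps.sort()
        start = prev = stamps[0]
        count = 1
        for ts in stamps[1:]:
            if ts - prev > gap_seconds:
                # Inactivity gap exceeded: close the current session.
                sessions.append((key, start, prev, count))
                start = ts
                count = 0
            prev = ts
            count += 1
        # Close the last open session for this key.
        sessions.append((key, start, prev, count))
    return sorted(sessions)
```

With a 30-second gap, events for key `a` at 0, 10, and 100 seconds would form two sessions: one covering 0 to 10 and one containing only the event at 100. In a streaming engine the session would instead be emitted by a timer once the gap elapses, which is the part the session windowing capability takes care of.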
So far, so good. We have data flowing, and all this time, importantly, our developers and analysts have access to simulated schemas via our Athena rapid data profiler, so we're not flying blind while we're developing the rest of the downstream applications. We have our session management in place, so we can trigger our real-time business applications and enhance our data analysis capability. And we have our data governance and PII treatment in place.

Now let's take a look at how we expose the data on the consumption layer. Here we have data coming in at the left from our sessionized warm path and unsessionized hot path. Each has its own S3 storage, but let's split that storage out further, because we've classified the data by sensitivity at the key level. Remember, we're moving our data on the pipeline as key-value pairs, so we can associate a classification with each key. Notice also that we have a partition optimizer here, which runs asynchronously. Because the Parquet files generated by the pipeline are quite small, and there are a lot of them, Athena access can be inefficient. By periodically collecting them into larger Parquet files, we make the Athena access patterns much more efficient.

For access patterns where we want dashboards that refresh automatically at, say, a 10-second interval, we leveraged Amazon Elasticsearch and Grafana. To keep it super flexible, because we hate having to do software releases whenever we can just push configurations, we use a strategy of storing SQLite expressions in DynamoDB. This pattern has proven super flexible and has enabled us to respond to a lot of short-fuse demands to surface real-time KPIs.

For the analytical access patterns, which you can see on the top path, we're now able to create a very strong chain of governance and access control. Our S3 buckets are each associated with a specific data classification, driven by our rules in DynamoDB. This makes it simple to use a bucket policy to associate an IAM role with each S3 bucket. When our
Athena user logs in via our SAML integration, Athena passes their IAM role right through to that S3 bucket at query time. This gives us a direct chain of access control from the SAML login through Athena all the way down to the S3 storage, all governed by our classification rules in DynamoDB.

So it seems as if we've covered the entire data flow, but I want to backtrack to a super important aspect of this architecture. If there's one thing that truly makes me happy, it's a solution that saves my team from refactoring code and changing mappings every time the source system schemas change. I know source system schemas change: new products, new features, new keys. We want this data to flow on our pipelines without refactoring, and we want to be able to expose it in a secure way with only minor configuration changes. By reducing the messages on the pipeline to a single key-value pair, plus the metadata needed to associate it with the right session and customer, we're able to allow new keys to flow with zero changes. If the key is sensitive, we add the required PII and classification rules to our DynamoDB table. When we want to expose it, we deploy an Athena view change, which takes minutes, and we're done. Our Athena views make heavy use of Presto map functions to pivot the keys into columns and the values into rows, along with any other business logic we need. For rapidly changing schemas and evolving products, this is a tremendously powerful pattern.

This has been a great journey, and thanks for coming with me on it. Along the way we've learned a few things, and we're hard at work on some major evolutions of this pattern. Here are a few of our lessons learned.

The most efficient data access with Athena requires some care. We suggest running a background process to aggregate your files. Also, when you encrypt your buckets, make sure you use either SSE-S3 server-side encryption or the new bucket keys. This is going to help you avoid large numbers of calls to KMS for decrypt, which can be a cost
optimization problem.

When it comes to monitoring and scalability, the Kinesis Flink solution gives you a lot of tools, but it pays to test hard. We built our own load generator and spent quite a bit of time dialing up the load until we broke it, then looking at how it broke. There's a lot of good monitoring, including a new Flink dashboard, but it requires some careful analysis to get the scaling right.

When you build your consumption layer logic, it's best if you can build in idempotency. Basically, you'll be happy if you can make your solution tolerant of duplicate data. This will greatly simplify all your disaster recovery scenarios, as well as making application restarts for software upgrades or other maintenance easier to manage.

Lastly, the Athena query language is powerful but not familiar to everyone. The Presto logic can be unfamiliar and challenging. For cases where this will be your main data interface, as opposed to BI tools layered on top, some training is important.

Now, finally, we arrive at the, so to speak, ice cream stand at the end of the journey. What are we building next? As we transitioned from rapid evolution mode into more of a steady state, we saw a huge opportunity to improve cost versus performance for some of the more costly unbounded queries being run by our analytical teams. Our generation 2 architecture uses an SNS and SQS publisher-subscriber interface to move data from our Kinesis pipelines to a Redshift injector. The injector uses the new Redshift Data API to insert each pivoted session. In this approach, we're pivoting each session as it comes off the pipeline, then injecting it into Redshift tables. The team has developed some really impressive solutions to automatically expand the Redshift schemas for new keys, so we maintain much of the operational flexibility of the Athena architecture while bringing the higher cost efficiency, scalability, and more familiar query interface that Redshift provides.

It's interesting to note that, for the entire solution,
this addition of Redshift is the first time we are adding persistent infrastructure. The entire solution up to this point, from ingestion to consumption, is serverless, and new instances can be created easily for new partner programs, load testing, POCs, or any other purpose, and then torn down just as easily when they're no longer needed. At the same time, all our storage, for intermediate sinks as well as final schemas, is super durable, securely encrypted, and cost-efficient S3.

So now we have a pipeline pattern that enables us to roll out a completely new data platform in parallel with a new business system, rapidly evolve our schemas and build out our applications while development is in flight, then move into production with a scalable solution that adapts to changing data with full governance in place. So that's the ice cream cone at the end of the journey. I'm tremendously proud of the Homesite cloud data management platform team for bringing this innovative solution from whiteboard to production so quickly and delivering all these outstanding competitive advantages to our business. Jared, can I hand it back to you?

What a fantastic story from Homesite. Thanks for taking the time to share with us, Mike. Let's recap what we heard in this session. You've seen how AWS services can help deliver business outcomes through the power of real-time data. You've seen a customer example showcasing their innovative solution in a real-world scenario. So what are some key takeaways?

First, dive deep into AWS service functionality. Serverless and managed services remove administrative burden by providing an easy way to get started quickly. However, don't confuse this ease of use with simplicity: these same services offer a rich set of capabilities that you can take advantage of.

Second, put real data into the hands of your business early in the design process. Too often there is a disconnect between the business vision and the information available to them. This creates disappointment and frustration between
business and technical resources, resulting in slower go-to-market.

Lastly, plan for the unknown. Change is inevitable. Being able to pivot and meet users where they are is key to maintaining speed and agility with business solutions.

Before we end: there have been a number of individuals who have worked tirelessly to bring the Homesite solution from idea to reality. A special thank you to the individuals that you see on this slide. And finally, thank you for joining this session.
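As a rough illustration of the key-value pattern described in the session, the sketch below flattens a nested JSON-like record into one message per key and then pivots those messages back into rows at read time. In the talk the pivot is done in Athena views using Presto map functions; this plain-Python version is only a stand-in for that idea. The function names, the dotted-key convention, and the sample field names are all assumptions, not details from the Homesite implementation.

```python
def flatten(record, prefix=""):
    """Yield (dotted_key, value) pairs from a nested JSON-like dict."""
    for key, value in record.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            yield from flatten(value, path)
        else:
            yield path, value


def to_messages(session_id, record):
    """Reduce a record to one message per key, tagged with its session."""
    return [
        {"session_id": session_id, "key": k, "value": v}
        for k, v in flatten(record)
    ]


def pivot(messages, columns):
    """Build one row per session, exposing only the requested columns.

    Keys that a session never produced come back as None, so adding a new
    column to the "view" needs no change to the messages themselves.
    """
    rows = {}
    for m in messages:
        rows.setdefault(m["session_id"], {})[m["key"]] = m["value"]
    return [
        {"session_id": sid, **{c: kv.get(c) for c in columns}}
        for sid, kv in sorted(rows.items())
    ]
```

The design point this mirrors is that a new key in the source data produces new messages without any pipeline change; only the list of columns requested at the view layer needs updating when that key should be exposed.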
