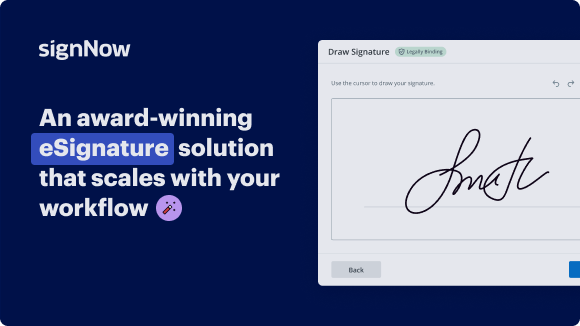

Streamline Your Business with airSlate SignNow's Lead Qualification System for Building Services

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.

- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.

- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Lead Qualification System for Building Services

airSlate SignNow benefits include secure and legally binding eSignatures, time-saving automation features, and seamless integration with other tools such as Google Drive and Dropbox. With airSlate SignNow, you can increase productivity and efficiency in your document signing process.

Ready to streamline your document signing process? Sign up for airSlate SignNow today and experience the benefits of a reliable lead qualification system for building services.

FAQs online signature

- How do you automatically qualify leads?

  To automate lead qualification, follow five steps: (1) create a lead magnet; (2) set up an automated lead capture form; (3) ask qualifying questions; (4) flag your high-quality "unicorn" leads; (5) deliver qualified leads to your sales team.

- What are the qualifications for a lead?

  BANT stands for Budget, Authority, Need, and Timeline: four criteria for evaluating a lead's potential to convert into a customer. Applying the BANT strategy lets organizations prioritize leads by their likelihood of converting.

- How do you qualify construction leads?

  Work through three steps. Step 1: Ask how the potential customer came to call you (this evaluates your marketing efforts). Step 2: Review the scope of work (do they really need your services?). Step 3: Review the homeowner's sense of urgency (this eliminates the tire kickers).

- How is a lead qualified?

  Lead qualification is the process of evaluating potential customers based on their financial ability and willingness to purchase from you. It includes assessing whether a lead needs the product, whether the person is authorized to make the purchase, and how much money they can spend.

- How do most contractors get leads?

  The most traditional way to get free leads is word-of-mouth marketing. When you provide top-notch service to your current clients, they recommend you to friends and family members looking for a good contractor.

- What is the lead qualification system?

  Lead qualification involves assessing leads and comparing them against your ideal customer profiles to determine whether they would be a good fit for your business. A sales-qualified lead has two characteristics: the potential to make the purchase, and a profile that matches your buyer persona.

- How do you get qualified leads?

  Define your ideal customer profile. Use multiple lead generation channels. Qualify your leads with lead scoring. Nurture your leads with email marketing. Follow up with your leads promptly. Track and analyze your lead generation results.

- What is a lead qualification checklist?

  A lead qualification checklist ensures that your reps always properly qualify prospects before investing significant time and effort into them. It helps new reps hit the ground running without falling into the common trap of juggling too many leads, including low-quality ones.
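The BANT criteria and the lead-scoring idea mentioned in the FAQ above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Lead` fields, the `bant_score` and `prioritize` helpers, the sample leads, and the 12-week timeline threshold are all hypothetical and are not part of airSlate SignNow or any other product.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Lead:
    """A hypothetical lead record; the field names are illustrative only."""
    name: str
    has_budget: bool               # Budget: funds are approved or available
    is_decision_maker: bool        # Authority: the contact can sign off on the job
    has_need: bool                 # Need: the scope of work matches your services
    weeks_to_start: Optional[int]  # Timeline: None means no stated timeline


def bant_score(lead: Lead, max_weeks: int = 12) -> int:
    """Count how many of the four BANT criteria the lead meets (0 to 4)."""
    # Assumption: a lead meets the Timeline criterion if it plans to start
    # within max_weeks; the default of 12 is an arbitrary example value.
    timeline_ok = lead.weeks_to_start is not None and lead.weeks_to_start <= max_weeks
    return sum([lead.has_budget, lead.is_decision_maker, lead.has_need, timeline_ok])


def prioritize(leads: List[Lead]) -> List[Lead]:
    """Return the leads sorted from highest to lowest BANT score."""
    return sorted(leads, key=bant_score, reverse=True)


# Made-up sample data for a building services contractor.
leads = [
    Lead("Office HVAC retrofit", True, True, True, 6),
    Lead("Warehouse rewiring", False, True, True, None),
    Lead("Lobby lighting upgrade", True, False, True, 30),
]

for lead in prioritize(leads):
    print(f"{lead.name}: {bant_score(lead)}/4")
```

Counting met criteria is the simplest possible scoring rule; a real checklist might weight the criteria differently or add qualifying questions specific to the trade.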

How to create outlook signature

Hello and welcome to this new fireside chat. It's now the sixth or seventh we have had this year, talking about all things AI. Today we have two guests, Kerstin and Tomislav, and we are talking about accountability and AI. My name is Willy Fabritius; I'm the Global Head for Strategy and Business Development here at SGS. With that said, I would like to give Kerstin the opportunity to introduce herself, and after that Tomislav.

Hi, thank you, Willy. My name is Kerstin. I work as a legal counsel at Know-Center, which is a research center for trustworthy AI based in Graz, Austria. I did my studies in law and also my doctoral studies in law in Graz, focusing on artificial intelligence and its impact on penal law. I'm the general legal counsel of Know-Center and its data protection officer, and I also do research in the field of AI systems and law, focusing especially on accountability. So I'm very happy to be part of this fireside chat today.

Thank you, Kerstin. Tomislav?

All right, thank you. I'm Tomislav, and I'm part of SGS. I'm a lead innovation technologist, focusing on emerging technologies and on the security and trustworthiness of IT in general. For over two years now, my focus topic has been AI: how we can ensure that it's safe and trustworthy, and what we have to do to support our customers in making their AI developments secure and trustworthy.

Thank you. So, accountability: what is meant by that? What is this term actually telling us?

Well, to start with, I think accountability is actually a very broad term. Generally speaking, accountability means that one is responsible for one's actions, and responsibility also includes that there are consequences for particular actions. This means people must be able to explain their aims, their motivations, and their reasons for taking certain actions. In terms of AI systems, I think we can define accountability as kind of the
people have to reflect certain expectations in terms of using, developing, and designing AI systems. So all the people along the AI life cycle have to think about their actions and how they interact with the systems, and of course there also have to be certain consequences for these actions, like fines if there's non-compliance with certain rules or regulatory frameworks. We can also look at the definition of the European High-Level Expert Group on Artificial Intelligence, which tells us that accountability is closely related to other fields such as risk management or even auditing. So when we talk of accountability, there are many factors that have to be taken into account.

Very good. When I'm thinking about that, there is this one case where a lawyer here in the States filed a legal complaint against an airline citing two non-existing cases, and even referring to two non-existing airlines, because the guy apparently used ChatGPT for inspirational purposes. I guess that guy should be accountable for his non-compliance.

Yes, I think that's actually a very good example, because this guy used ChatGPT without further verifying what the large language model told him. It's a clear example of how an end user of an AI system could be accountable if he uses AI in a non-trustworthy manner.

Yes. I think there should be something like an asterisk, you know, some kind of disclaimer. To be honest, the other day I submitted a proposal for talking at a conference, and yes, I used ChatGPT for inspiration. But I also think it's necessary then to add an asterisk saying: inspiration taken from ChatGPT. Then nobody can say, hey, Willy was cheating. It's totally transparent, and if there is some stuff that is not acceptable, I take responsibility. That brings me to the question: why do we think that accountability is so
important when developing, deploying, and in this case using AI systems? Computer systems have been around for some time now, right? How often have you heard "oh, the computer says..." or "oh, the computer is not available", with people just blaming that thing and not taking responsibility or accountability? So why do we think that will change with AI systems?

Yes, I think you already mentioned a very good example, because you're using an AI system and the AI system might generate an output you would not expect. The lawyer probably was not thinking that these two cases were not true at all, that these airlines didn't exist; he just didn't check but relied on the output of the AI system. I think there are very severe risks to this, because AI systems operate in a way that might not be transparent to us. They might be opaque because of their technical features, and lawyers or other people who are not technicians might not know how these systems actually work, and they might think that AI systems are much safer than they actually are. On the other hand, I think these systems have numerous benefits. For instance, you used one for your presentation, and they can make our daily lives much easier. A lot of systems will make our daily lives even safer. If you think of semi-autonomous cars, for instance, they are designed to make traffic safer, because they do not make the careless mistakes people make and they would not drive drunk, so there might be fewer traffic accidents because we're using these systems. I think accountable use is essential to this, so that we can use the technology in a way that's beneficial for all of us.

I would also like to look at it a little from the perspective of a company developing AI systems. What we see a lot is that it's not clear who is actually
accountable for what. If you look at the typical AI system manufacturer, who is building a larger system and maybe buying AI components from different suppliers, it needs to be clear who is actually accountable for the end result. If I'm the one building my product and integrating all the AI components, am I responsible for the output of the AI? What is the accountability of the supplier who develops or delivers the data to the AI system? How accountable is the one who trains a specific model? And in which way am I accountable for basically putting all the pieces together and then putting a product on the market? I think clarifying accountability is quite important, and it's a very important topic to be discussed.

Yeah. I think that in the future we will have something like user manuals or warnings, like we have with microwave ovens, that say, you know, do not dry your cat or do not boil eggs, or whatever some stupid people do on a regular basis. We will have the same thing, I believe, for AI systems. The real question would obviously be who is reading the disclaimers, and how the developers, the designers, and the companies that bring those systems onto the market can cover their responsibility while at the same time the end user might do something really stupid. So how can we establish accountability in terms of an AI system?

Yeah, I think that's a very good question, and I think it's a big problem that end users might not follow the instructions that the developers actually give them. What's essential here is the involvement of multiple stakeholders as early as possible, if possible already in the regulatory process. If only national governments and international organizations think about accountability in AI systems, I think this will not be enough. There must be a kind of informed discussion between all relevant stakeholders, also including
technical experts but also civil society, so that civil society will gain more trust in these systems and gain knowledge of AI systems. I think that's why AI literacy is so important in this regard. For instance, the AI Act in the European Union tells us that AI literacy must be ensured if a company uses AI systems, so all the employees have to gain sufficient knowledge of what they are using and what AI systems are. This is very important, because otherwise people will not trust these systems and will probably refrain from using a highly beneficial technology, or they will use it in a way that's wrong, and AI systems will then cause harm because the end user didn't know how to use the system correctly.

So does this mean that in the future we may have something like a pilot license for using AI, or a driving license as the authorization to use AI? Let's face it, we have examples where the use of technology in some areas, such as vehicles, ships, and planes, requires some kind of professional licensing of the users. Can we see something like that happening in the AI world as well?

Well, I actually think that this is not that likely, because AI systems are used in very different ways. You might still need your driving license for driving a car, but you might not have a license for using an algorithm that can be used in different contexts, like a large language model. But I think what will happen is that companies might provide AI systems that end users cannot use in a wrong way. Think of how the GDPR knows privacy by design; there might be something similar for AI systems too, so that I cannot, for instance, take a large language model and customize it after its deployment on the market.

I also think it will probably not go in the direction that we will need to have a sort of license, even
in specific areas, for using AI. On the one hand, the idea of AI is that it takes work away from us, from the human being; it's going more toward autonomous systems that act without our interference. On the other hand, as Kerstin was saying, companies are already trying to develop systems in a way that they cannot be misused by the end user. And the regulation will take care of that part: it will basically say what companies need to provide and what kind of information the end user needs so that he's informed enough. Then you just need to hope that the user actually reads that stuff and the trainings are actually done, and then everything goes in the right direction.

I think this might be a good intention, but I also remember a sentence that I heard many, many years ago: why can systems not be designed in a way that they are idiot-proof? Well, the answer is very simple: idiots are actually very innovative. Whenever we are using tools, whether it's an old-fashioned hammer, a table saw, a laser pointer, or an AI system, they can unfortunately also be used in bad ways. That leads me to the next question: who is actually accountable for damages caused by AI systems? Would it be the developer, the company that brings the product onto the market, the user, if he or she is doing something very stupid, or all of them?

Yes, I think that's also a very good question. If we look at the different stakeholders, I think actually all of them can be accountable. Let's look at a very easy example. If the AI developer develops an AI system in a way that's non-compliant with the rules, he or she will be responsible for this AI system. We also have to think of data providers. Many AI systems rely on huge amounts of data, and they use training data to generate outputs. So if this
training data is biased, for instance, the data provider is clearly accountable for this biased data. Let's look at an example: if I use training data that is flawed for a medical healthcare algorithm, and this healthcare algorithm leads to a wrong treatment of a patient, the patient is clearly harmed, and so the data provider is liable and also accountable for this. And there are numerous other persons involved, like the end user who might not follow the instructions of the developers, or the vendors and suppliers of AI systems. So I think accountability can be very hard to define, and it can also be a kind of shared or distributed accountability: we can say that both the AI designer or developer and the user are accountable, because both of them did something that was wrong in a specific regard.

Yeah, that reminds me of a concept that is quite well known in cloud computing, called shared responsibility. So in translation there's also something like shared accountability, right? It's not just that different parties are responsible for something; it's also a shared accountability where, as a team, as a group of people or organizations, everyone involved has some kind of responsibility and should be accountable. Tomislav, any comments from your side?

I think that's exactly what happens with AI systems: there's a shared responsibility. And I think the cloud computing part is a very good example, since from a technology-stack point of view it's still very simple, right? You have a lot of cloud infrastructure running the AI models and tools, and you have products with built-in algorithms, but in the end there must be an agreement between all the involved parties about who is responsible for what. In the cloud computing industry that has been established; I don't think a regulation was necessary to establish
that there's an agreement that for certain things the provider is accountable, for certain things the model developer is accountable, and for certain things the one who uses the system is accountable. Something like this needs to be done for AI systems as well. Regulations like the AI Act will help in this regard, in that they make clear what types of roles there are and what responsibilities they have in terms of being compliant with the regulation. But in the end, when it's about harm happening, those responsible need to make sure that everybody in the value chain is aware of what his responsibility is and what he can be accountable for, and that he then acts accordingly.

Yeah. The example I'm referring to is obviously from the classical, old-fashioned cloud computing relationship between the technology providers and the users. On a regular basis it's the user who is not knowledgeable about properly protecting their usage, and all of a sudden all kinds of files are openly available if you know the URLs, right? I can see a similar situation where the AI provider is doing everything they should and must do, but then, for whatever reason, the users are doing something improperly, out of stupidity or neglect. That goes back a little bit to that driving license concept, right? But please go ahead.

Since our eyes were opened by large language models, ChatGPT, and all the similar tools out there, we know these are very powerful tools that can do a lot of dangerous things in the hands of dangerous people. So what the providers of those large language models and chat applications are trying to do is basically censor the output: make sure it cannot output any harmful stuff, cannot give you instructions on how to build a bomb, does not write your
code to exploit a security vulnerability, or anything like that. But in the end it's a bit of a cat-and-mouse game, because some people are putting guards in place and others are looking at how they can circumvent those guards and exploit the system again. So there needs to be some set of rules. That's the responsibility of the large language model provider, but in the end the end user is also responsible for not using it in a harmful way. Even if it's a simple rule saying don't use it in a harmful way, just as you must not use a weapon to kill people, the same can be done for large language models. It's a powerful tool that can be used in a harmful way, so maybe we need to have rules in place that say what you're not allowed to do, otherwise you basically go to jail.

Yeah. I remember that a couple of weeks ago I actually tested one of those large language models and asked, "So what are the latest zero-day vulnerabilities of systems?" It said something along the lines of, "I cannot really tell you that; however, you might want to investigate the following websites for hints and clues." Then I said, "Okay, I found that website very helpful, but how do I ascertain which systems out there are actually still vulnerable to those zero-day vulnerabilities?" And again: "I cannot really tell you that; however, here are a couple of websites you might want to investigate." And I thought, well, it's actually a good teacher. Good in terms of its capability and ability to teach me something; a bad teacher in terms of actually pointing me to very, very interesting websites. But does this mean that we need some regulations, some laws, specifically for AI systems, or do you think that the current legal environment is sufficient?

Well, I actually think that this is a very hard question too. If you look at AI systems, it seems that we need regulations for them, because they might point us to
websites or teach us things that are wrong, or they might discriminate against people, and we see the severe risks the systems can cause. I think that's why a lot of policymakers all around the world are now proposing new regulations and frameworks for AI systems. They argue that the existing rules are insufficient because they do not cover the unique characteristics AI systems have: AI systems act in a kind of opaque way, and they might affect a huge number of people. During the last few years we have seen numerous approaches to new rules on artificial intelligence systems, the best example being the European Union, where the AI Act, which Tomislav already mentioned, was proposed and will enter into force soon. The European Commission saw a clear need for regulating AI systems, laying down rules for high-risk systems, for instance, which include specific transparency obligations or risk assessment audits. On the other hand, we can see different approaches. In the US, for example, we cannot see the hard-law approach the European Union follows; there are more or less soft-law principles, non-binding standards that the US asks people to follow. So I think it's not clear whether we really need new rules or whether the existing ones are enough to address artificial intelligence. But I think we will see in a few years, because all of these regulatory approaches are still evolving and we do not yet know how practical they actually are.

And let's be brutally frank: bad people don't really care about laws, and thanks to the internet it's actually the entire world, right? There might be a wonderful set of regulations and laws, let's say in the European Community, but hey, I'm sitting in the US and I have access to servers in South Africa, so why would I care about what the EU says? That's definitely a serious issue. But Tomislav,
what is your take on that?

Yeah, so I think the EU AI Act is a very important piece of regulation, now on the table, now approved, and it will be coming into force soon. People often think it's maybe too much to have so many rules on using AI. I always say that if you look at the AI Act, it's a product safety regulation, and it's not the first product safety regulation we have. We have similar regulations for medical devices, for industrial machines, for cars. So it's the same thing, just that it's horizontal, meaning that if you use AI systems in a high-risk application, so anywhere where we really can harm people or fundamental rights or anything listed in the regulation, then you need to comply with certain additional requirements. So it's not entirely new from a product safety perspective; it just picks out a very specific type of technology that can be applied in all kinds of industries. I believe there might be something like the Brussels effect for the EU AI Act as well, meaning that the EU is usually very good at regulation, and other countries in the world see what works well and what doesn't and then adopt certain parts of the regulation in other areas as well. I do believe that there will be more regulation around the globe, in other industrial countries. I don't think that the EU will be the last one.

Yes. And to your point, the Brussels effect is definitely a very strong effect. From a US perspective, I need to say that unfortunately many US companies ignore that and say something along the lines of, "Ah, yeah, that's just in Europe." But at the end of the day, yes, what is being decided in Brussels, and the rules and regulations coming out of there, affects companies around the world.

The market is huge, right? Everybody sells on the EU market, so it's not just that the
regulation affects the European Union; it affects all companies trading and selling products in the EU as well.

Yes, yes. And let's face it: even if you are a contact center in, let's say, the Philippines or India providing services to European companies, you are somehow affected by the rules and regulations that are coming out of the EU. This is also the time when I would like to ask the audience to provide questions, because at the end of this fireside chat we will have a time slot allocated for Q&A. So if the audience has any questions, please feel free to put them into the chat; that would be fantastic. But let's continue with my next question. What do you think is the role of standardization? In this particular case that could be ISO standards, for example, but it could also be other standards bodies that come up with some kind of rules and standards. Do you see an impact, a benefit there, and what's the current situation?

Well, I definitely see an impact of standardization in terms of AI systems. Standards might be non-binding and not enforceable, but they provide guidelines and benchmarks for a safe use of AI systems. I think standards are very important because they can ensure or enhance interoperability on a global level and lead to a kind of harmonization. Even though hard laws like the AI Act might not align with other rules around the world, there might be standards that apply globally, and so they can enhance this harmonization. For instance, if there are standards on data formats, they ensure that the companies using the standards can interact with each other and that the developed algorithms will work together seamlessly. I also think that standardization is very important because it accompanies hard-law regulations. Just to mention another example, the European Commission
asked the European standardization bodies CEN and CENELEC to adopt so-called harmonized standards that will accompany the AI Act. The AI Act asks for certain transparency obligations or documentation obligations, but it doesn't tell people how these obligations can be fulfilled in practice. The standards will define the current state of the art people have to adhere to, and if people are following these standards, there's a very strong presumption that they are acting compliantly in this regard. So I think that standards play a very important role in terms of AI systems.

Yeah, fully agree. Standards are the key for really trustworthy AI systems, but also for complying with existing and maybe upcoming regulations. If you are an AI development company and you now need to comply with the EU AI Act, the question is: okay, what do I need to do to be sufficiently compliant? The act itself has a couple of requirements, obviously, but they are not technical enough that you could say, okay, we need to implement steps one to ten and then we're compliant, right? So it's up either to self-assessment by the companies or, if it's a third-party assessment, to companies like SGS and our competitors, who will need to assess the systems. We can always come up with some proprietary assessment methodologies, but in the end standards are the way to go, where it's clear what I need to implement in order to fulfill certain requirements and best practices, and then it's clear for everyone what actually needs to be done.

Yeah. I must admit I'm biased. I have been a standards guy for the overwhelming majority of my professional life, and in the interest of full disclosure, I'm also sitting on a couple of ISO subcommittees and technical committees, helping to write and design those standards. And yes, when I'm talking about the benefits of standards, I'm usually using just one slide, and this is, you know, something
like a 4x3 matrix displaying the 12 or 15 different power outlets we have around the globe. Then I say, this is exhibit A of why we need standards. Everyone who has ever traveled internationally and used those suspicious adapters that are hopefully working knows why we need standards. And again, in the interest of full disclosure, there were two hotels where I blew the fuse because of adapters that apparently didn't work. So yes, there are a couple of interesting standards in the making. One is obviously ISO/IEC 42001, the management system standard for AI systems, which provides the governance framework. But there's also a newer series of standards coming out, the ISO/IEC 5259 series, which is about data quality for analytics and machine learning. The audience is encouraged to familiarize themselves with those standards, because they provide the necessary foundation to ensure that there is some kind of interoperability, some kind of exchange possibility between the different systems, while at the same time providing a governance framework for using AI systems. That brings me to the question: if we do something, how do we know that it's okay, that it's good? How do we measure whether our AI systems are trustworthy and whether we are accountable? Is there some kind of measurement system that allows companies or organizations to provide some kind of metric to demonstrate how accountable they are, some kind of accountability index?

Well, looking at the literature, I found that there's actually not a single tool that will ensure you're acting accountably if you adhere to it, but we can see numerous approaches in this field. For instance, there are checklists and toolkits suggesting different questions, like: is there effective
redress if anything goes wrong? Are there clear rules? And so on. We can also see that there are some contextualized frameworks for assessing accountability. For instance, you can look at accountability within an organization, at accountability in terms of public services, or even at special technical fields, like accountability when using robots that rely on AI systems. You can also assess accountability from a qualitative perspective; for instance, you could look at whether AI systems take human rights or fundamental rights into account. Taking all this together, it's very hard to assess accountability, but I think there are some metrics that should be considered. As already mentioned, I think it's essential that there are clear roles and responsibilities within a given framework, like within a company. I think it's also important that there is AI literacy among the stakeholders, because if people do not understand the technology, they do not know how to use it in a way that's trustworthy. This again leads to a documentation effort, because I have to document what's going on with AI systems and how people are using them. I also have to do certain risk assessments, and also error and incident reporting within a company, because I need to know what's going wrong, how, and what the consequences are. I also need clear consequences in case something goes wrong and an AI system causes harm, for instance if there is discrimination because employees in human resources use algorithms that discriminate against certain people. And yes, I think audits are also a very good indicator of high accountability, because internal as well as external audits will tell me if I'm really acting accountably, and there is a kind of third-party check that is also telling me that I'm acting in a way that's
accountable.

Thomas Love? Nothing to add here; it was all great stuff.

So are we going to see in the future something like a sustainability report for AI accountability? Many organizations are now publishing sustainability reports telling the stakeholders, shareholders, and interested parties how well they are doing in protecting the world and the environment, which I think is very important indeed. But are we going to see something similar in the future with regard to AI usage?

I definitely think that we will, because the AI Act, for instance, requires risk assessments, and I think these risk assessments include many of the metrics I was just talking about. You have to define roles, you have to define certain consequences, documentation and transparency obligations, and so on. So I think it will be required to do these checks within certain companies, for instance. And I think that's nothing new, because if you look at the GDPR, the General Data Protection Regulation in Europe, there are already similar obligations: you have to document certain processes within your company if you're processing personal data. I think this is a good example of what will happen with AI systems too.

I believe so as well, and we might also see something similar to the sustainability reports. Big tech companies are already publishing on their websites how responsibly they are using AI and what they are doing to make sure that everything is safe and trustworthy. I think it will be an important piece to be done by any AI development company, if they're developing certain types of applications, so that they can really establish trust with their customer base by being transparent enough and showing what they are doing to make sure that the system is trustworthy in the end.

Yeah. Thomas Love, refresh my memory: is there a white paper about accountability that we have published?

Yes, we had
published, together with Ken and colleagues from Know-Center, a white paper called Accountability. It's part of our trustworthiness of AI series, and you can download it at this link.

Yeah, so let's keep that slide up for a couple of minutes for the audience to make notes. A couple more questions from my side. Why is it impossible to consider accountability in an isolated way? Or can we see it isolated, or should we see it as all-encompassing, with all the other themes related to AI?

Yes, I think that accountability serves as a kind of meta-principle to all the other principles of trustworthy AI. To give an example, I think transparency is clearly needed for accountable use of AI. If I do not know how an AI system is working, or who designed, developed, or used the AI system, I cannot address accountability at all. So transparency is a kind of prerequisite for accountability. I think the same goes for fairness: if an algorithm discriminates against people or is biased in any way, people will have to be accountable for this unfair algorithm. So there's a clear, strong interdependence of accountability with the other key requirements for trustworthy AI, and I can never consider accountability in isolation.

Exactly. AI systems are complex, and there are many requirements and many scientific domains contributing to them, so it's just not possible to look at them in an isolated way.

Yeah, I like the expression "meta". I would have said "umbrella", but I think it comes down to the same thing. Whether it's an umbrella or whether it's the measurement about the measurements, that's a different story. But at the end of the day, all of these other factors we discussed in this series of fireside chats lead to accountability. Somebody needs to say, "Yep, that's us, we are the ones doing that."

So what are the biggest challenges when thinking about regulating AI or establishing accountability? What are the biggest, let's say, I
don't, I personally don't like the word "problems", because "problem" is a negative word, so "challenges" I think is the better word. What are the biggest opportunities in regulating AI?

Well, I think we have already talked about this previously. AI systems are, on the one hand, kind of opaque for non-technicians, so policy makers might not understand the techniques they're actually regulating. So I think one of the, let's call it challenges, is that policy makers have to be aware of the technical components of AI systems, and I think it's very hard to explain how AI systems actually work. For me it was not easy to understand how AI systems are designed and how they work, and I think that's a great challenge. We also talked about the global aspect: standards, rules, and norms have to align on the global level, and I think that's very hard too, because people in different regions follow different ethical standards or different rules. So it's very hard to find one standard that all people are happy with and can comply with. And I think this brings us to AI literacy and stakeholder involvement again, because I can only create sufficient rules for AI systems if I involve numerous stakeholders all around the globe, from national governments to technicians as well as civil society, to create trust in the systems. I think that's a very, very big challenge.

Yeah, I think you mentioned the aspect of ethics, right? And unfortunately ethics is not necessarily universal. In different parts of the world we have different ways of seeing things, not consistent from country to country, and that's okay. But at the end of the day, AI systems need to be considerate of that and need to take that into account. But looking at the clock, I would like to ask one final question, and this is: how can rules be transferred into practice, and when do
norms address how we can comply with those rules? That seems to be very vague and at the same time not really harmonizable, for lack of a better word, on a global level. How can we address that?

For me that's also a very challenging question. I think it's hard to create the legal certainty people would need, but I think there are some ways that can help people. For instance, following the regulatory approaches, or looking at the websites of the European Commission or other policy makers, who will provide simplified overviews of what the legislation is about. There are also white papers, like the one we wrote, which try to summarize the key facts and findings and provide further links for deeper research in this area. You could also follow standardization, which also provides a kind of simplification in this regard. I think people have to take all of this into account to get an informed view of what AI systems are, how they work, and how we can interact with AI systems in a trustworthy way.

Yeah, so I think we require more guidelines, and the European Union also needs to provide more guidance in this respect. And we always need the standards in order to actually know what we need to implement to comply with these rules. I would also like to refer to the European Testing and Experimentation Facilities; I'm not sure if you have stumbled upon them. They were established by the EU. For example, there is the Testing and Experimentation Facility for health, which should help providers of AI technology for the healthcare sector bring their solutions to the market faster by providing testing and validation services around the AI system. So there are certain frameworks and constructs the European Union is providing to basically have a test ground where we can test things out, and then apply them and see how we can validate those requirements. Something like that will be
definitely required for different areas as well.

Excellent. Okay, I was lying: one more question. Close your eyes mentally and think that we are now in the year 2030, six years from now. What do you think we will have achieved by then in terms of accountability?

Well, hopefully we will have some clearer guidelines than now, and people will know what to do and how to interact with AI systems.

I do hope so as well. Maybe there will already have been a couple of lawsuits that tried to figure out who was responsible for what incident, so we will have more practical experience with the situation. I think it's unavoidable.

Yes, lawsuits and fines, no question about that. And that will then establish expectations that go a little bit beyond what the rules say, but that's okay. So that said, in the interest of time, Kren, Thomas Love, thank you very, very much for your time, and a big thank you to our audience. I hope you enjoyed it, and I'm looking forward to the next time on this channel. Thank you and bye-bye.
