Streamline Your Workflow with Pipeline Funnel in IT Architecture Documentation

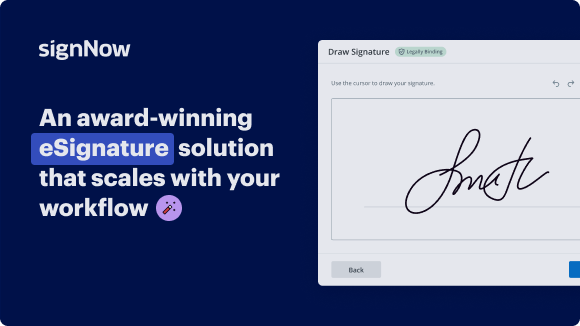

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.

- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.

- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Pipeline Funnel in IT Architecture Documentation

With the ability to easily navigate through the document signing process, airSlate SignNow simplifies how businesses handle their documentation. By utilizing the features provided by airSlate SignNow, businesses can increase efficiency and productivity within their workflows. Try airSlate SignNow today and experience the benefits firsthand.

Sign up for a free trial of airSlate SignNow now and see how it can enhance your document signing process within your IT architecture documentation.

FAQs online signature

- How do you make a conversion funnel?

The following eight steps will help you turn more of your visitors into leads, and more of your leads into customers. Map out your ideal buying process. ... Set up your conversion goals in Google Analytics. ... Build interest with content. ... Identify leaks in your website conversion funnel. ... Optimize for conversions.

- How do you make a funnel diagram?

To insert a funnel chart in Outlook, PowerPoint, or Word, click an empty space in an email message, presentation, or document. Click Insert > Chart > Funnel. The funnel chart will appear. ... To add the names of the stages, right-click anywhere in column A, and then click Insert. Click Entire column, and then click OK.

- What is a pipeline funnel?

A pipeline is the sales rep's view and process to close the deal, while the funnel is the customer's view of their buyer's journey and the phases they pass through until they decide to buy.

- How do you make a funnel?

Take something like this plastic bottle, cut off the top, and place it on whatever you need to strain something through. This plastic bottle is your 5-cent funnel.

- How to build a funnel on PipelinePRO?

How Do You Set Up a Funnel with PipelinePRO? Step 1: Go to the Dashboard. PipelinePRO's funnel builder is powerful yet easy to handle. ... Step 2: Click + New Funnel. ... Step 4: Set Up Your PipelinePRO Funnel. ... Step 5: Click + Add New Step. ... Step 6: Go to the Builder and Start Building!

- How do I create a new funnel?

Step 1: Define Your Target Audience. The best way to start funnel building is by having an accurate sense of your ideal buyer. ... Step 2: Create Awareness. ... Step 3: Generate Interest. ... Step 4: Capture Leads. ... Step 5: Nurture Leads. ... Step 6: Convert Sales. ... Step 7: Retain Customers.

- What is the difference between prospect and pipeline?

A sales pipeline is a set of stages that a prospect moves through, as they progress from a new lead to a customer. Once each pipeline stage is completed, the prospect is advanced to the next stage.

- What is the structure of a funnel?

A funnel is a tube or pipe that is wide at the top and narrow at the bottom, used for guiding liquid or powder into a small opening. Funnels are usually made of stainless steel, aluminium, glass, or plastic.

How to create outlook signature

Hello, this is Sean Barrow from Lattix. Thank you for attending our webinar today. As many of you know, I'm the Director of Sales and Marketing here at Lattix, and today I'm very excited because I'd like to introduce our new partner, Verifa, to you. They've been doing some very interesting things in terms of Lattix and DevOps. They have a real focus on software architecture and the continuous integration environment to improve software testing, builds, and productivity. Today Frank Waldman and Neil Langmead from Verifa will be walking us through this approach. During the webinar, if you have any questions, feel free to put them into the question window, and we'll save some time at the end to answer them. With that, I'll hand things over to Frank to introduce you to Verifa.

Hello everyone. Some of you may remember me from my time with Lattix as a co-founder. I'm here today with Neil Langmead, who's my co-founder in Verifa. A little bit about Verifa: we are a relatively new company. We've been engaged in projects in China and in the US, and now in Europe as well. Our focus and vision is on building better software. We have a very interesting and unique version of DevOps that we're going to show you today, and basically we're helping companies innovate and automate a lot of the common tasks around building software with high quality.

You are all probably familiar with this graphic, which shows the continuous loop of all the activities required to develop and build software. It's indicative of all of these DevOps pipelines that contain a high level of automation. We're focused on removing the barriers between dev and ops, and because companies have distributed software development teams, DevOps is really the scalable way to implement the processes that you need. And it's not just processes; it's actually everything involved in quality
testing, the people, the tooling, all the activities that go around an integrated DevOps. We think we have a fairly unique approach to it: we have an architecture focus, and that's why we're so excited about our partnership with Lattix. We see opportunities for using Lattix in many of the steps involved in a DevOps pipeline, so we're going to focus on that today.

Just to elaborate: you are all familiar with using Lattix in design. In fact, many of you are architects, and you're well aware of the power of Lattix to help you understand and define your architecture. Some of you have implemented the dependency rules that help you enforce that architecture while people are coding. At Verifa we've taken it a little further and shifted this to the desktop, so that developers, as they are creating dependencies, have the ability to see a bad dependency and better understand it as they code. So they're architecture-aware.

Then, for building the software, we found a great opportunity to help people modularize the system and break those dependencies which complicate the building process, and we call that the smart build.

And finally, in testing: I know many companies have invested a lot in unit testing and integration testing, and it's always a real pain to do it. It's often difficult to know even where to start testing. We've come up with some really interesting ways to use the architecture to help inform the best way to prioritize that testing, and in many cases even to optimize it.

Of course, why do we do all this? We're trying to build value. Architecture compliance is only valuable if you're able to build things faster and cheaper, so that's our focus. Obviously a pipeline is an investment, so you have to show the return on that investment, and we'll touch on that today. And finally, there's no time like the present. You don't have to boil the ocean all at once; you
can take a series of steps to build out this capability, and that's what we've been doing at Verifa to help companies do that. We're a services company, and we have expertise in all of the tools necessary to achieve this kind of pipeline. With that, I'll turn it over to Neil.

Good day, everyone. As Frank and Sean have already said, we're excited to show Lattix in a DevOps environment and to show some real-world experience of applying this in a pipeline setting. So without further ado, I'm going to jump straight into Lattix Architect first and give a very short refresher on the information that's contained in a DSM, a dependency structure matrix, and then show how that data can be used in a pipeline to drive better results: to drive faster delivery of the software, to create these automated delivery pipelines, and ultimately to achieve shorter delivery and release cycles, which is one of the purposes of DevOps.

By way of a reminder for many of you, this is a dependency structure matrix in Lattix. I've loaded the visualization of an open source component called IsoAgLib. IsoAgLib is an open source embedded system, and online there is a good description of its system architecture. Inside this component you can see clearly that there is a design intent and a layering intent communicated by the system architects. For example, if I hover over the available architecture description, you'll see terminology such as "optional" and "need to be included" modules, and some modules are mandatory parts of the IsoAgLib library. So there is clear architectural intent communicated in these diagrams and pictures.

But how does the real architecture of the system look? If a module should be optional, then there shouldn't be any dependencies on that module, because otherwise it becomes mandatory and required in every possible system. And you can see clearly in the communication there that there are many
optional modules if I look horizontally across the architecture.

With the original plan in mind, if we load up the architecture of the system, we see that there are discrepancies between the intention of the architects and the implementation of the software. For example, you can see clearly that there are couplings between the components inside comm, which were, as you remember, optional modules. We see that in the DSM. If I can remind you, the numbers in the columns are "using" and the numbers in the rows are "used by". So the way we read this is that the component here inside comm is using CAN ten times and it's using singleton.h twice. Alternatively, if we read across the diagonal, we see that network management part 5 is used by component 2, which is the task controller client, 37 times.

What we've been able to do up until now, as you're very familiar with, is put these rules and design intents inside the Lattix DSM. Clearly you can see there are rule violations here, because the actual architecture doesn't match the intended architecture, and that's clearly demonstrated. But our thinking at Verifa was: how wonderful would it be if this information wasn't just served up at the system level, but was actually communicated earlier in the lifecycle, inside the pipeline, and pushed to the developers, so that we get this information about a rule violation much earlier in the process?

That's where our research and development led us to the idea of Tx, whereby we define certain time points in the software development lifecycle. We say T0 is the earliest point in the lifecycle where we can remediate an error such as an architectural rule violation, and that's typically inside developer desktops. T1 would be at the integration phase, when we're integrating certain components. Then we can define T2 and T3 as higher-level phases at different branches, for example at the integration
branch or quality branch, as we go further towards the delivery of the software. So this is our DSM, and we want to put this into a DevOps pipeline to enable the automated enforcement of these rules at these various time points.

Traditionally, tools like Lattix have tended to be installed on single developer desktops, or maybe on a client-server architecture, from a USB stick, that kind of thing. In DevOps we have the wonderful concept of infrastructure as code. We can provision things like tool installations as code, and as part of that we can abstract the installation away from development, and away from the burdensome configuration of tooling, the running of services, and the maintenance that is typically associated with configuring, learning, and maintaining a tool. So what we have done is move the tools inside a DevOps pipeline and provision everything as infrastructure as code.

Just by way of reminder, what should the attributes of a DevOps pipeline be? Firstly, a pipeline should be consistent, it should be trustworthy, and it should be resilient. If a pipeline goes down, it should be able to self-heal and restore itself when things go wrong, and it should be able to do so quickly and automatically. We've all been in a situation where a local machine crashes and it takes so long to reboot or to reinstall programs, and if your laptop or server has crashed you'll know that pain particularly well. It's true also in a traditional setting: if you have tools on a server and the servers go down, they cause many, many man-hours of downtime. So resiliency is the ability to self-heal when things go wrong, provide a quick turnaround, and maximize uptime.

The DevOps pipeline should also be consistent. It should be deterministic, it should be reproducible, and it should be trustworthy. That's one of the benefits that code gives us: if we implement a
DevOps pipeline as code, then running that code in a consistent environment will deliver the same results each time.

So there's consistency, there's resiliency, and the third point would be scalability. You're probably in a situation that we encounter many times: code complexity is increasing at an exponential and often dramatic rate. We're seeing software complexity going up and up, and as that goes on, testability of the system gets more difficult, because the number of lines of code and the number of paths through the software are getting higher. Many of our customers are working in a functional safety environment, working to software standards such as DO-178B and those of the FDA, where you have to prove 100% code coverage and 100% unit test compliance. So the question is how we can test in these ever more complex situations.

Again, we were able to see that the DSM in Lattix could provide use cases and data that helped our testing workflow. For example, in this DSM you see which components are linearly independent from each other. What we can then do is parallelize the testing and the building of these components. For example, the task controller client and the file server client are independent of each other, so we could give the testing of these two components to two different teams, knowing that they wouldn't need to interact with each other. So we have this data that we can use to help with test prioritization.

Also, if you're struggling with long build times, we can use this to build in a smarter way. We have a concept called smart build, whereby from the DSM, from the architecture, we analyze which components are linearly independent from each other and then give this information, again as code, to the pipeline. The pipeline then delegates the building of independent components to build agents. Systems such as Jenkins and ElectricCommander, build infrastructure and CI
tools such as these, then delegate the building to these agents automatically, and then we can collect the individually built components from the build agents together to solve the bigger problem and reduce the build time.

We're also finding that as code complexity increases, if you have a million unit test cases, for example, to test a million paths, it doesn't make sense to rerun those tests with every code change. So what we've done at Verifa is implement something called change-based testing, whereby we analyze, again in the pipeline, which I'll show very shortly, the changes that are committed to the repository, work out the transitive closure of the change over the entire system, and then retest only those parts which are affected by the change. You can of course do that with any part of Lattix: you can mark any component as changed, and you can expand members to examine, right down to the member level, which components are in the system. Then, for any given function in the system, if we mark it as changed, it will compute the complete effect of that change on the entire system. So this is another source of data that we're giving to the pipeline.

Further, I've alluded to the fact that we can help with integration testing, unit testing, and code coverage. We have automated scripting capabilities to pass this data to other tools, such as testing tools like Cantata, which operates at the unit test and integration level. We can also load up test data from the test runs to see where we have sufficient coverage, where we have insufficient coverage, and where we have test passes and test failures.

With that in mind, let me show you the implementation of this inside the pipeline. Firstly, let me give you the standard view of IsoAgLib, the project we've just looked at in Lattix. What you're seeing here is our implementation of the pipeline in Jenkins, and as I say, we've provisioned everything as code.
So this whole infrastructure behind jenkins.demo.verifa.io is based on Kubernetes. There is a Kubernetes cluster to manage those build agents that I talked about, and Jenkins is the master that's orchestrating the DevOps environment. We're also using Docker, so all of the tools that you see, all the stages that I'm going to show in the pipeline, are implemented in separate Docker containers. The benefit of that is that we have this scalability and we have the self-healing abilities: if any part of the system goes down, the agents can be fired up automatically, and that's orchestrated and controlled from the Kubernetes master.

Here's an example of the Kubernetes code that we've written. We've provisioned all of this as code, and you can see that it's scripted as a YAML file, in which we explain the worker constructions and the tooling constructions, and the Docker instances are fired off from this Kubernetes pod YAML file.

If I click on the pipeline here and show you these different stages, what you're seeing is a start-to-finish software delivery pipeline. If you're working in an environment where software quality matters, then you need to take your code through various stages. You need to build the code, obviously, and let me fire a question to you: do you trust your build? Say you're having build issues: one minute your build is reliable, the next minute you do a debug build and it fails, or you need to rerun the build command two or three times before it actually delivers the executables that you're trying to build. If your build is maybe slightly untrustworthy, then this is one of the ways that we can help you: abstract the build and create a reliable build infrastructure that works every time. Also, as I say, if you're struggling with long builds, then this architecture-driven approach of doing the smart build will definitely help
you to build those components much faster, and if we can increase the speed of the build, we shorten our release cycle, that is, the time it takes to release our software.

So let me fire another question to you: what is the cost of a null release to you? A null release is this: if you change one line of code, how long at the moment does it take you to issue your version? What kind of process do you need to take your modified one line of code through before you can say that it's okay?

In Verifa terms, we talk about isolate, verify, merge. We talk about making a code change, isolating it away from the mainline, and then verifying the changes locally before committing to the mainline. Let me show you a picture of that. The reason we do that is hopefully clear. In a traditional context, where people are committing code continuously to a mainline branch, everybody is committing to the same place on that mainline. Just think: if my code change contains a bug, then as soon as I've committed it to the traditional development branch, it is visible to every other developer who's also writing and committing to that branch. That means, if I consider this in medical terms, I've contaminated or infected the main branch with my bug.

We all know that in English a bug is an insect, and insects grow, and bugs also have a life cycle, like an insect. It grows, and we need to decide whether we're going to eliminate this bug now or defer it. Often when we defer it, it gets bigger, and so the impact of the bug increases the earlier it's checked into the common code base and the longer it stays there. So that's the traditional way of working.

But if we isolate through pipelines and give developers the ability to test early and often, in isolation from the mainline, then what they can do is effectively spellcheck their code. They can check for bad dependencies. For example, in the C world, if source is
talking to library, that would be a good relationship, a good architectural use. But if I'm working in library and suddenly I create a dependency on source, then that would be considered a bad dependency that violates the architecture. That could be detected at build time with this system, by empowering the developers to test early and often with this pipeline.

When we do that, what happens is that the release cycle is much, much shorter. This is our experience at our leading customers. A lot of these ideas came from our work, firstly with Siemens Corporate Technology in China, as a research project that we undertook, and secondly with Siemens Healthineers in the US, where we developed some of these ideas precisely to shorten the lifecycle of a build and to be able to release faster, so that our innovation can increase and we can build and release much more quickly. Basically, if you consider this infinite loop, we're tightening it and making it as tight as possible between code, test, build, release, and deploy.

So that's a visualization of the pipeline; let me go back to the live view. Here, after the build, which is hopefully now optimal, as fast as possible because we've done smart build using the Lattix data, we then pass to the static analysis tools that we're using. That finds defects such as memory leaks, null pointer dereferences, buffer overflows, all of the things that you normally look for in static code analysis. Then, adjacent to that, we start the architectural analysis, which can be considered a form of static analysis as well, by implementing these rules and dependencies and finding violations against those rules. We tend to put Lattix in the middle of this pipeline because it speaks both to the left and to the right of these use cases. That is to say, the data that the architectural analysis provides can help with
the build, by assisting modularization and building components faster, and also, as I said, through the scripting capability we can serve data to your unit testing tool and integration testing tool. For example, one thing we did at our largest customer is automatically derive the partitions for integration tests from the architecture. Many companies that we work with struggle with integration testing, and the reason is that there's an infinite number of combinations of modules that can work together. But the architecture and the DSM clearly show which components naturally belong together; the partitioning inside Lattix does that. For example, you see the files here in the CAN component, and you see exactly which elements are being used by CAN. So we can automatically construct integration tests, for example using Cantata, in the Cantata framework, directly by scripting from the architecture.

When we did this, we found that the integration testing phase was more deterministic, which was one of our requirements of a DevOps pipeline. Not only that, it was also shortened by three months on a real embedded project that we applied this methodology to. That shortening of three months was achieved because the main use cases could be clearly identified from the architecture. So we run the integration tests for what we changed.

We can then run an open source scan. We have the technology now to analyze the source code and, for example, find which open source components you're using in your application. Maybe you're like me as a developer: when someone asks you to do something, the first place we go is GitHub, and we try to find something similar. I was asked recently to write a stack. I went to GitHub and found some stack code, and two minutes later it was in my code base. But nobody has checked that from a licensing, compliance, or security perspective, and many organizations are now aware of the risk that open source presents. Again, in the
DSM we can model the dependencies on third parties and clearly see whether there are links to code that we're not expecting. We can also check the APIs. In the architecture we clearly see the public APIs going out from our software to the external world, and how other components are using those APIs, right down to the member level. So we're also able to use this data to look at, for example, attack surfaces in our security policies, and to help make sure that all our APIs are as we expect them to be: that we haven't got any unexpected exposed data, and that data which should be private to certain components is not being made visible across the trust boundaries and across the interfaces. So we can also use the data in that part of the pipeline.

Let me go back to the various branches. As I say, we've used Kubernetes, we've used Docker, and all of the tools that we use in the pipeline are now inside Docker containers. These stages are automated in this way, and we're linking them together through this infrastructure-as-code and data-driven approach. We're passing data from the build to the static code analysis tools, to the architecture, and then right through the entire pipeline. So this data is freely available for you to have a look at.

Just to explain a little bit more about the backbone of the pipeline: we've covered the reasons why we would want to do this, testing at T0. Let me go to this picture here, which explains the backbone, which as I say is Kubernetes inside the Google Cloud, running these Docker containers. The advantage of Docker is that we can abstract the installations completely in a few lines of code, and you saw that in the YAML code. With a few lines of code we're able to specify that Lattix should be deployed in a pipeline in a virtualized environment, and then Docker takes care of the abstraction. In this case it sits on a Linux-based cluster. Docker takes care of
the main Linux kernel, and then the Docker instance puts Lattix on top of that Linux kernel. Again, the advantage is that the developers themselves no longer need to worry about settings for the tool. They no longer need to worry about downtime, or maintaining the server, or maintaining the data that's associated with running these tools; all of these things are taken care of. And if the system goes down, as I say, it can self-heal automatically by spinning up another instance of the Docker container. So these are some of the advantages, and where you would use this kind of system.

Let's look at some of the results. Let me just explain from this perspective. Once you've done all these stages, we're connecting on the left-hand side to your source repositories. That may be Git, it may be TFS, it may be Perforce, it may be CVS; you're using some kind of source configuration management system. I alluded to change-based testing. If you're committing code to your repository, which of course you are, what we want to do is validate those changes as quickly as possible, so that if there is anything which has broken any of our rules, our architectural rules, or broken the build, or introduced dependency violations, if any aspect of that change has introduced a regression, then we're able to validate it or reject it as quickly as possible.

Here you see the commit on the left-hand side. We would hook this up to your source repository, and then we can intelligently deduce, from the pipeline code that we have, by examining the dependency architecture, the transitive closure of every change that the commit made, and then just rerun those tests which are relevant to those changes. We were aware that some companies we were working with were blindly rerunning all the tests whenever a change was made, and sometimes the testing phase was
several hours. As I say, as code complexity increases, that's no longer a viable solution. You need smart build, and you need a smart approach to testing that's architecture-driven.

With this pipeline we have some heuristics and some data from a few customers that we've run this on. The number of broken builds, because we're doing isolate, verify, merge and giving developers the ability to find these issues at time zero, was reduced by 65% for one of our customers. So if you're struggling with this issue of broken builds, this is a proven, demonstrated solution that would help you to combat that build problem.

Building with Docker and this containerized tooling is definitely the way to go in terms of reducing the infrastructure and maintenance of your tooling. If you have a suite of tools that you're using to show compliance, testing, and static analysis results, then abstracting and virtualizing that into Docker would be an efficiency improvement for you.

But this is the way we do DevOps: we're putting the capital Q back into quality. It's a quality-driven DevOps pipeline that we're offering here, where we're not just focused on the build, but we're delivering a whole software delivery pipeline that automates as many of these stages as possible. The schematic overview is shown in this picture: we go from code, to build, to QA and testing, and release. Traditionally QA has performed this function here: static code analysis, architecture, unit testing, and open source analysis. What we are doing is linking this up. DevOps, of course, is the breaking down of the barriers between development and operations. It's the unifying of teams, the creation of cross-functional teams where your architect works with your developers, who work with the testers, in order to achieve the desired outcome and release software faster. And then at T0 we do static analysis and unit testing inside the developers' IDEs, but running this pipeline
locally. So that's the overview; this is the infrastructure that lies behind all of this.

If I scroll back to some of the visualizations that we have: when you're doing everything in code, you can of course send the data to servers. For example, this is Lattix Web running on top of this Kubernetes cluster inside the Google Cloud. Here we have published the DSM, in this case for IsoAgLib, in Lattix Web, and we can interact with it in a similar way to how the developer or the architect would interact with Lattix on the desktop. You can click on any dependency, see the usage of it, and interact with the model in this way. You can also see the cycles that are contained in this project, which is a form of technical debt. We have all the data served, and because we're continuous, we're also able to examine the trend of the architecture as we go forward. Have we got more stability in this build compared to the last build? What's our cyclicality looking like? What's our complexity looking like? We can make sure that we're trending in the right direction in a continuous way.

You can do all that with SonarQube as well. We can publish all the results from the static analysis, from dynamic testing, and from architecture analysis directly into SonarQube, where you get a holistic view both of the technical debt and of the remediation effort that you need to perform to achieve your quality goals.

One thing that we heard recently from a customer that we worked with to deploy this pipeline was that people stopped talking about the technical debt that was on the project. Obviously technical debt is quite a negative term. Like any debt, like financial debt, it needs to be repaid. Everybody understands that we can't keep deferring problems post-release, and so people understand that, like financial debt, it needs to be paid
back, and also that the cost of paying it back increases the longer the debt is outstanding. It's exactly the same with technical debt, and that's especially true of architectural debt, because if we incur dependency violations, or we introduce cyclicality, we introduce dependencies that break our intended architecture and that create a maintenance nightmare and spaghetti code.

So what we have found, and this is a wonderful quote from one of our customers, is that the whole discussion moved away from the terrible cost of having to pay back technical debt, and the cost of getting out when projects get into these situations, to the creation of technical wealth. What we can do using this approach is basically create reusable libraries. Our software assets become our asset base, so we can now talk about technical wealth, and the creation of technical wealth through reusable software assets such as libraries that are well defined, that have good dependencies, and that have great and secure APIs that can be reused time and time again, to great economic value for your projects and for your companies.

Many companies that we're working with have found that this is especially valuable for platform development. Our biggest customer is actually using this entire system to develop a platform that's going into many, many different medical devices. And again, the cost of a defect is extremely high, because if a defect comes into platform code, and that platform code gets propagated into many different devices, then you're looking at an exponential cost of a defect. With this system we're able to validate changes in the platform well before deployment, and to run pre-flight tests in the pipeline. So there's a very positive quote that we got: "You're helping us to create technical wealth," rather than, as in the past, the focus being on technical debt.

So, just to finish off with some additional screenshots from a
real project that we were working on: we found when we deployed the pipeline that code coverage increased, because the components were easier to test. Here's a visualization of some source files that were tested. The automated test case generation was a lot faster because the dependencies were well defined and well managed. Code coverage increased, and we were able to drive coverage metrics much, much higher on this project than had ever been achieved before. We were able to measure that and put it in as a quality gate in the pipeline.

So, just to bring our discussion to a close, and to remind everyone why we're doing DevOps pipelines:

We want scalability, so that as complexity increases the number of agents can scale seamlessly. We can cope with increased loads going forward on our build agents and on our build server, and we can accommodate the increasing demands of customers as they require many more builds and maybe multiple build configurations. All of that complexity can be managed in code.

We want to automate the analysis of the software and detect bugs earlier, at time t_x where x equals 0. We want to automate architectural compliance at t0, and enable architectural compliance inside developer desktop IDEs such as Eclipse, vi and Emacs, so that we can detect all violations much earlier.

And we want to feed data to the left and to the right of the pipeline to improve our build time, so as to reduce the cost of the null release, that is, of re-issuing our software after any change, and to measure that. Once we've optimized each stage of the pipeline, then of course we solve the bigger problem of the entire pipeline: how long it takes to run and to release our software.

So, just bringing this to a conclusion: the infrastructure that we have is reusable. A lot of it is open source, but it's being industrialised so that it works in industry, in what is commonly called industrial DevOps or DevOps 4.0. That means it's
hardened and robust enough to be used in production environments. It's the backbone for a production environment that delivers real software assets on critical projects, and I commend it on that basis.

From a certification perspective, I know there are many companies on this webinar that deal with functional safety and standards such as DO-178B and ISO 26262, or the IEC 62304 standard for medical. This process has been through audit, and it's certifiable in that sense. It actually solves many of the compliance problems that software development to these standards has, because of the automation that we have for architecture, for analysis and for the build. With that, it's easy to produce repeatable builds, to shorten the delivery cycle and to get the software shipped faster than ever before, which is obviously our goal as software engineers, our goal as DevOps engineers, and ultimately the goal of our software organizations: to be able to deliver this software as quickly as possible.

So with that I will bring this short demonstration to a close. Of course, if there are any questions, this would be a great time to ask them. Thank you for your attention and for your interest.

Yeah, thanks, Neil. It looks like we do have a couple of questions in the queue, so let me just start from the top, and I'll paraphrase these. The first question is: what is a good place to start with introducing this sort of DevOps architecture concept into my company?

So, a great place to start: we offer workshops and guided evaluations to take people through the steps of DevOps, and basically to start with an analysis of where you are as a company at the moment. Maybe some of these elements are part of your system already. Maybe you have some of the tooling that we've referenced. Maybe you have some infrastructure, something like Jenkins. We want to start where you are, and then we would typically perform a gap
analysis and discuss with you what is missing. We can show you the optimal solution that we've developed, as we've shown today, and then define a vision towards that. DevOps is a journey. It's a cultural change, sometimes, for organizations, and it's a journey that can take a long time. In one case we are three years into a one-year project, and that's simply because we start automating, and then people look and say, "This is great, what else can we automate?", and we slot a different task into the pipeline. With all of these pipelines, because it's code, you can code them in any way you like. For example, we just started to automate functional tests and smoke tests for one of our customers, which is towards the right-hand side of the pipeline.

So, to answer the question of where to begin: usually with some kind of audit. We would come in and have a look at what you have at the moment, then do the gap analysis, then discuss it with you and see whether you want to move towards this kind of vision. Another good question is: where is the current pain in the software at the moment? What's the bottleneck that you're experiencing? Is it with the build? Is it with the test? Is it with your retesting efforts? One customer we're working with has version proliferation, for example. They have multiple versions of the same library all over the system, and this is causing, annoyingly, a lot of pain and a lot of issues. We were able to capture that in our Artifactory-based system with JFrog. So one of the things we have here is proper artifact management as well, where every dependency is captured and served from Artifactory as code. I hope that answers the question.

And feel free to type questions in as we go along here. I'll just take the next question: what do I need to do to get buy-in to get something like
that started, and which groups need to be involved?

DevOps is about breaking down silos and breaking down barriers between development and ops. We've actually found that, if it is implemented properly, it breaks down many more barriers than that, and in many cases it creates cross-functional teams where there's a fluid exchange of data and information between architects and developers. QA are coming in too, because they're seeing that they can also automate many of their manually driven tests and put those into a pipeline. So typically we would bring together as many stakeholders in the software as possible. That's of course software management, the people in charge of the build, the people in charge of the release of the software, plus QA, the developers and the testers. We've found that DevOps touches the needs of all of those people, as Frank's slides right at the beginning showed: dev, test, design, and the delivery, the build and the release. So we will typically work with a small group of stakeholders with those concerns, and then bring them together to discuss how we can implement this pipeline and solve problems that are actually interdisciplinary in nature. The test team has certain concerns and the dev team has different concerns, but often they're two sides of the same coin, a reflection of the same problem expressed by those different teams.

One of the things I haven't said anything about is analytics. We have the ability, for example, to analyze the repository, see who is committing most tests and who is committing most source code, and visualize that. That kind of profile analysis also helps strategy, because we can see who has a tester mindset and who has a developer mindset, and very often those are different mindsets. Developers tend to be creative, testers destructive: I want to try and break code and prove that my colleague's code has a bug, and so that's the
typical mindset that I have as a tester. This kind of mindset is clearly visualized in the analytics, and we can use that to construct these interdisciplinary, cross-functional teams. So in effect we would typically invite all stakeholders who are interested in the software development process to take part.

I guess the next question, which dovetails into your earlier discussion, is on build improvement.

We've applied smart build at a number of customers, and the number who have this problem is increasing, it seems. We're talking more about the build than ever before, and it's not just build speed, it's build reliability. People are encountering the kind of problem where they have to run the make command twice because the make system, or the build system, doesn't understand that component A needs to be built before component B. Well, we solve that kind of problem, and obviously with the virtualization of the environment we're building a consistent environment that's reliable, so increasing build reliability. We certainly see a massive return on investment there because of faster builds. We got the build time down from 45 minutes to 6 minutes for one customer, and that totally changed the release cycle, because all the stages after the build were depending on a faster build. So we were able to deliver massive ROI on that particular project.

And certainly from the analytics perspective: because we're connecting to the repository, which has the commits and the commit logs, we can also analyze those to see what the common failures are, like build failures and compile failures. We can even adopt a machine learning approach to those and find, for example, deviations away from the norm. In one case we did a statistical analysis of the software and we found that a get-state call normally went together with a set-state call. That was the baseline, normal behavior, but then there were deviations away from that behavior that we managed to
determine through a kind of machine learning approach and then visualize. So there are many ways that the system benefits a project and produces ROI. Build is the most common, I would say, at the moment. But certainly to the right-hand side of the pipeline, we're finding that the test phase, which traditionally consumes fifty percent or even more of the entire software development process, especially in functional safety environments, has been much, much shorter using this approach because it's data-driven, and the integration testing phase is a lot faster with the scripting capability that we've shown.

Great. So the next question: have you integrated with the PVCS repository?

We do have an integration with PVCS, the source code repository, and that works out of the box, also connecting on the left-hand side to Jenkins and then doing the staged approach in the pipeline. So it's a good integration. It's probably not our most common integration, but there are several customers using this with PVCS.

Excellent. OK, next question: can we access different builds from JFrog Artifactory and generate delta reports between those builds through Lattix Web?

I believe that functionality can be added, and I'm wondering if Jacob is on the line to take that question, as our resident expert on JFrog Artifactory. OK, we'll come back to that. Maybe we take that question offline, and we will check with our JFrog Artifactory expert. I believe from the question that it can be done. JFrog Artifactory is an artifact management system. It automatically produces, for example, the bill of materials. The BOM shows exactly what the constituent parts of a release are, and you can do deltas between the bills of materials of two different releases. Mapping that data into Lattix is certainly possible. I would need to get back to you
on how that works in practice. So stick with us: we'll check with our experts, and I'll take that question offline.

Great, more detail to follow. OK, so the next question: what is the mechanism to automatically select tests based on the impact of a change determined in Lattix?

Change-based testing is one of the key benefits of using this platform. If I click back to IsoAgLib: what you can do is run one of our scripts, for example, to create a Cantata integration test, which works by selecting modules. We can do this in two ways. We can either say, generate tests automatically for each of these partitions. That partitions the architecture according to various algorithms, and those algorithms can include provider proximity, or names, or alphabetical listing. Those various algorithms group components together. Provider proximity would make sense, I think, for testing, because it basically groups things by where they're being used and where they're being defined. From these kinds of partitions we can generate, for example, Cantata integration tests directly.

The other way would be to manually select in the DSM the modules that you want to put into a test. You would right-click those modules and then go to the script and generate. You can either generate unit tests for each individual module you've selected in the DSM, or you could create an integration test. What that does, in this case, is build with Cantata, and it will then instrument all of the files for code coverage. You can then apply AutoTest to generate test cases automatically. Sorry, I don't have time to show that, but we can arrange a follow-up with the person who asked the question. AutoTest will be applied for the L1 tests, and then you could add your own requirements-based tests to the automatically generated test code. In many cases we can generate 100% unit test coverage out of the box, so we're giving developers a massive start on testing, and then
coming back into the DSM, you can load data from tools like Cantata back into the DSM to see where you have coverage and where you are missing coverage. Further to that, you would then be able to assess the impact of a change on the system. So, quickly, the way you do that is you mark what has changed. You can create a tag, and then you can basically do an impact analysis on that tag, and that would produce an impact report on the total transitive closure of that change on the system, to show you what else you need to retest. But you don't need to do that by hand, because the pipeline can do it automatically, so we can script that entire process for you. We can also identify which components are the most important in the architecture. We can do that by looking at the stability and the importance of the module, and we have a script to do that as well, to find any stable files in the architecture. That might be the subject of a future webinar.

Yeah, right. It sounds like we can actually go further into that. OK, I think with that, that's all the questions we had today. Thank you very much to everyone who attended. We did record this session, so we will be sending out the recording after this. If you have any questions, or you want to delve into this any further, just let us know. But thank you.
