Pipeline integrity data for product quality

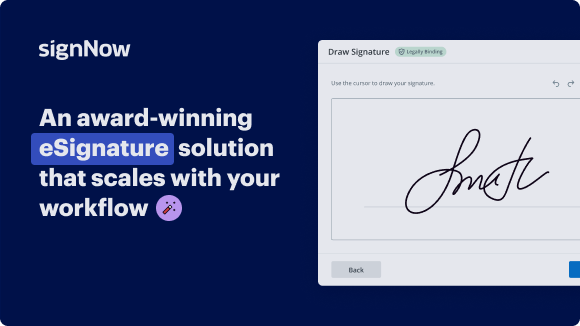

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.
- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.
- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

With airSlate SignNow, businesses can enjoy the benefits of a secure and efficient way to manage documents. From easy document sharing to customizable templates, airSlate SignNow offers a reliable solution for all your eSignature needs.

Experience the convenience of airSlate SignNow today and streamline your document workflow for better product quality.

FAQs online signature

-

How does Transnet Pipelines monitor the integrity of its pipeline network?

Transnet Pipelines continually monitors the integrity of its pipeline network. Internal inspection tools known as intelligent pigs are valuable devices for this work. They use the magnetic stray flux principle to detect and record possible areas of metal loss caused by corrosion or any other cause.
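To illustrate the "record possible areas of metal loss" step, here is a minimal sketch that flags inspection readings at or above a reporting threshold. It assumes a hypothetical, already-processed data format (distance along the line and estimated metal loss as a percentage of nominal wall thickness) and an arbitrary 10% threshold. It does not model the magnetic measurement itself or the output format of any real inspection tool.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    distance_m: float      # position along the pipeline (hypothetical field)
    metal_loss_pct: float  # estimated loss as % of nominal wall thickness (hypothetical field)


def record_anomalies(readings, threshold_pct=10.0):
    """Return readings at or above threshold_pct, deepest metal loss first."""
    flagged = [r for r in readings if r.metal_loss_pct >= threshold_pct]
    return sorted(flagged, key=lambda r: r.metal_loss_pct, reverse=True)


if __name__ == "__main__":
    run = [Reading(120.0, 4.5), Reading(845.5, 18.0), Reading(1302.2, 11.3)]
    for r in record_anomalies(run):
        print(f"{r.distance_m:>8.1f} m  {r.metal_loss_pct:.1f}% metal loss")
```

Sorting by depth of loss is one possible way to prioritize follow-up digs; an operator's actual criteria would come from its own integrity program.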

-

How do you ensure data integrity in a data pipeline?

Key steps to enhance data quality in your data pipeline:

- Understand data quality in the context of a data pipeline.
- Assess your current data quality.
- Implement data cleansing techniques.
- Apply data validation and verification strategies.
- Run regular data quality audits.
- Use automation for continuous data quality improvement.
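The cleansing, validation, and audit steps in this list can be sketched as a single pipeline stage. The record fields (`id`, `email`, `age`) and the rules are hypothetical examples, not a standard schema; the audit counter stands in for the regular audits the list mentions.

```python
from collections import Counter

# Hypothetical record schema: {"id": str, "email": str, "age": int or None}


def cleanse(record):
    """Cleansing: trim whitespace and lower-case the email field."""
    out = dict(record)
    if isinstance(out.get("email"), str):
        out["email"] = out["email"].strip().lower()
    return out


def validate(record):
    """Validation: return a list of rule violations for one record."""
    errors = []
    if not record.get("id"):
        errors.append("missing_id")
    email = record.get("email")
    if not email or "@" not in email:
        errors.append("invalid_email")
    age = record.get("age")
    if age is not None and not 0 <= age <= 130:
        errors.append("age_out_of_range")
    return errors


def run_stage(records):
    """Cleanse and validate each record; return (good, rejected, audit counts)."""
    good, rejected, audit = [], [], Counter()
    for raw in records:
        rec = cleanse(raw)
        errors = validate(rec)
        audit.update(errors)  # count each violation type for the audit report
        (rejected if errors else good).append(rec)
    audit["passed"] = len(good)
    return good, rejected, audit


if __name__ == "__main__":
    batch = [{"id": "1", "email": " A@Example.COM ", "age": 30},
             {"id": "", "email": "not-an-email", "age": 200}]
    good, rejected, audit = run_stage(batch)
    print(len(good), len(rejected), dict(audit))
```

Routing failed records to a separate `rejected` list, rather than dropping them, keeps them available for review; automating this stage on every run is one way to approach continuous improvement.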

-

How do you ensure data quality and integrity?

Here are some key steps to follow:

- Requirements definition: establish clear quality standards and criteria for high-quality data based on business needs and objectives.
- Assessment and analysis.
- Data validation.
- Data cleansing and assurance.
- Data governance and documentation.
- Control and reporting.
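One way to make "requirements definition" and "control and reporting" concrete is to write each quality standard as a rule with an agreed pass-rate threshold, then report which rules the data meets. The `QualityRule` type, the field names, and the thresholds below are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class QualityRule:
    name: str
    check: Callable[[dict], bool]  # True when a row satisfies the rule
    min_pass_rate: float           # agreed share of rows that must pass (0.0 to 1.0)


def assess(rows, rules):
    """Return (rule name, observed pass rate, meets threshold) for each rule."""
    report = []
    for rule in rules:
        passed = sum(1 for row in rows if rule.check(row))
        rate = passed / len(rows) if rows else 1.0
        report.append((rule.name, rate, rate >= rule.min_pass_rate))
    return report


# Hypothetical standards agreed with the business during requirements definition.
RULES = [
    QualityRule("customer_id_complete", lambda r: r.get("customer_id") is not None, 0.95),
    QualityRule("country_valid", lambda r: r.get("country") in {"US", "GB", "DE"}, 0.70),
]

if __name__ == "__main__":
    sample = [
        {"customer_id": "c1", "country": "US"},
        {"customer_id": None, "country": "US"},
        {"customer_id": "c3", "country": "XX"},
        {"customer_id": "c4", "country": "GB"},
    ]
    for name, rate, ok in assess(sample, RULES):
        print(f"{name}: {rate:.0%} {'PASS' if ok else 'FAIL'}")
```

Keeping the rules as data, separate from the checking code, also gives governance and documentation a single place to record what "high-quality" means.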

-

What is pipeline integrity?

Pipeline integrity (PI) is the degree to which pipelines and related components are free from defect or damage.

-

What is the structural integrity of a pipe?

Structural integrity means that the pipeline is designed to safely withstand all external and internal loads, including its own weight and all dynamic forces. In addition, the quality of all parts must be inspected at the time of installation.

-

What is an integrity test for pipes?

Pipeline integrity testing refers to various processes, such as hydrostatic testing, used to test the structural integrity of a pipe. Hydrostatic testing is used on pressure vessels such as plumbing systems and pipelines: the vessel is filled with a liquid, usually water, and pressurized to examine its strength and check for leaks. In this context, the vessel is the pipeline.
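A common back-of-envelope check for the internal-pressure load is Barlow's formula, S = P × D / (2t), which estimates the hoop stress in a thin-walled pipe from internal pressure P, outside diameter D, and wall thickness t. Hydrostatic test pressures are often discussed relative to the pipe's specified minimum yield strength (SMYS). The sketch below assumes consistent units (MPa and mm); the example pressure, dimensions, and SMYS value are illustrative assumptions, not requirements from any code or standard.

```python
def barlow_hoop_stress(pressure_mpa: float, outside_diameter_mm: float, wall_mm: float) -> float:
    """Hoop stress in MPa from Barlow's formula S = P * D / (2 * t)."""
    if wall_mm <= 0:
        raise ValueError("wall thickness must be positive")
    return pressure_mpa * outside_diameter_mm / (2.0 * wall_mm)


def percent_of_smys(stress_mpa: float, smys_mpa: float) -> float:
    """Express a stress as a percentage of specified minimum yield strength."""
    return 100.0 * stress_mpa / smys_mpa


if __name__ == "__main__":
    # Illustrative values only (assumed): test pressure, pipe size, and SMYS.
    test_pressure_mpa = 10.0
    stress = barlow_hoop_stress(test_pressure_mpa, outside_diameter_mm=610.0, wall_mm=12.7)
    print(f"hoop stress at test pressure: {stress:.1f} MPa")
    print(f"that is {percent_of_smys(stress, smys_mpa=359.0):.1f}% of an assumed SMYS of 359 MPa")
```

Barlow's formula ignores corrosion allowance, joint factors, and other design factors; an actual test plan would follow the applicable regulations.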

-

How do you ensure data quality in a data pipeline?

Checks to include in an example data pipeline:

- Uniqueness and deduplication checks: identify and remove duplicate records.
- Validity checks: validate values against domains, ranges, or allowable values.
- Data security: ensure that sensitive data is properly encrypted and protected.
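The three checks in this list can be sketched in plain Python. The field names (`order_id`, `status`, `quantity`, `email`) and the allowed values are hypothetical. For the security step, this sketch swaps in keyed hashing (HMAC-SHA256) to pseudonymize a field; that is not encryption, and a real pipeline would use a vetted encryption library and proper key management.

```python
import hashlib
import hmac


def dedupe(records, key_fields):
    """Uniqueness check: keep the first record for each combination of key_fields."""
    seen, unique = set(), []
    for rec in records:
        key = tuple(rec.get(f) for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def is_valid(rec, allowed_status, qty_range):
    """Validity check: status must be in an allowed domain and quantity within a range."""
    low, high = qty_range
    qty = rec.get("quantity")
    return (rec.get("status") in allowed_status
            and isinstance(qty, (int, float))
            and low <= qty <= high)


def pseudonymize(rec, field, secret_key: bytes):
    """Replace a sensitive field with an HMAC-SHA256 digest (keyed hashing, not encryption)."""
    out = dict(rec)
    out[field] = hmac.new(secret_key, str(rec[field]).encode("utf-8"), hashlib.sha256).hexdigest()
    return out


if __name__ == "__main__":
    orders = [
        {"order_id": 1, "status": "shipped", "quantity": 2, "email": "a@example.com"},
        {"order_id": 1, "status": "shipped", "quantity": 2, "email": "a@example.com"},
        {"order_id": 2, "status": "lost", "quantity": 5, "email": "b@example.com"},
    ]
    unique = dedupe(orders, ["order_id"])
    valid = [o for o in unique if is_valid(o, {"pending", "shipped"}, (1, 100))]
    # In practice the key would come from a secrets manager, never from source code.
    safe = [pseudonymize(o, "email", b"example-key") for o in valid]
    print(safe)
```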

-

How reliable are pipelines?

Pipelines are generally considered safe. They are widely regarded as the safest and most environmentally responsible way to transport large volumes of petroleum products and natural gas over long distances.

From here I'm given a sample of the data set in Snowflake. The key here is that this data didn't have to be moved out of Snowflake; we're just taking a sample that gives us more information about what the data looks like and how much effort we might need to clean up the data set. As I select the columns, I can also get additional profile characteristics of the data sample: the distribution and other statistics about the data set. The suite also uses artificial intelligence to group the columns into entities. In this case I see that I have a location entity, and if I scroll down the data set, I also see a couple of columns that have been grouped as contact details.

Now that I understand more about the data set, I might ask where to start: how do I clean up this data? This is where the suite makes it easy, by providing transformation suggestions that apply different data quality operators to clean up the data set. In this case it's recommending a standardization operator to clean up the country field, or I can use a verify and geocoding operator, which verifies the addresses and also geocodes them. The transformation preview lets me see what this operator will do before I execute the rule. It gives me a window into the original data as it exists within Snowflake, and it also shows what the transformation will do if I apply the operator. This lets me look ahead and see what the addresses would be changed into, along with additional geocoding information on the right, such as latitude and longitude and the different geocoding levels. The last thing the transformation preview does is assign these addresses a Precisely ID, which is a unique key that I can use to apply additional data enrichment attributes in my next step. This looks pretty good, so I'm going to apply this operator.

Now that operator is placed at the top of my pipeline. With my addresses verified and geocoded, let's make sure we can provide our data analytics team and our business users with some additional business context. This is what our data enrichment operator does. It is integrated with the data quality service in the suite, and using the Precisely ID we can append enrichment attributes that ABC Global doesn't have today, such as Precisely property and risk information, giving our users enhanced data context and richness.

Those are just a couple of ways we can apply operators to clean up the data without moving it out of Snowflake. Once I'm comfortable with the pipeline and ready to go, I can run it by deploying it back into the Snowflake environment. As the pipeline runs within Snowflake, the new data set is cataloged in the suite's data catalog, where data consumers and business users can use it for their use cases and reporting.
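The demo above uses a vendor suite's operators; the sketch below does not use that product's API. It is a plain-Python approximation of the same ideas under stated assumptions: profile a column, standardize country values with a small hypothetical alias table, attach a stable key derived from the normalized address (standing in for the vendor-assigned ID), and append enrichment attributes by that key. Address verification and geocoding against reference data are not modeled, and the field names and the `flood_risk` attribute are hypothetical.

```python
import hashlib
from collections import Counter

# Hypothetical alias table; a real standardization step would rely on full reference data.
COUNTRY_ALIASES = {"us": "US", "usa": "US", "united states": "US", "uk": "GB", "united kingdom": "GB"}


def profile(rows, column):
    """Row count, null/empty count, and the three most common values for one column."""
    values = [r.get(column) for r in rows]
    non_null = [v for v in values if v not in (None, "")]
    return {"rows": len(values), "nulls": len(values) - len(non_null),
            "top": Counter(non_null).most_common(3)}


def standardize_country(rows):
    """Map known country aliases to codes; leave unmapped values as they are (trimmed)."""
    out = []
    for r in rows:
        raw = (r.get("country") or "").strip()
        out.append({**r, "country": COUNTRY_ALIASES.get(raw.lower(), raw or None)})
    return out


def assign_address_key(rows):
    """Attach a stable key derived from the normalized address (stand-in for a vendor ID)."""
    out = []
    for r in rows:
        norm = "|".join(str(r.get(f) or "").strip().lower() for f in ("street", "city", "country"))
        out.append({**r, "address_key": hashlib.sha256(norm.encode("utf-8")).hexdigest()[:16]})
    return out


def enrich(rows, attributes_by_key):
    """Append attributes looked up by address_key; unmatched rows pass through unchanged."""
    return [{**r, **attributes_by_key.get(r["address_key"], {})} for r in rows]


if __name__ == "__main__":
    raw_rows = [
        {"street": "1 Main St", "city": "Springfield", "country": " usa "},
        {"street": "2 High St", "city": "London", "country": "UK"},
    ]
    print(profile(raw_rows, "country"))
    keyed = assign_address_key(standardize_country(raw_rows))
    enriched = enrich(keyed, {keyed[0]["address_key"]: {"flood_risk": "low"}})
    for row in enriched:
        print(row)
```

Each step returns new rows instead of mutating its input, which loosely mirrors the idea of previewing a transformation before applying it.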
