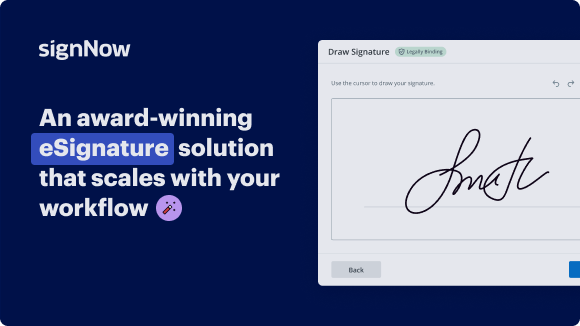

Streamline pipeline integrity data management for communications & media with airSlate SignNow

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.
- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.
- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Pipeline Integrity Data Management for Communications & Media

With airSlate SignNow, you can streamline your document workflow and increase efficiency. Say goodbye to the hassle of printing, signing, and scanning documents. Experience the benefits of secure and legally binding eSignatures with airSlate SignNow.

Sign up today and start managing your pipeline integrity data in the Communications & Media industry with ease.

FAQs online signature

- What does a pipeline integrity engineer do?

  A pipeline integrity engineer evaluates pipeline casings, road crossings, and water crossings; develops and optimizes inspection plans; identifies preventative and mitigative measures, re-assessment intervals, and re-assessment methods; and monitors integrity parameters to ensure reliable operations.

- What is pipeline integrity management?

  Pipeline Integrity Management (PIM) is the cradle-to-grave approach of understanding and operating pipelines in a safe, reliable manner. (Source: "Pipeline Integrity Management (PIM)", Inspectioneering)

- What is transmission integrity management?

  A Transmission Integrity Management Program is a process for assessing and mitigating pipeline risks in an effort to reduce both the likelihood and consequences of incidents.

- What is a pipeline integrity management program?

  An integrity management program is a set of safety management, analytical, operations, and maintenance processes that are implemented in an integrated and rigorous manner to assure operators provide protection for High Consequence Areas (HCAs).

- What are the issues with pipeline integrity?

  Flaws in the pipeline can be introduced by improper processing of the metal or by welding defects during initial construction. Handling of the pipe during transportation may cause dents or buckling, which compromise the pipeline. (Source: "Major Threats to Pipeline Integrity", PICA Corp)

- What is the integrity of the pipelines?

  Pipeline integrity (PI) is the degree to which pipelines and related components are free from defect or damage.

- What is pipeline in data management?

  A data pipeline is a method in which raw data is ingested from various data sources, transformed, and then ported to a data store, such as a data lake or data warehouse, for analysis. Before data flows into a data repository, it usually undergoes some data processing. (Source: "What Is a Data Pipeline?", IBM)

How to create outlook signature

Most of us will agree that data integrity is a pretty hot topic. So lucky for us, we have head of strategy, Michael Leppitsch, to help demystify this one with Ascend Data Pipeline Automation as a backbone. Michael will show you how to organize and implement an actionable framework that delivers truly trustworthy data to the business while avoiding complexity and unnecessary costs. Hi Michael. Welcome.

Hey, how are you guys? Hey, doing good. How are you doing? Good, good. Super excited. Glad to be here. All right. Take it away.

Yeah, so for today's topic we're actually previewing a white paper that's gonna be coming out very shortly on data integrity. And we have a specific perspective on this, but let's take a step back and talk about why data integrity actually matters. Well, it really matters because all business is becoming data driven, right? And so this data is increasingly interwoven across the business. And we are just seeing more and more sources of data, all kinds of sources, right? Transactional systems, databases, data lakes, APIs, even equipment, shipped products that are talking back to us, supply chain partners, external sources. All these sources of data are kind of operational systems, but the decisions for the business are actually made on analytics, right? On data that is assembled and put together specifically to answer certain questions, like KPIs that drive dashboards for business units, feed reports, you know, business intelligence, people doing research and analytics on where the company should go next and what the markets are. And increasingly we'll see more and more modeling, right? Machine learning, forecasting using ML and AI. And so between the source systems and these endpoints of data where the decisions are made, there's kind of a space, a gap between these two systems.
And we want the system on the right, this decision data, to be just as trustworthy as the raw data in the source systems that are running our day-to-day business. You know, where a customer's a customer, and a shipping address is a shipping address, and invoices are invoices. That is hard, trustworthy data that we're running the business with. We want to trust our analytics data and our decision data the same way.

And so this looks pretty simple, right? But we all know, we're all here to talk about data pipelines. We know that the space in between those two, the data moving from left to right here, is actually a bit of a black art, right? There's pipelines, there's APIs, there's file transfers, there's bespoke code, there's platforms, there's lots and lots of tools, third-party tools.

But this problem actually gets even more complex when you think about the data mesh, right? Within a company, what we just saw is actually a microcosm that happens within each business unit, right? The sales team has sales systems and has decision data that's all happening within that business unit, but you have that pattern inside every one of these business units. So not only do you have this need and this demand for trustworthy data to make decisions within each of these business units, but you're also shipping this data around, and all the business units are collaborating more and more and using each other's data to inform what's gonna happen next. Some of this, of course, has already been happening a long time. Integration between sales and supply chain, for example, has already been around for a long time. That integration has been at the domain data level where, you know, a sales order goes to supply chain management for fulfillment, for example. What's new is that increasingly these business units are sharing decision data with each other.
And so we just end up with kind of a hodgepodge of different data getting shipped around, and it turns into quite a mess. So let's get back to the topic though, because it needs to be done, number one, and the data needs to be trustworthy, right?

So why don't we talk about data quality? Well, we are talking about data quality, but we're putting a very specific lens on this. And the reason we're not taking a point of view on data quality is because it's a very crowded space, right? There's a lot of concepts, there's a lot of advice, there's a lot of tools, there's a lot of technology. But what we found when we talked to our customers who are looking to use data quality, or actually get trustworthy data out of their pipelines running on Ascend, is that what they needed was a simple framework to get to trustworthy data. So we kind of anchored on data integrity as a way of talking about how to get trustworthy data so that business can make confident decisions. And the framework we teased out of all this knowledge and insights out there around data quality actually became fairly simple. We found kind of a four-stage process that lends itself to implementation, to deliver trusted data, to make confident decisions. And that's what we're gonna go through today here. So we're gonna go through this step by step. I will say that this is kind of an overview. So we're gonna take bites off of this flow from left to right, and we're gonna start on the right.

So let's talk about what it means for data to be valid, and we don't need to make this up. There's lots of folks that have talked about what valid data actually means, and it's basically a category of validations and checks that are very much at the system level, at the bits and bytes level of data. Right. For example, is the data encoded correctly? If you're gonna be pulling data out of a file system of invoices, say, is the file that you're getting out of there actually readable?
Is it encoded properly? What format is it in? Is it JSON, Parquet, CSV, or is it a table? Right? Is it a change detection system? Then we're getting into the validity of the schema that's encoded in whatever that payload is. Do we have the right fields? Are the columns correct? You know, do we have good data types that are gonna be recognizable downstream? And of course, always detecting schema drift: having a mechanism to detect when the source system schema changes and being able to intervene when that happens. Is it correctly stored? Do we know where that data is gonna be located physically, right? Is it in the file system? Is it in a table? Is it behind an API? So that as we're acquiring that data, are we going to the right place to get the right data? And then this data, usually from transactional systems, is updated all the time, right? So one thing that matters here, in terms of validation, is what is the correct cadence by which we scan the source system or get the latest files. And there's all sorts of sources there and different kinds of file drops, table updates, et cetera. But that needs to be part of validation for us to just get solid that this data is actually, you know, physically computable. We can do this with technology, right?

So once the data is valid, the next phase is we actually add some things to the validated data. We're gonna perform some additional operations, if you will, to get the data to be accurate. And with accuracy, we're looking to get the data ready to be able to use for analytics, for joins, for merges, for filters, for all the business logic we're gonna perform on it. And we can do this with some cleaning operations. And so there's five categories here. One is just making sure the data's complete, right? Are all the fields and columns there that you're gonna need downstream? Do we have all the records? Looking for uniqueness, right? Redundant records are often a problem.
If you're getting sales information from two different sales systems, is there a redundancy there? You might have some fairly complex rules that de-duplicate those redundant records. You often can't make 'em go away because they're coming from two different systems, say, but you can have some basic rules and operations that actually perform that de-duplication.

And then consistency. This is a very specific requirement, looking at the precision, for example, of numerical data, or making sure that the data is only allowed to appear in terms of a set definition, and other kinds of constraints on the data in any of the sources, in any of the sets of data that you're getting. And you want to eliminate those kinds of problems by filtering or replacement, right? Again, there's these operations you can perform here to get the data to be consistent.

And then reconciling is the next level. Beyond that, it's actually reconciling different data sources against each other, right? If you're pulling a sales record set and it has customer IDs in it, you might need to do a crosscheck to your master data, make sure that customer ID actually exists, right, before you include it in a sales report. So there's some business rules that start to get into this part of the conversation. What does it mean to reconcile this data so you can actually perform these analytics and generate data products out of it?

And then finally scanning. There's a lot of tools out there that actually do scanning. What we're talking about here is scanning the data to identify outliers, right? You might have some rules about "data is an outlier when...", you know, when it's beyond the third sigma, or when it's just physically an impossible value. So again, filtering is a very common way of setting rules by which you're gonna filter the data based on scans.
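
A minimal Python sketch of three of the accuracy operations described in this part of the talk (de-duplication, reconciliation against master data, and a three-sigma outlier scan) might look like the following. The record layout and the field names `order_id`, `customer_id`, and `amount` are assumptions made for illustration; they do not come from the talk or from the Ascend platform.

```python
from statistics import mean, stdev


def deduplicate(records, key_fields=("order_id",)):
    """Uniqueness: keep the first record seen for each key."""
    seen = set()
    unique = []
    for rec in records:
        key = tuple(rec[f] for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def reconcile(records, master_customer_ids):
    """Reconciling: split records by whether customer_id exists in master data."""
    matched, unmatched = [], []
    for rec in records:
        if rec["customer_id"] in master_customer_ids:
            matched.append(rec)
        else:
            unmatched.append(rec)
    return matched, unmatched


def split_outliers(records, field="amount", sigmas=3.0):
    """Scanning: flag values more than `sigmas` sample standard deviations from the mean."""
    values = [rec[field] for rec in records]
    if len(values) < 2:
        return list(records), []
    mu, sd = mean(values), stdev(values)
    if sd == 0:
        return list(records), []
    kept, flagged = [], []
    for rec in records:
        if abs(rec[field] - mu) > sigmas * sd:
            flagged.append(rec)
        else:
            kept.append(rec)
    return kept, flagged
```

Each function returns the rejected records alongside the kept ones, so they can be logged or routed to an engineer instead of silently dropped. On very small batches a three-sigma rule cannot flag anything, so in practice the limits would likely be computed from a longer history of the data.
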
So the scanning is kind of a separate thing, very common in a data quality world, but it's one part of what happens here to get the data to be accurate.

All right, so let's say we've got accurate data. Now we've performed these operations, we're ready to actually perform business insight on it, and we'll call this stage reliable, right? So here's a couple more things to actually process this data for the business context, and this is very business centric, right? You actually need to know what the context is and what the analytic is that you're gonna be delivering. So what we're gonna focus on here is a couple of patterns that you wanna apply as you're performing those operations.

And the first one is shift left. You may have already run across this term. We see that in data quality increasingly. You wanna apply the operations, whether they're filtering or merging or joining, as early in that life cycle as possible, right? Because you're increasingly removing error from the data. And so the earlier you do that, the better. And that's what we've done with these four stages, by the way. We've basically shifted left as many of those operations as possible to drive this data integrity delivery mechanism.

And here the relevance of data is actually super important. You wanna make sure that you're only moving data through your pipelines, or through this process of making the data trustworthy, if that data is gonna be relevant to the business, to the decision-making process. You wanna make sure that all the needed data is present. And you also wanna make sure that the business logic that's implemented in this processing is actually implemented correctly, right? So this relevancy is pretty important. We'll see that pop up in another way a little bit later. And so what happens?
You can see that there's often multi-step processing that happens in the data as it's performing these analytics, but that processing needs to be done without errors. So this is no longer source system compute. This is like data compute. And you want to be able to self-audit that this reliable data stays reliable, right? That you actually detect, audit, and correct for failures and for errors. That you notify engineering if there's actually a problem that you can't resolve, right? If you detect a problem in the data that indicates that it's not reliable, notify engineering, and then also make it super easy for engineering to intervene and fix the error, right? You don't wanna have to stop pipelines for weeks on end to fix something. You wanna make it super easy for engineers to intervene, fix it, and get it back up and running, because that contributes to the trustworthiness of the data, right? Let's not forget what this is all about.

And then this final one, this is the point where you actually need business participation, right? And so we talk about data ownership or data stewardship. Here what we're talking about is actually having a business person participate and drive the definitions that define what reliable data is. The first two stages are very technical, right? If you remember, they're very engineering centric; data engineers or software developers can handle those. This is the part where you actually need a business person to participate. Like, what does this mean? What does weather mean? What does bad data, you know, good data mean? What's a large customer? What's a small customer? Those are all business conversations that are happening and are being embedded in this code. And as they participate, they're actually the ones that sponsor the trust in the data, right?
So we're introducing this human notion of other people, business people, trusting another human who is involved in the process of defining what reliable is, right? And so a super important vector for trust is to have this data steward in place. And you'll see this is something that's talked about all over data quality methodology. But this is where it fits here, right? In our methodology, it drops right into this defining the reliable data.

Okay, cool. So we have useful, reliable data that's ready for business to use and make decisions. So what's missing? This is the part where we're gonna do a little bit of auditing around the process, right? And this is kind of a catchall. But you wanna actually make sure that these systems are in place. Few of these things in this trustworthy step are things that you're gonna do at the end of your process. These are things that are happening throughout the process. But if an audit is done, these are the things you want to check the boxes on.

Number one, that the data has been secure throughout this whole process, right? The company has control over the full data life cycle. Encryption, transmission of data, and access are all secure.

The data's observable. All these processes are observable, in that any of the platforms that touch the data are monitored, track their operation, and generate logs, right? A super important part of observable is that the lineage of the data is traced throughout this process, end to end. Right. So that you can go back and audit, you know, six weeks ago when we had a sales report, what was that data? And you wanna be able to go back and have bulletproof lineage tracing and know end-to-end: What data was used? How was it used? And if there were problems with it, be able to pinpoint exactly where that problem was so that going forward you can make that data trustworthy. And then searchability and cataloging is also actually a part of this here.
That is an important part of what your data steward is gonna help you do: make sure that these data products at the end here are properly tagged, you know, so the people who find them are using business terminology to find them.

Timeliness is super important. This gets back to shift left, making sure you're running any of these processes as early as possible. Because if you have a problem, you want to know that as early as possible. You don't want to find out that your data product is bad when the business starts making decisions with it. So you wanna detect it as soon and as early as possible. And here's another one that's important for timeliness, which is, whenever possible, you actually want to process data continuously, right? In small batches. So if you're looking at doing like a month-end report on your sales process, maybe that's actually a daily process where you're taking each day's data and aggregating it. Then by the time you're running your month-end report, all the data's there, right? You're only waiting for the last day. So this timeliness includes this incrementality and running these processes in batches.

Then another thing about trustworthiness is that it actually needs to be used by the business, right? You want to track and log what data the business is actually using and how they are using it. What kind of decisions are they making with it? And if there's a data product lying around that nobody's using, you wanna detect that and deprecate it, right? It's costing you money, you're processing it, you're invested in it, but nobody's using it. You wanna get to the bottom of it and potentially deprecate it and take it out of the equation. So this business impact of data-driven decisions provides the feedback loop to make that data trustworthy, right?
If your colleagues are already trusting that data to make decisions, and there's a data steward sitting behind it, and all these trustworthy checkboxes are checked, you're gonna increase the trustworthiness and the use, the value of that data to the business.

And then finally at the bottom, this is the part where, you know, data pipelines are messy. All these data processes are messy. So you want the systems that you're using to drive these data integrity stages to self-monitor, to raise alerts and notifications, and to do that smartly, right? Not just logs that nobody reads, but actually smart alerts that involve the engineer to resolve the problems, right? Going back to the previous step. And then when there's no backlog of unresolved problems, that makes the data super trustworthy. You know, if I'm using a data product and there's no pending errors on it, there's no interventions needed, that raises the value of it and my ability to trust that data.

Okay, so let's bring it together. Obviously we've been talking about data pipelines, I hope that that's landed, to drive this process to generate trustworthy data for the business to be able to make confident decisions. And now let's talk about what intelligent pipelines are and connect the dots back to why we're talking about that here at Ascend.

First of all, intelligent data pipelines can drive data integrity. Because number one, there are sequential life cycle steps, right? These four stages. Each one of these stages probably has a lot of steps within it. But data pipelines are inherently sequential lifecycle steps, right? You can organize your operations in the pipeline and back it up with the automation to run those processes. Pipelines also do really well for shift-left processing, right? In particular using the Ascend platform.
A lot of the initial technical validation in the first stage, the valid stage, is actually built right into the platform, right? It checks for cadence, it checks for data types, it checks for schema, right? Those are all capabilities that are right in the platform. So it allows you to shift left a lot of the processing and drive it with the platform itself. And thirdly, intelligent pipelines, on Ascend in particular, do well when there's a platform that is automating these processes, these four stages.

And let's talk about what some of this automation can provide, and hopefully we're connecting the dots back now to the requirements at each of these steps, right? Number one, built-in monitoring. A platform that self-monitors, tries to self-correct, does reruns automatically, and finds problems automatically checks the boxes for a lot of the stage-specific steps within each of these stages that help you deliver trustworthy data.

Built-in alerting, right? You can connect to another alerting system, but the alert itself is generated within the platform.

Built-in lineage, making sure that the system itself, end-to-end, has no seams, from ingestion through the ETL, through the transforms, through the final delivery of the data product. And you know, in a trusted data set, a trusted data product, you've got this lineage back to back, and you can go back and audit it at any time and you can see it actually live, real time. You can trust that data because you can actually see this lineage update.

Built-in filtering. We saw filters show up several times here in each of these four stages. So you wanna be able to apply filters anywhere in the process, right at ingestion or at the very end, to apply these rules that make the data trustworthy.

And then these built-in validations, which sometimes take the form of fairly sophisticated business logic, right?
Maybe you need to encode 'em in Python, maybe in SQL. But these business-oriented validations are pretty important.

And all of this needs to be driven by orchestration. And so having that orchestration actually be built right into the platform makes these pipelines more and more intelligent, and makes the case to use intelligent pipelines to deliver data integrity. Right?

And at the end of the day, being able to do that efficiently, using costing mechanisms that are built into a platform that's measuring how much things cost, how much processing is being used to do these processes, gives you an ROI. Because you've got the cost side of this, but you can tie that back to the benefit generated by the business using these data products, and now you can tie those out. We can actually do some cost analysis on whether those data products are worth the processing costs that it takes to make them trustworthy.

And then some of the housekeeping in the very last stage, right, to make these trusted: access controls are super important. You want that in your platform so that there's no back doors. And lots more, right? We can go on and get more and more specific around Ascend functionality, running intelligent data pipelines to deliver trusted data to your business units so that they can make confident decisions, right? And so the punchline here, of course, is that Ascend data pipeline automation is specifically capable and uniquely positioned to be able to drive this kind of process, and do it while meeting all these requirements that we just went through to get the data to be trustworthy. So that's it. This was pretty quick, but let's open it for Q&A.

Perfect. All right, let's see what we got here. So first question is: we use data quality tools that identify statistical outliers. How do you integrate these with this approach?
Yeah, so you're talking about that step that I had under scanning, where you're scanning your data and you're looking for outliers. That is actually a pretty important part of data quality. Quality control is often a statistical process. And there's tools out there that do that, right? Ascend is not specialized in that. We're specialized on the pipeline piece. So what we've seen folks do is to actually invoke scanning tools on data sets in the pipeline that's being run on Ascend. And that's super easy to do. You can do that within a particular processing step. We call 'em transforms. You can actually perform a scanning operation. That scanning operation can produce data in its own right: outliers, what your barriers are, what limits you wanna set up. And then you can feed those kinds of parameters into the filtering subsequently in the pipeline. So that's a really good way to integrate these scanning platforms and statistical analysis platforms and actually get the feedback that they generate about your data, and pump that right back into the process to generate trustworthy data. So it's not just, you know, off to the side somewhere and somebody looks at it, you know, at the end of the week. This is something that can be tied in in real time.

Cool. So along the lines of trustworthy data, this question is related to knowing when data is actually the right stuff. So the question is: our IT teams are missing the intuition to know when data coming in just doesn't quite look right. So how can we teach them the nuances of what trustworthy data looks like?

Yeah, this one's super interesting. This came from a conversation that we had with one of our customers who actually started their data team with business analysts.
Like people who know the data, and they know what a customer looks like, they know what sales looks like, they know what a click stream looks like, not down to the bits and bytes, but certainly down to the field level. And so having those folks participate in this entire process, I know we talked about the data steward, right? The data steward is actually a key element to answering this question. But you can actually have data analysts be part of the data team, be part of the IT team, which, you know, often houses the data engineers or the actual delivery functions for data pipelines. So augmenting those teams with, and empowering, these business folks is the best way to do that. We have, you know, customers that specifically started data teams this way, so there's proof, and that's working really well for them. And then the best practice around the data steward adds on top of that. So you want the business involved pretty much throughout the whole process here.

Cool. All right. Last question. Our engineering teams build a separate pipeline for each report to avoid cascading errors. How do we get the results of different pipelines to stop conflicting with each other?

Yeah, that's a good one, right? That often happens when each pipeline is launched as its own project and the IT team goes and delivers. They go to the source system, get the data, they build the pipeline, and then deliver the data product, whatever form that takes. And they don't talk to each other. Right? So that is a very common scenario. I'm sure a lot of folks joining us here today are familiar with that. When you introduce some automation to deliver trustworthy data through these pipeline mechanisms, and you have a strategy kind of end to end: where do you add that value? Where do you uplift your data from validated to reliable to trustworthy, right?
Then those are good seams where you can start to reuse data. So weather data, for example, that has gone through the validation process: the bits and bytes are right, it's in the right file system, et cetera. You know where to get that data. And at that point you can start using that data down multiple pipelines. Or you can go one step further. You can go past stage two, where, you know, your fields are right, your columns are correct, your data types are good, right? You've got a lot of the data-level validation set. You don't need to do that twice in two different places, right? You can do that once, and from there apply the business logic, which is actually where the real differentiation happens between different requests for different data, right? One person wants to use the data to forecast supply chain logistics, you know, how many trucks should be staged where, for example, and another one wants to project sales with it, you know, depending on data. So you can take that data as late as possible down these stages and only fork your pipelines for the business part at the very end. And that's gonna remove a lot of the sources of error between pipelines that have otherwise been doing this in parallel and doing this over and over.

By the way, there's another huge benefit to this, and that's cost reduction, right? Some of these operations, for example scanning data, that's pretty expensive. Or reformatting data, et cetera. Some of these operations can be pretty heavy. So you want to just do that once; you wanna incur that cost just once.

I would just add to that, that your data stewards become part of the conversation, right? So your data steward for the sales data forecast might be a different data steward from the logistics and truck staging forecast, say, right?
And so they need to agree on this shared weather data, which is kind of a data product in its own right. Right? And we talk about that elsewhere. In fact, I'm gonna talk about that tomorrow in the session. That interim data product, this weather data, is something that they need to agree on. They both need to say, yeah, this is the weather data I'm gonna use. Let's use it for both of our forecasts. And now you have the business behind it, right? It's not just IT or engineers, it's actually the business agreeing that that is their reliable data. That's trustworthy data to use going forward for these forecasts. And that will also help close the gaps that are otherwise really hard to find. You know, if you're getting data pipelines saying different things to different people, that's really hard to get to the bottom of.

Great. So I think that wraps up all of our questions. Thank you so much, Michael. This was really great. Look forward to seeing, you said you were talking again tomorrow on another session.

Yep. I'm gonna talk about data products tomorrow with Alex. Looking forward to that conversation. And then we'll have a paper coming out on data integrity shortly. So keep your eyes peeled for that.
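
As an illustration of the last answer in the Q&A (run the validation and cleaning stages once on shared data, then fork only for the business logic), here is a minimal Python sketch. The weather record layout, the temperature limits, and both forecast rules are hypothetical and chosen only to make the pattern concrete; none of them come from the talk or from the Ascend platform.

```python
# Expected schema for the shared weather data (an assumption for this sketch).
REQUIRED_FIELDS = {"date": str, "region": str, "temp_c": float}


def validate(rows):
    """Stage 1 (valid): keep rows that have every expected field with the expected type."""
    return [
        row for row in rows
        if all(isinstance(row.get(name), kind) for name, kind in REQUIRED_FIELDS.items())
    ]


def clean(rows):
    """Stage 2 (accurate): drop physically impossible temperatures."""
    return [row for row in rows if -90.0 <= row["temp_c"] <= 60.0]


def shared_weather(rows):
    """Run the shared, potentially expensive stages exactly once."""
    return clean(validate(rows))


def truck_staging_forecast(weather):
    """Hypothetical logistics rule: one extra truck per region for each freezing day."""
    extra_trucks = {}
    for row in weather:
        if row["temp_c"] < 0:
            extra_trucks[row["region"]] = extra_trucks.get(row["region"], 0) + 1
    return extra_trucks


def sales_forecast(weather, base=100.0):
    """Hypothetical sales rule: 10% uplift on days warmer than 25 degrees C."""
    return {
        (row["date"], row["region"]): round(base * 1.1, 2) if row["temp_c"] > 25 else base
        for row in weather
    }
```

Both forecasts would be called with the single result of `shared_weather(raw_rows)`, so they cannot disagree about which weather rows are valid, and the shared stages are paid for once. The fork happens only at the business-logic step, which is also where each data steward's definitions would apply.
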
