Empower your life sciences business with pipeline management for Life Sciences

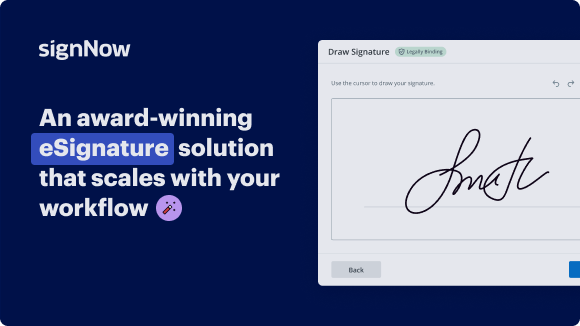

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.
- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.
- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Pipeline management for Life Sciences

With airSlate SignNow, you can easily manage your pipeline for Life Sciences by following these simple steps. Experience the convenience and efficiency of airSlate SignNow for all your document signing needs.

Streamline your pipeline management for Life Sciences with airSlate SignNow today!

FAQs online signature

- What is the biotech trend in 2024?

In 2024, bioprinting and tissue engineering are expected to be significant trends in the bioengineering industry. These technologies are evolving rapidly, offering promising prospects for medical applications. (Source: Labiotech, "What trends will shape the biotech industry in 2024?")

- What is pipeline analysis in pharma?

A drug pipeline is the set of drug candidates that an individual pharmaceutical company or the entire pharmaceutical industry collectively has under discovery or development at any given point in time.

- What is the life sciences industry outlook for 2024?

Life sciences in 2024 is expected to see cautious growth driven by strategic acquisitions and collaborations. While economic headwinds exist, particularly in China, overall M&A activity is predicted to rise, with pharma companies focusing on assets with high commercial potential.

- What is the life science market forecast?

The global life science analytics market in terms of revenue was estimated to be worth $27.1 billion in 2022 and is poised to reach $47.5 billion by 2027, growing at a CAGR of 11.8% from 2022 to 2027. (Source: MarketsandMarkets, "Life Science Analytics Market Size, Share, Trends and Revenue ...")

- What is portfolio strategy in pharma?

Pharma portfolio management refers to the strategic process of selecting, prioritizing, and optimizing a company's portfolio of drug candidates and products to maximize returns and minimize risks.

- What is the pipeline in biotech?

Biotech focuses on new drug development and clinical research to treat diseases and health conditions. The "pipeline" refers to the sequence of development stages a candidate must pass through, each of which has to be completed before moving on to the next one.

- What could be the future of life sciences?

These are exciting times in the life sciences industry, thanks largely to digital acceleration. Advances in AI, machine learning, and genomics are leading to significant progress in drug discovery and personalized medicine. Plus, digital health technologies like wearables and telemedicine are transforming healthcare. (Source: Netguru, "What Will the Life Sciences Industry Look Like in 2030?")

- What is the outlook for healthcare sector in 2024?

More than 60 percent of our survey respondents expect deal volume to rise in 2024. Health systems will pursue partnerships, especially with digital health companies and physicians, to grow share, build new revenue streams, and gain economies of scale. (Source: KPMG, "2024 Healthcare and Life Sciences Investment Outlook")

How to create outlook signature

So today I am going to be sharing a few thoughts about evaluating and choosing a data management system for life science research. As Steve indicated, hopefully we'll have some discussion as well, so please put those questions into the chat or into the Q&A and we'll address those as we go along. Probably every few slides or so I'll take a pause and ask Steve if we have any questions.

All right, so I'm going to start with a couple of premises. First one: your data is really valuable. Hopefully this is not new to you, but let's dig into this in a little more detail. If I ask you the question, as a research organization or as a research group, what is your most valuable asset, what would you say? Now, you might say it's my people, and if you said that, then I'm totally with you. That's what I would say about my organization, about LabKey. But what's second on the list? Hopefully your data is right up there, because you spend a lot of time and a lot of resources and a lot of effort collecting data: the instruments that you purchased, all the service contracts and support, the time and effort put into collecting data from those instruments, all the consumables. The data must be more valuable than what you're putting in to collect that data; otherwise, why would you be doing it? Likewise, if you're conducting clinical trials or observational studies, those are of course very expensive, and ultimately the goal is that data. So I'd at least argue that that data is one of your most valuable assets as an organization, and as a valuable asset you should secure it, you should protect it, you should manage it, analyze it. You need to be able to share that data, and you need to be able to do those things in an efficient and accurate and appropriate manner. And at least I
would argue you need a true data management system, a robust data management system, to handle that data, to handle that asset that is important in the business that we're in.

So my second premise, kind of a cliche, I know, but we operate in an ever-changing world. Example number one, pretty easy: anyone going through a global pandemic right now, or have been over the last year or so? Hopefully we're easing out of it. But that's just an example of one of the changes that you probably didn't predict, at least not with this level of specificity. Think back over the last year or so and how your existing data management system handled that shift, that pretty dramatic change. On the practical level, was your remote staff able to access your data management system seamlessly? Was your IT team able to manage and sustain that system? All the data that you collect and all the data that you need on a daily basis, was that available to everyone wherever they were? That's the pragmatic aspect. And then on a higher level, how did you as an organization, as a team, as a group, handle the shifts in terms of funding and potentially new research that you were asked to do? We at LabKey at least know of a lot of groups that had to shift their direction. They were asked to invest in COVID research when they were previously working on other immune-related or infectious disease type work, or maybe not even infectious disease work. They were asked to shift and pivot. Were they able to do that? Were their data systems able to make that shift along with them? That's an important example of why you need a fairly flexible data management system.

So that's one example that nobody really predicted, at least, again, not the specifics of that particular pandemic. But there are other shifts
and changes that you can predict. I can tell you, if you're here in this webinar, it's quite likely that your lab or your group or your team or your division or company is going to grow and expand, and with your team, your internal and external collaborators are going to grow as well. There are going to be some changes; people will come and go. But as a growing research organization, more people are going to need access to the data that you collect. Likewise, your research is going to shift in new directions: new instruments and assays and therapeutic areas. In general, the diversity of data is going to increase as you branch out and explore new areas and do new science. And as we've seen, data volumes are going to grow, and in some cases they grow exponentially.

Case in point: LabKey exists today because of a data scalability problem. Fred Hutch Cancer Research Center brought in myself and a couple of others, the co-founders of LabKey, to solve this data scalability problem with mass spectrometry-based proteomics. There were existing systems, CGI scripts with XSLT, that sent all the refined and filtered data directly to a browser, and that worked fairly well for 2,500 rows of data, 5,000 rows of data. But then new instruments came out, the LTQs, back in the day, 17 or 18 years ago, and those instruments were producing 250,000 rows per run, so an order of magnitude, 50 times, 100 times the amount of data that was there before, and the existing system broke down. That's fundamentally why we created LabKey: to solve that problem, to create a system that could scale much better than the existing system. Now, we've seen this exponential growth in other areas: RNA sequencing, DNA sequencing, flow cytometry. With a lot of these different instruments, there's more data, there's more information,
more lasers, larger wells, higher-resolution images, all of those factors going into more and more data being produced by these instruments and by your groups.

Now, all these things that I've talked about, your group expanding and funding expanding and your impact growing and your research shifting into new areas and more papers, those are all good things, right? More data is good. If data is valuable, then more data is more valuable. But with these changes come some other things, like institutional scrutiny. Your institution, or in some cases regulators, are going to become much more interested, if they're not already, in the data you're collecting and how you're collecting that data. Do you have the controls and the processes and policies in place for compliance and security and privacy and tracking provenance of the data that you're collecting? And also, we've seen over the years from funders an increased demand for the ability to share that data, and share the data in meaningful ways. Personally, I think that's just starting. I think the funders are going to become more and more demanding in terms of what form data sharing takes, making sure that that data is useful to others, so that your science can be reproduced by others and duplicated, and in general society can benefit from it.

So my takeaway from this slide really is that you need to think about the future. You can't predict everything, but you can predict some things. You know the data volumes are going to increase. You know the number of users is going to increase. You need to take those into account when you're evaluating data management systems so you don't lock yourself into a system that's not going to grow with you.

So this is in some ways the key slide. If there's one slide you remember, make
it this one. We'll talk about each of these points in detail, but the general takeaway is: evaluate systems, and ultimately choose a system, based on scalability and flexibility that will help you with those future needs, that will make sure that as things shift, your system can shift with it. You want to anticipate these future needs as much as you can. You can't predict everything, but scalability and flexibility are key.

So I've outlined six different areas, or six different axes, that I think are useful for evaluating scalability and flexibility of these systems. First, robust security and compliance controls. I put that first because if you miss the mark on this one, it's game over, right? You just can't use a system that doesn't meet the security requirements and compliance requirements of your institution or of the regulators in your area. So that's job one.

Now, as we talked about, large volumes of diverse data: that's what most research organizations are dealing with, and they're going to get larger and more diverse. So make sure the system can handle a large variety of data, as flexible as possible in the data collection area, and that it can handle it in large volume. And that really means, in order to collect large volumes of data efficiently, you need automation. You need high-throughput data acquisition, and the system itself should do that work. You shouldn't have to write programs in order to get data into the system in a high-throughput manner. If I have to write the system to load up data, well, why did I buy a system? That system should be able to collect that data automatically for me and provide the help that I need to collect it.

Now, once that data is collected, the system really needs to be able to integrate that data, and what I mean by that is not just put it all in a big soup or throw it all into a file system. I mean provide
the capability to join that data by common keys and to be able to do the analysis that you need to do quickly and easily and efficiently and accurately. That means adding metadata to the system as well, because if you just have a bunch of data and the system doesn't understand anything about the data, that analysis is going to be much more difficult. So layering in metadata along with the data is key.

Then, once the data is collected and integrated and perhaps transformed in a variety of different ways, the system needs to be able to provide access to that data through the tools that you and your team and your users want to use. There shouldn't be limitations such as: you can only use system A to analyze the data out of my data management system, or you can only use Perl, or you can only use one specific language or one specific tool. The data management system should interact with all of the tools that your constituents want to use.

And then the last piece is really about the vendor, the producer of this system. You want to make sure this system is actively developed, that it's enhanced on a regular basis, that it's not static, and that you have support. This is not just a shrink-wrapped package that you pull off the shelf and you're on your own. You need to have a partner, a vendor that supports your work and invests with you.

So those are the six. I'm going to take a pause at this point and see if there are any questions yet.

No questions yet, but don't be shy, folks.

Yeah, if you think I'm off base, if you think I've forgotten a key principle, or you don't buy into all these, please speak up.

All right, so we'll go into these in a little more detail, starting with, again, number one, security and compliance. What does it mean to be scalable in the realm of security and compliance? What are some of the keys here? Well, there are of
course hundreds of controls that I could list as far as security and compliance is concerned, but here are some of the keys. We really want to make sure these systems integrate with the institutional systems that are out there, institutional systems for authentication and for authorization. These would typically be things like LDAP and SAML and perhaps CAS for institutional authentication, or maybe two-factor authentication is in place, Duo or a similar type of system. And also integrating with authorization systems; again, LDAP is commonly used for security groups, for instance. You want to make sure the system can integrate with what's in your institution; otherwise, it's not going to make its way into the institution. The IT team will most likely not allow that kind of system if it doesn't fit in with existing authentication and authorization.

As we talked about, the number of users could be large and will scale up to hundreds or maybe even thousands of users, so your system should be able to handle that and should be able to partition them based on named security groups so that your administrators can handle this. You need the ability to organize your data and to partition your data however you see fit. Maybe you organize your data by funding organization, maybe by lab, by study, by experiment, by therapeutic area. It could be anything. You should have the freedom to organize your data and partition your data so that only the appropriate groups are able to see data in each of those partitions. So you should be able to control access, and importantly, you should be able to report on these decisions so that you can audit, so you can verify, so that when things change over time, you're confident that you've made the right choices as far as access control.

And then of course you need a system that supports the appropriate compliance controls that you're under. So,
a few cases, in the U.S. at least: HIPAA, FISMA, CFR Part 11. Does the system support, first of all, the robust security controls that are inherent in these regulations? Does it also support robust audit trails and electronic signatures and user management features that are all required? So make sure that you know what regulatory compliance you need to adhere to and the controls that are required by those regulations.

Large volumes of data, and not just any kind of data, but diverse data. This means bringing together data of a variety of different forms and integrating it together. As an example, if you're conducting a clinical trial, you most likely have sample information, instrument data, clinical data, demographic data, and perhaps other types of data. You need to bring all of that data into one system so that you can analyze it in meaningful ways, efficiently and accurately. In general, you should be looking for data tables that can have hundreds of columns and potentially millions of rows. In some cases, like back in the proteomics days, we had tables with billions of rows at times, so systems should be able to handle those volumes of data. And importantly, the data types that you use and interact with need to be user-definable. You need to be able to customize them and define them, because, going back to the idea, the kind of data you're collecting is going to be changing quite frequently. You don't want a system where the vendor says, you can pay us and we will create the data types for you. You need to be able to do that yourself. The system should be able to conform to the data you collect. And then as you collect all this data, I've described it here as a consistent interaction
model, and what I mean by that is you and your team and your constituents, the users of your system, should be able to learn the system fairly quickly. Whether you're looking at sample information or assay data or clinical data or multiple different types of assays, the user interface and the models that you use to analyze that data and view that data, filter, sort, do ad hoc analysis and formal analysis, and create visualizations should be the same across all these different data types.

As we talked about, large volumes of data necessitate automation, automated data acquisition. That's really key for scalable, efficient workflows as you scale up the amount of data that you're collecting. But with that immediately comes the need for quality control. As I'm sure you know, data files are messy. They're not always perfect. Even data files coming off of instruments at times are not clean. So the automation that gets put in place has to support quality control rules and has to support user-defined transformations, where you say, if you see this kind of data, please transform it into something more standard. Simple example: NAs get translated into nulls, or visit names get mapped to a canonical set of visit names. You need to be able to implement and codify those rules as a part of these workflows. And even with that, things will fail. Machines fail, network connections fail, file systems fail, permissions fail. It could be user, it could be hardware, but things fail, and you need notifications to inform you when things have gone poorly, and then you need the ability to fix that and retry. And then even after you get the data in, you need more QC validation. Right after acquisition, you need to be able to view the data, see if there are any problems, annotate any of those problems, and then fix them. And then finally, we talked about partitioning data
and providing access to that data. The automation only works and is only efficient if all the data and metadata that gets automatically imported ends up in the right place, so you need a way to make sure that data gets directed to the appropriate partition. So I guess I'll stop and see if there are any questions.

No, no questions yet.

Okay. So, integrating all this disparate data. I gave a clinical trial example earlier, where samples and assays and clinical data are all joined and aligned based on participant and visit. That's the classic clinical trial example, so that I can very easily see clinical data associated with assay data, clinical data associated with the samples that were collected, and write reports and queries that analyze the data with that alignment in mind. So that's an example. Other examples: you need to have a system that is smart enough about this data. In some cases now we use clinical ontologies to annotate that data and to be able to make smart connections between data, so that we know, based on clinical ontology concepts that we've annotated, that these data in these separate data sets are really the same thing. They are comparable. We can create a meaningful chart across all of this different data. If the system doesn't have any knowledge about what the data means, then it's a fairly dumb system, right? You need these semantic clues so the system can help you normalize data, harmonize data, and align your data in smart and meaningful ways.

With regard to data analytics, you really need to talk to your constituents. Who's going to be using the system? What tools do they use? What languages do they use? What APIs do they need? You need to get that information first, before you do an evaluation of the system and whether it meets those needs. There are some systems that support a single API or a single
language. I would stay away from those systems. You want to see as wide a diversity as possible with regard to downstream analytic tools. Typically, systems will have some built-in reporting, built-in analytics, maybe visualization, statistics, etc., which is great. Evaluate that and see how well it stacks up to your needs. But then invariably, no matter what you do, there are those data scientists and bioinformaticists and data analysts who will want to use their own tool. They'll want to use R or SAS or Tableau or some other tool, maybe a tool that can be connected via ODBC or JDBC, which are standard APIs, or maybe they just want to use Python or Java or some other language. Again, assess your needs by talking to your constituents, and then look for a system that can support those particular tools.

All right, so that last point was really about the vendor and whether the system is actively developed. I'm sure you've all seen systems being sold that are not under active development. They're pretty static. They may be marketed and sold very actively, but the system itself is done. It's been built and nobody's maintaining it, nobody's enhancing it, certainly nobody's developing it. I would suggest you avoid those systems. And if you look at that user interface and it seems tired and hard to use, well, that probably means the system is also outdated and doesn't have a modern architecture. It's probably not going to evolve and grow; it's just a static piece of software. So I would encourage you to engage with a software partner who's really invested in your success, who has incentive to make sure that you meet all your needs and requirements and goals. What you want to look for is a system that has frequent upgrades, good support, has training, has the option of hosting
if you need that, if your in-house capabilities might grow and evolve or might be lacking. You want to see software that is enhanced regularly and has fixes.

And I added this last bullet point here, again one of these things we could discuss a little bit, but I'm kind of wary of open-ended pricing models. What I mean by that is a system that gets more and more expensive the more successful you are. So as you add users, maybe there's a per-user licensing model where every user you add, you pay more money. That's friction, right? That's going to make it more difficult for you to be successful, because the more successful you are, the more it costs. So I tend to prefer systems where you purchase them, you make an investment, and that investment pays off and is amortized the more people use the system. You amortize that cost across all the users. That's my preferred pricing model for these systems.

So you might wonder, how do you get answers to all of these questions? I listed a lot of factors that you should ideally be evaluating on. So how do you get these answers? Well, you have to ultimately go to the vendor and go to these systems and try them out and ask questions. One of the first places to go, of course, is marketing materials: the website, the slide decks, case studies, videos. Do those resources directly address those six principles that I outlined? If they don't, that's a huge red flag. Now, let's be clear, these are marketing materials, right? They can say whatever they want to say, and it's much easier to add a bullet point to a website or a slide than it is to actually develop that feature inside of the system. So you can't take it at face value, but what I'm saying here is that if the marketing materials don't even mention security, compliance, scalability, quality control, etc., then
that's just a huge red flag for you.

Okay, what else can you look at? Well, do they make a trial version available that you can just download and use, or that you can launch in the cloud, and get answers to the questions yourself? If they don't provide that, or if it clearly does not answer those questions or it's clearly lacking in some of these ways, again, that's a red flag.

Another resource, of course, is salespeople. The salespeople that you're engaged with, are they answering questions? Are they addressing these six principles? Are they answering the questions to your satisfaction? Again, if not, that's a red flag. And again, salespeople sometimes stretch the truth a little bit, so don't take it at face value, but if they don't have an answer for some of these questions, that's certainly a concern.

And then one of the last pieces, and one of the most important ones, I think, is referrals. Does this vendor provide referrals to satisfied customers who can answer the questions that you're asking? That is a great resource; definitely ask for those. And I'll just say, I know some of the folks on this call today have provided those referrals for us, for LabKey, and a huge thank you for doing that. It's super valuable to us, but even more valuable to your colleagues who are evaluating different systems, including LabKey. So we appreciate your efforts there.

So this is the only slide where I really talk about LabKey Server, and it's not really the focus of this presentation, but you probably won't be surprised to hear that LabKey Server stacks up pretty well against these principles. I mean, these are the principles we've been using over the last 17-plus years as we've developed LabKey Server and addressed the needs of hundreds of different research organizations. And so, not surprisingly, we have very robust security, with the kind of data partitioning and organization that we
talked about, role-based access, compliance controls, and integration with institutional systems. As I indicated up front, we've really designed this system from the start with scalability and flexibility at the core. We spent a huge amount of effort on automating data acquisition with multiple QC mechanisms. We have architected the ability to integrate large volumes of data and really diverse data types. That's a key for LabKey. We call ourselves at times a data integration platform, right? That means bringing many different types of data together and integrating those data in smart ways. And then more recently, in the last year or so, we have added very rich support for clinical ontologies, both for importing clinical ontology information, so actual clinical concepts as data, and also the ability to annotate all data in the system with clinical concepts. And you get to choose which ontologies you wish to use. It's a very general-purpose system. You import the ontologies that are important to you and make use of those within your different partitions of the data.

We have also invested very heavily in supporting all the popular data analytics systems, all the APIs, all the languages: all popular languages, JDBC, ODBC, and then all of the analytics systems like Spotfire and Tableau and Access and Stata, MATLAB, etc. They're all integrated with LabKey so that your constituents, again, can get at the data in the form that makes sense to them.

And then lastly, that team that I mentioned up front: we have 32 engineers working every single day to enhance the LabKey Server platform and the LabKey applications, to make sure that we're keeping up with the most recent developments, that we're enhancing the system, that we're making it more scalable, more flexible, more performant. We're addressing the needs that each of our clients have
and ask us to address. We're doing that; that is really our business. And we have a dedicated, really world-class services team that provides all the training, the best practices, and the help and support that you need. So that's what I have to share. Again, we'll see if we have any questions at this point.

Yeah, we have a couple of questions. Someone asks: how do we transform the data to medical standards like CDISC?

Right. I can speak to LabKey as opposed to other systems. Certainly there are systems out there that have built-in CDISC support. In the case of folks using LabKey, this is an important piece about the data analytics and integrations. We have integration with SAS, and I know several of our clients use that SAS integration to transform the data into CDISC formats, because SAS has that capability built in. So that's one example. There are other cases where folks will use a custom script written in, say, Python to transform their data into formats that are important to them. LabKey has a built-in study archive or folder archive format, and we've at least talked about shifting that and adding a provider for CDISC, potentially. It's not supported today. So I would say that today the most important way is to integrate with some of these other systems that already support those formats.

And I can actually speak to this in my portion; I have a demo which is relevant here.

Another question: how does LabKey handle data at scale? Are there upper limits for high-throughput data?

Data at scale for LabKey. So, the way we handle that: there's a whole pipeline in order to get data into the system. In our case, we oftentimes will use file watchers. I referred, I
think Steve might be showing some of this. But as an example, we use a file watcher in order to automate the intake of data without requiring any programming. That's important to me. Certainly you can write code to get data into the system, but you don't have to. So, a file watcher can kick off a pipeline, and first of all that pipeline needs to be very efficient and high-throughput. We have invested heavily in the pipeline piece and our data iterator infrastructure in order to very quickly and efficiently get data parsed and transformed, with those QC steps invoked along the way. It's important to have those steps, but it's also important that they be efficient.

Then there are decisions you can make about what data actually gets imported into the relational database on the back end of LabKey versus what data remains in the file system, in S3, or in some other blob storage. That's fairly key, because you don't necessarily want to pull all the raw data into a relational database if you're not going to query it. I always say: only put the data into the database if you need to query that data. If you just need to hold raw information, say reads from a sequencer, those can just reside in a file. So making smart decisions about where the data ends up is key.

For the data that goes into the relational database, we are relying on those relational databases to be efficient and to be scaled up. Fortunately, we largely use Postgres, although we support other databases as well, and Postgres is a good example of a relational database that you can scale up to fairly massive scale if you need to. That affects both the efficiency of storing the data and retrievals. When we host LabKey on behalf
of our clients, we typically host in AWS and use RDS, and we have the ability to scale that up fairly well. I'm not taking a lot of credit for that; that's really on the Postgres side. We're basically leveraging a lot of great database expertise on the part of these other teams. And again, that's a case where we are always keeping up and maintaining LabKey's ability to connect to the latest version of all of these systems. Whether it's Java or Tomcat or a relational database like Postgres, we want to make sure we're taking advantage of, and giving you the ability to take advantage of, the latest and greatest developments by those teams as well.
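
Editor's illustration: the intake pattern described in this answer (a watcher picks up new files, QC runs along the way, only data you need to query goes into the relational database, and raw files stay in file storage) can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not LabKey's file watcher, pipeline, or API. The function names, the table layout, the "non-empty fields" QC rule, and the choice of routing by file extension are all hypothetical, and Python's built-in `sqlite3` stands in for Postgres.

```python
import csv
import sqlite3
import tempfile
from pathlib import Path

# Hypothetical rule: only these extensions hold data worth querying.
QUERYABLE_SUFFIXES = {".csv", ".tsv"}


def qc_row(row):
    """Hypothetical QC step: every field must be a non-empty string."""
    return all(isinstance(value, str) and value.strip() for value in row.values())


def ingest(path, conn):
    """Route one file: tabular data goes to the database, raw files stay on disk."""
    suffix = path.suffix.lower()
    if suffix not in QUERYABLE_SUFFIXES:
        # Raw data (for example sequencer reads) stays in file storage;
        # only a pointer to it is recorded.
        conn.execute("INSERT INTO raw_files (path) VALUES (?)", (str(path),))
        return "file"
    delimiter = "\t" if suffix == ".tsv" else ","
    with path.open(newline="") as handle:
        for row in csv.DictReader(handle, delimiter=delimiter):
            if qc_row(row):  # rows that fail QC are skipped
                conn.execute(
                    "INSERT INTO results (sample_id, value) VALUES (?, ?)",
                    (row["sample_id"], float(row["value"])),
                )
    return "database"


def watch_once(folder, conn, seen):
    """One polling pass of a simple watcher; returns {file name: destination}."""
    routed = {}
    for path in sorted(folder.iterdir()):
        if path.is_file() and path not in seen:
            routed[path.name] = ingest(path, conn)
            seen.add(path)
    conn.commit()
    return routed


def demo():
    """Drop one tabular file and one raw file into a folder and run one pass."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE results (sample_id TEXT, value REAL)")
    conn.execute("CREATE TABLE raw_files (path TEXT)")
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp)
        (folder / "assay.csv").write_text("sample_id,value\nS1,0.5\nS2,\nS3,1.5\n")
        (folder / "reads.fastq").write_text("@read1\nACGT\n+\nIIII\n")
        routed = watch_once(folder, conn, set())
    rows = conn.execute(
        "SELECT sample_id, value FROM results ORDER BY sample_id"
    ).fetchall()
    raw_count = conn.execute("SELECT COUNT(*) FROM raw_files").fetchone()[0]
    return routed, rows, raw_count
```

Calling `demo()` routes the CSV into the `results` table (the row with a missing value fails the QC rule and is skipped) and records only a path for the FASTQ file. A production watcher would typically run continuously and react to storage events rather than poll a folder once.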
