How To Use Sign in Banking

Make the most out of your eSignature workflows with airSlate SignNow

Extensive suite of eSignature tools

Discover the easiest way to use eSignatures in banking with tools that go beyond signing. Sign documents and collect data, signatures, and payments from other parties in a single solution.

Robust integration and API capabilities

Enable the airSlate SignNow API and supercharge your workspace systems with eSignature tools. Streamline data routing and record updates with out-of-the-box integrations.

Advanced security and compliance

Set up your eSignature workflows while staying compliant with major eSignature, data protection, and eCommerce laws. Use airSlate SignNow to make every interaction with a document secure and compliant.

Various collaboration tools

Make communication and interaction within your team more transparent and effective. Accomplish more with minimal effort and add value to the business.

Enjoyable and stress-free signing experience

Delight your partners and employees with a straightforward way of signing documents. Make document approval flexible and precise.

Extensive support

Explore a range of video tutorials and guides on how to use eSignatures in banking. Get all the help you need from our dedicated support team.

Keep your eSignature workflows on track

Make the signing process more streamlined and uniform

Take control of every aspect of the document execution process. eSign, send out for signature, manage, route, and save your documents in a single secure solution.

Add and collect signatures from anywhere

Let your customers and your team stay connected even when offline. Access airSlate SignNow to sign banking documents from any platform or device: your laptop, mobile phone, or tablet.

Ensure error-free results with reusable templates

Templatize frequently used documents to save time and reduce the risk of common errors when sending out copies for signing.

Stay compliant and secure when eSigning

Use airSlate SignNow for eSignatures in banking and ensure the integrity and security of your data at every step of the document execution cycle.

Enjoy easy setup and onboarding

Have your eSignature workflow up and running in minutes. Take advantage of numerous detailed guides and tutorials, or contact our dedicated support team to make the most out of the airSlate SignNow functionality.

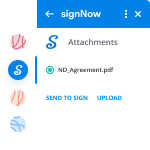

Benefit from integrations and API for maximum efficiency

Integrate with a rich selection of productivity and data storage tools. Create a more secure and seamless signing experience with the airSlate SignNow API.

Collect signatures 24x faster

Reduce costs by $30 per document

Save up to 40h per employee / month


Using eSignatures in Banking

Understanding how to use eSignatures in banking is important for handling your online financial transactions safely. With airSlate SignNow, you can streamline your document signing workflow while maintaining compliance and security. This guide walks you through using airSlate SignNow to sign and send banking documents.

Using eSignatures in Banking with airSlate SignNow

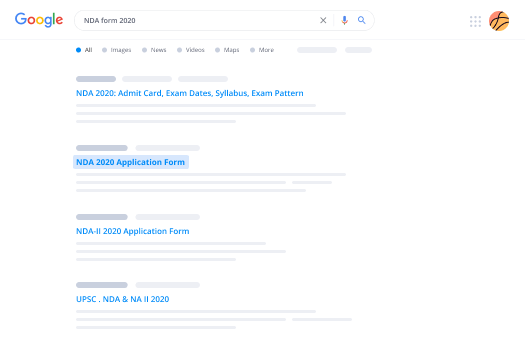

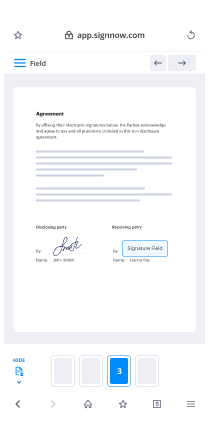

- Launch your web browser and go to the airSlate SignNow homepage.

- Register for a complimentary trial or log in if you already possess an account.

- Upload the document you intend to sign or send to others for their signatures.

- If you anticipate using the document often, consider saving it as a template for future reference.

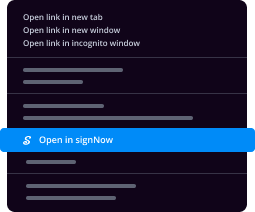

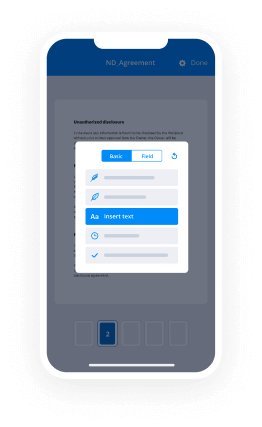

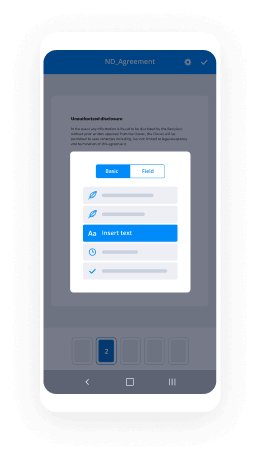

- Access your uploaded document to make necessary modifications, such as adding fillable fields or inputting required information.

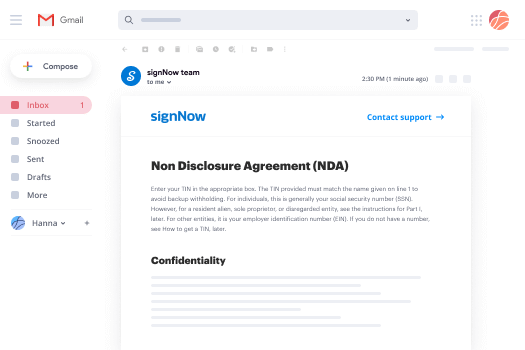

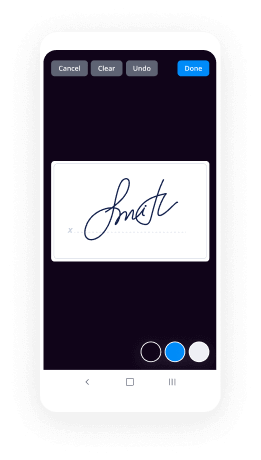

- Sign your document and assign signature fields for your recipients.

- Click 'Continue' to set up and send an eSignature invitation.

By adhering to these steps, you can leverage the capabilities of airSlate SignNow for your banking requirements. Its user-friendly interface and comprehensive features make document signing a seamless process.
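The steps above can be sketched as a small in-memory model. This is only an illustration of the order of operations (upload, add fields, sign, send an invitation). It does not call airSlate SignNow, and every name in it (`Document`, `SignatureField`, `Status`, `add_signature_field`, `send_invite`, the example email addresses) is hypothetical rather than part of the airSlate SignNow API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Status(Enum):
    DRAFT = "draft"          # uploaded, still being prepared
    SENT = "sent"            # invitation sent, waiting for signers
    COMPLETED = "completed"  # every signature field is signed


@dataclass
class SignatureField:
    signer: str              # hypothetical: email of the expected signer
    signed: bool = False


@dataclass
class Document:
    name: str
    fields: List[SignatureField] = field(default_factory=list)
    status: Status = Status.DRAFT

    def add_signature_field(self, signer: str) -> None:
        """Assign a signature field while the document is still a draft."""
        if self.status is not Status.DRAFT:
            raise ValueError("fields can only be added before sending")
        self.fields.append(SignatureField(signer))

    def sign(self, signer: str) -> None:
        """Mark every field assigned to `signer` as signed."""
        matched = [f for f in self.fields if f.signer == signer]
        if not matched:
            raise ValueError(f"no signature field assigned to {signer}")
        for f in matched:
            f.signed = True
        if self.status is Status.SENT and all(f.signed for f in self.fields):
            self.status = Status.COMPLETED

    def send_invite(self) -> List[str]:
        """Send the document out and return the signers still pending."""
        pending = [f.signer for f in self.fields if not f.signed]
        if not pending:
            raise ValueError("nothing left to sign")
        self.status = Status.SENT
        return list(dict.fromkeys(pending))  # de-duplicate, keep order


# Usage: the sender signs first, then invites the remaining recipient.
doc = Document("loan_agreement.pdf")
doc.add_signature_field("officer@bank.example")
doc.add_signature_field("customer@example.com")
doc.sign("officer@bank.example")
print(doc.send_invite())
doc.sign("customer@example.com")
print(doc.status)
```

Modeling the document as a small state machine makes the ordering explicit: fields are assigned while the document is a draft, and it only counts as completed once it has been sent and every assigned field is signed.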

Ready to streamline your document signing experience? Start your free trial with airSlate SignNow today and see how it can improve your banking operations!

How it works

Find a template or upload your own

Customize and eSign it in just a few clicks

Send your signed PDF to recipients for signing


- Best ROI. Our customers achieve an average 7x ROI within the first six months.

- Scales with your use cases. From SMBs to mid-market, airSlate SignNow delivers results for businesses of all sizes.

- Intuitive UI and API. Sign and send documents from your apps in minutes.


FAQs

- What is airSlate SignNow and how does it relate to banking?

  airSlate SignNow is an eSignature solution that lets businesses send and sign documents securely. For banks, it streamlines document handling so that required signatures can be collected efficiently and securely.

- How do I use eSignatures in banking for document management?

  First create an account with airSlate SignNow. You can then upload your documents, send them for signing, and track their status in real time. This simplifies compliance and makes banking operations more efficient.

- Is airSlate SignNow suitable for small banks and credit unions?

  Yes. airSlate SignNow suits small banks and credit unions that want to improve their document workflow. These institutions can benefit from a cost-effective eSignature solution that improves customer service and reduces processing time.

- What features does airSlate SignNow offer for banking professionals?

  airSlate SignNow offers several features that are useful for banking professionals, including customizable templates, secure cloud storage, and advanced analytics. Teams can use them to create tailored documents quickly while staying compliant and improving customer interactions.

- How does airSlate SignNow ensure the security of banking documents?

  airSlate SignNow protects documents with encryption and complies with regulations such as HIPAA and GDPR. Your documents are protected throughout the signing process to preserve their confidentiality and integrity.

- Can airSlate SignNow integrate with other banking software?

  Yes. airSlate SignNow integrates with various banking software and CRM systems. You can use these integrations to extend your existing workflows and make document management more efficient.

- What are the pricing options for airSlate SignNow in the banking sector?

  airSlate SignNow offers flexible pricing plans for banking institutions of different sizes. Compare the plans to find one that matches your organization's size and document volume.


Frequently asked questions

How do you make a document that has an electronic signature?

Upload the document to airSlate SignNow, open it, and add the fillable fields and information it needs. Then sign it yourself, assign signature fields for your recipients, and click 'Continue' to set up and send an eSignature invitation. If you expect to reuse the document, save it as a template.

How do I sign a PDF file?

a) Go to the airSlate SignNow homepage and log in, or register for a free trial.

b) Upload the PDF you want to sign.

c) Open the uploaded PDF and add any fillable fields or information it needs.

d) Sign the document.

e) If other people also need to sign, assign signature fields for them and click 'Continue' to set up and send an eSignature invitation.

What is an electronic signature on the computer?

An electronic signature is data attached to or associated with an electronic document that shows the signer's intent to sign it, such as a typed name, a drawn signature, or a click on a "Sign" button.

A digital signature is a specific kind of electronic signature that uses cryptography, so that a computer can check who signed a message and whether the message was changed afterward. The signer computes a signature value from the message and a private key, and a verifier checks that value against the message using the matching public key. Signing and encryption are separate operations: encryption hides the content of a message, while a signature only proves its origin and integrity.

For a sample message such as "HEY BUDDY", the signature is a separate value sent alongside the message. The message itself stays readable, because encrypting it is not necessary for a signature.
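To make the idea of signing and verifying a message concrete, here is a minimal Python sketch using only the standard library. It uses HMAC-SHA256, a shared-key message authentication code, as a stand-in for a real digital signature. Public-key signatures need a third-party cryptography library, so that part is swapped out here. The key and the helper names `sign` and `verify` are assumptions made for illustration, and nothing here describes how airSlate SignNow implements signing.

```python
import hashlib
import hmac


def sign(message: bytes, key: bytes) -> str:
    """Return a hex tag computed from the message and a secret key."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(message: bytes, key: bytes, signature: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign(message, key), signature)


key = b"demo-key-not-for-production"  # assumption: illustrative secret only
message = b"HEY BUDDY"                # sent in the clear, not encrypted
tag = sign(message, key)

print(verify(message, key, tag))        # True: message is unchanged
print(verify(b"HEY BUDDY!", key, tag))  # False: message was altered
```

The message is never encrypted in this sketch. The tag travels next to the readable message, and any change to the message makes verification fail.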

The following will be discuss...
