Assure Validated Field with airSlate SignNow

Do more on the web with a globally-trusted eSignature platform

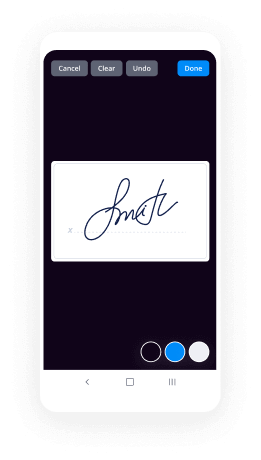

Remarkable signing experience

Robust reports and analytics

Mobile eSigning in person and remotely

Industry rules and compliance

Assure validated field, quicker than ever

Useful eSignature add-ons

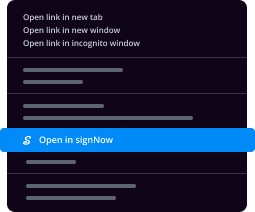

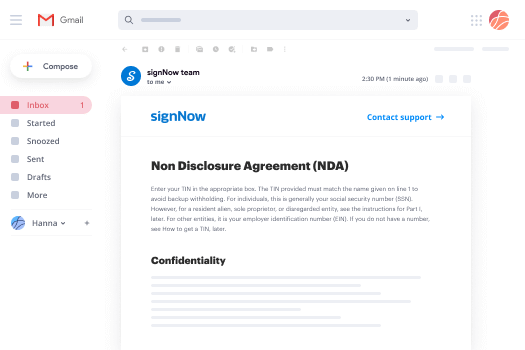

See airSlate SignNow eSignatures in action

airSlate SignNow solutions for better efficiency

Our user reviews speak for themselves

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.

- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.

- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Your step-by-step guide — assure validated field

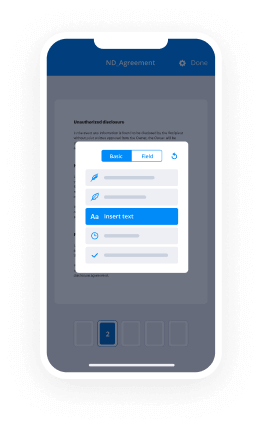

By adopting airSlate SignNow's electronic signature, any business can speed up signature workflows and sign online in real time, giving an improved experience to customers and employees. Assure validated field in a couple of simple steps. Our mobile apps make working on the go possible, even while offline! Sign documents from anywhere in the world and close deals in less time.

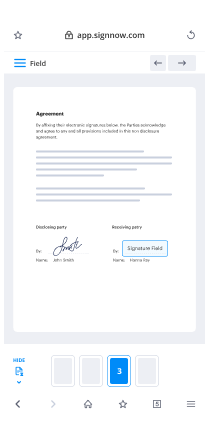

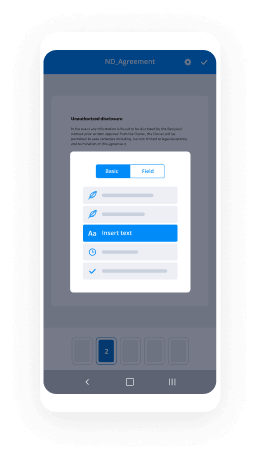

Follow the step-by-step guide to assure validated field:

- Sign in to your airSlate SignNow account.

- Locate your document within your folders or upload a new one.

- Open the template and adjust it using the Tools list.

- Place fillable boxes, add text and sign it.

- Include several signees using their emails and set up the signing order.

- Indicate which recipients can get an executed copy.

- Use Advanced Options to restrict access to the record and set an expiry date.

- Click Save and Close when completed.

In addition, there are more advanced capabilities available to assure validated field. Add users to your shared work environment, view teams, and keep track of teamwork. Numerous users across the US and Europe recognize that a solution that brings people together in a single, holistic digital location is what organizations need to keep workflows running smoothly. The airSlate SignNow REST API allows you to embed eSignatures into your application, website, CRM, or cloud. Try out airSlate SignNow and enjoy faster, smoother, and overall more productive eSignature workflows!

How it works

airSlate SignNow features that users love

See exceptional results assure validated field with airSlate SignNow

Get legally-binding signatures now!

FAQs


What is field validation?

Validation rules verify that the data users enter in a form meets the standards you specify before the form can be submitted. A validation rule contains an expression that evaluates data entered in one or more fields and returns a boolean value.
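As a minimal sketch of that idea, a rule can be modeled as a message paired with an expression that returns a boolean. The `ValidationRule` class, the `failed_rules` helper, and the field names below are hypothetical illustrations, not part of any airSlate SignNow API.

```python
from dataclasses import dataclass
from typing import Callable, List, Mapping


@dataclass(frozen=True)
class ValidationRule:
    """Hypothetical rule: the expression returns True when the data is acceptable."""
    message: str
    expression: Callable[[Mapping[str, str]], bool]


def failed_rules(form: Mapping[str, str], rules: List[ValidationRule]) -> List[str]:
    """Evaluate every rule and collect the messages of the ones that fail."""
    return [rule.message for rule in rules if not rule.expression(form)]


# Example rules; ISO dates (YYYY-MM-DD) compare correctly as plain strings.
RULES = [
    ValidationRule("Name is required",
                   lambda f: bool(f.get("name", "").strip())),
    ValidationRule("End date must not precede start date",
                   lambda f: f.get("start", "") <= f.get("end", "")),
]
```

A form would only be submitted when `failed_rules(form, RULES)` returns an empty list.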

What is validation testing with example?

Validation testing, carried out by QA professionals, determines whether the system complies with the requirements, performs the functions for which it is intended, and meets the organization's goals and user needs. ... Validation is done at the end of the development process and takes place after verification is completed.

What is field level validation?

Field-level keyboard events allow you to immediately validate user input: KeyDown, KeyPress, KeyUp. Form-level validation is the process of validating all fields on a form at once. It is usually called when the user is ready to proceed to another step.

What is validation and types of validation in asp net?

ASP.NET provides various validation controls that check whether the data entered by the user satisfies the conditions you set. These controls are RequiredFieldValidator, RangeValidator, CompareValidator, RegularExpressionValidator, CustomValidator, and ValidationSummary.

How many validation controls are there in asp net?

There are six validation controls available in ASP.NET. By default, the validation controls perform validation on both the client (the browser) and the server.

How do you validate a form?

Basic validation: first of all, the form must be checked to make sure all the mandatory fields are filled in. This requires just a loop through each field in the form to check for data. Data format validation: secondly, the data that is entered must be checked for correct form and value.
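The two passes described above can be sketched as follows. The field names and regular expressions are assumptions chosen for illustration; real forms would define their own.

```python
import re
from typing import Dict, Mapping

# Hypothetical form definition: which fields are mandatory and what they must look like.
REQUIRED = ("name", "email", "zip")
FORMATS = {
    "email": re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+"),
    "zip": re.compile(r"\d{5}"),
}


def validate_form(form: Mapping[str, str]) -> Dict[str, str]:
    """Return a mapping of field name to problem; empty means the form is valid."""
    errors: Dict[str, str] = {}
    # Basic validation: loop through the mandatory fields and check for data.
    for field in REQUIRED:
        if not form.get(field, "").strip():
            errors[field] = "required"
    # Data format validation: check the fields that were filled in.
    for field, pattern in FORMATS.items():
        value = form.get(field, "").strip()
        if value and not pattern.fullmatch(value):
            errors[field] = "bad format"
    return errors
```

Running the required-field pass first keeps an empty field from also being reported as badly formatted.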

What is the difference between validation and testing?

Verification is a process in which a design is tested (or verified) against a given specification before manufacturing. ... Validation is a process in which the manufactured design is tested for all functional and electrical correctness in a lab setup.

Why form validation is required?

Form validation is required to prevent web form abuse by malicious users. Improper validation of form data is one of the main causes of security vulnerabilities. It exposes your website to attacks such as header injections, cross-site scripting, and SQL injections.
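Validation is usually paired with safe handling of whatever input is accepted. The sketch below, which assumes a hypothetical `comments` table, shows two standard-library defenses in Python: parameterized queries against SQL injection and output escaping against cross-site scripting.

```python
import html
import sqlite3


def open_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical comments table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE comments (author TEXT, body TEXT)")
    return conn


def save_comment(conn: sqlite3.Connection, author: str, body: str) -> None:
    # Placeholders keep user input as data, so it is never parsed as SQL.
    conn.execute("INSERT INTO comments (author, body) VALUES (?, ?)", (author, body))


def render_comment(author: str, body: str) -> str:
    # Escaping on output prevents submitted markup from running in the browser.
    return f"<p><b>{html.escape(author)}</b>: {html.escape(body)}</p>"
```

Neither measure replaces validation; they limit the damage when unexpected input gets through.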

What is verification and validation in manual testing?

Verification is the process of evaluating products of a development phase to find out whether they meet the specified requirements. Validation is the process of evaluating software at the end of the development process to determine whether the software meets customer expectations and requirements.

How do you create a form in HTML?

The -

What is requirement verification and validation?

Requirements Verification and Validation. ... Validation is the process of confirming the completeness and correctness of requirements. Validation also ensures that the requirements: 1) achieve stated business objectives, 2) meet the needs of stakeholders, and 3) are clear and understood by the developers.

How do you validate information?

To validate data, appropriate tests need to be run, such as running the data through business cases, usability testing, and case models. To validate fluctuating data, appropriate meetings can also be set up to establish and authenticate the information, such as when you need up-to-date information for a status report.

What is validation in quality assurance?

Validation is "establishing documented evidence that provides a high degree of assurance that a specific process will consistently produce a product meeting its predetermined specifications and quality attributes." This is to maintain and assure a higher degree of quality of food and drug products.

How do you ensure validity in research?

When the study permits, deep saturation into the research will also promote validity. If responses become more consistent across larger numbers of samples, the data becomes more reliable. Another technique to establish validity is to actively seek alternative explanations to what appear to be research results.

What is validation and qualification?

Validation is an act, process, or instance to support or corroborate something on a sound authoritative basis. Verification is the act or process of establishing the truth or reality of something. Qualification is an act or process to assure something complies with some condition, standard, or specific requirements.

What active users are saying — assure validated field

Related searches to assure validated field with airSlate SignNow

Assure validated field

Again, I want to thank everybody for joining us for today's session, entitled "Leveraging Empower CDS Calculations to Assure Data Integrity in Your Chromatography Laboratory." Today's session is sponsored by Astrix Technology Group. Next slide, please. Before we get into the content and the presentation materials, I want to first take a moment and introduce you to Astrix Technology Group. If you've not joined us for one of our webinars in the past, you're in for a treat. The content is always great and educational, and we're glad to have you. We want to tell you a little bit about the company and who we are before we get into the presentations. We are first and foremost an informatics professional services and strategic outsourced solutions company dedicated to serving the life science community. We were established in 1995. The company is privately held. It originated within the IT division of a company called APBI, which at the time was a 300-million-dollar life science research organization. Today we operate from seven offices in the United States and Costa Rica, with our headquarters in beautiful Red Bank, New Jersey. The companies we help are Fortune 1000 life science enterprises, chemical, CPG, government, and research institutions, and companies with large and fast-growing IT, outsourcing, and compliance needs. The mission of the company has been and always will be to deliver scalable, sustainable solutions for the scientific community. Now, as far as the group that we're talking to today, we're talking to the Professional Services group of Astrix Technology Group, and you can go to the next slide, please. This group is responsible for scientific data systems. We are the group that delivers the end-to-end services that span the complete life cycle of all scientific data systems in a laboratory. This includes everything from the beginning part of these projects, which is the business process analysis and the enterprise architecture. This is where Astrix is often hired to come in and help design an
architecture of laboratory informatics technology and software. Once we do that, we often participate in the actual selection of the vendors and those different software applications. We work with a wide array of informatics vendors across a wide array of application types, and you're going to hear about one of them today, Waters. So we help to make sure that the solutions that you're choosing are the right solutions based on the size, the makeup, or any individual requirements that you may have. From there we help with the development and the implementation, and finally, on the back end of that, the computer systems validation work. There's also the ability for us to help you manage these systems after the fact through our 360-degree laboratory system services, and if required, we even have the capability to staff both scientific and technical resources in your organization to help with everything from the scientific side of the fence to the maintenance, management, and running of these applications. Okay, with that we'll go ahead and move on to the next phase, and that's introducing our speakers. We're always grateful to have Renan Nunez and Marcelo Suarez with us, so just a quick introduction. Renan is a lab informatics manager here at Astrix Technology Group. He works in the informatics professional services division, and his focus is on the company's CDS and SDMS practice. He brings over 18 years of experience in lab informatics, and during his career he has worked on over 30 Empower enterprise deployments as well as NuGenesis SDMS and LIMS implementations. Marcelo is a senior informatics engineer here at Astrix Technology Group, and he brings over 14 years of experience in lab informatics and analytical instrumentation. His scope of work ranges from implementation to training to support, third-party system control with Empower, CDS selection processes, systems best practices, and creation of custom calculations. So, you
know, we've certainly got two very good minds with us in today's presentation. I want to go ahead and introduce Marcelo. I think you're going to kick us off, and I'll sit back and enjoy the presentation. All right, thank you so much for the introduction. So, this is one of our last few webinars this year, and we decided to talk about chromatography-related calculations. Why do we feel this topic is important? Well, chromatography is one of the most important analytical techniques for many companies out there, and it can represent a huge amount of the workload in the lab. This means that the CDS, in our case here today Empower, will typically generate large amounts of data. Transcribing all that information to an external system can present huge risks from a data integrity perspective. Data integrity is a major concern in the regulated industry and is also a critical part of the FDA's mission to ensure the safety and quality of the products on the market. So today we're covering these topics. What is data integrity: we will explore the definition of data integrity and how it impacts calculation practices. General considerations for calculations and the scope of chromatographic calculations: we will define the characteristics of robust and compliant calculations, and we will identify which calculations should be done in the CDS and which ones should be avoided. How to make sure the calculations are doing what they are meant to do: we will identify some of the pieces that you need to put in place to create evidence that they are doing what you expect. The benefits of having all chromatography-related calculations in Empower, and why you should avoid using external applications for CDS-related calculations: we will talk about why the CDS itself is often the best location for these calculations and what you need to pay attention to in case you need to do them externally. Next slide, please. So, to start off, what is data integrity? As per
the FDA, data integrity refers to the completeness, consistency, and accuracy of data. Complete, consistent, and accurate data should be attributable, legible, contemporaneous, original, and accurate. These five words together form the famous acronym ALCOA. What do all those words mean? Attributable means that the data generated or collected must be traceable back to the individual who generated the information. Legible means that the data recorded must be readable and permanent. Contemporaneous means that the results or measurements must be recorded at the time the work is being performed. Original means that the data points to where it was originally observed. Accurate means that the data recorded must be complete, consistent, truthful, and represent the facts. The acronym ALCOA was then expanded to ALCOA+, which added four more concepts to the original idea, and you can see them highlighted in yellow here on this slide. Complete means that information that is critical to recreating and understanding a particular event is present, so this would include any repeats, re-tests, or re-analyses that were performed. Consistent means that the data are presented, recorded, dated, and timestamped in the expected sequence. Enduring means that data must be maintained intact and accessible throughout the life cycle. Finally, available means that the data or information must be accessible at any time during the defined lifetime of the record. Next slide, please. So, general considerations for calculations. Having the data integrity concepts in mind, we will discuss how Empower can help you address the integrity concerns associated with human interaction with data, and we'll show you how Empower can deliver calculations in a controlled environment. It's worthwhile for us to start the discussion by defining the characteristics calculations need in order to be performed in a robust and compliant manner. One, the calculations must accurately reflect the true reality of the test. Two, the calculations
and any eventual change, either before or during the result calculation, must be documented and available for review at all times. Three, ideally the calculated values should not be seen by the analyst until they have been recorded in a permanent medium. This principle is there to prevent users from picking and choosing which inputs will generate a result that fits their needs. Four, after any initial calculation is made, the history of changes made to the factors, dilutions, and weights used in the calculation must be preserved in an audit trail. For example, you're calculating an assay and you get a result of 200, so you stop for a moment, you review your inputs, and you see that you had an incorrect dilution factor entered in the system. You would have to change that dilution factor, right? The system should be able to capture and preserve the changes you made along the way. And lastly, all factors and values used in the calculation must be traceable from the original observations to the final reportable result. We will discuss where else chromatography-related calculations could potentially be done later in the presentation, but no matter where they're happening, it must always be possible for you to see the original data and the original outcome. In addition, there must be transparency, so that changes made to values along the way are easily captured for review. Okay, next slide, Marcelo. So, scope of chromatography calculations. The obvious things that come to mind when talking about chromatography-related calculations are the calculations of the actual reportable results from the run, let's say amount, percent impurity, total label claim, and dissolution results, and also the calculation of any system suitability test parameters. Examples of this would be percent recovery, bracketing standard agreement, retention time differences between multiple replicates, etc. The overall best approach is to keep all chromatography-related calculations in Empower, and ideally we should try to
focus on having only calculations that generate numeric values built in Empower. We will expand on some of the reasons for this in a moment. Next slide. Empower is able to handle multiple calculation requirements with all its functionality, but on several occasions additional custom fields are required, and this is really one of the things that makes Empower so special: its flexibility. Custom fields are fields that you can create in Empower. They're either input fields or calculations, and they allow users to input minimal data, let's say recovery factor, secondary standard dilutions, label claim, and so on, and then receive a calculated result that uses Empower database values. Here we have an example of a calculation requirement that Empower would not be able to satisfy using default functionality, so a custom field was created. In this case it's a ratio between the average area and the standard weight of two different standard preparations. I'm sure many of you can relate to this requirement, since this calculation is very commonly used to assure good standard preparation, and the result to be expected here is around 100 percent. Next slide, Marcelo. Precisely because Empower's tools for calculations are so powerful, once users get to know the system and understand its capabilities, they tend to start getting creative. One classic example of this creativity is using Empower custom fields to do specification assessments such as pass or fail. Look at this example here on the screen. If the numeric outcome of a calculation, percent agreement in this case, falls within the range of 98.0 to 102.0, Empower will translate the assessment to "pass"; if it falls outside this range, it will translate to "fail". In our situation here the result was 100.8, and we see that the assessment translated that to "pass". There's nothing wrong with having this calculation in Empower. It looks beautiful,
I understand, but it's important to remember what's really the most important thing to get out of your Empower calculations and what level of effort you need to put in to achieve it. Even though it's perfectly possible to build these calculations in Empower, as we're seeing right there on the screen, there are systems more suitable for these particular tasks, such as a LIMS, for example. Chromatography is just one of the many techniques you find in a typical lab, which means that other instruments are being managed outside of Empower. The systems that control these other instruments may not have the same tools as Empower, so you would need a separate system to do this final specification evaluation anyway. My point here is that you could be putting too much time and effort into something that will not solve all your problems. Don't forget: whenever you start creating calculations or fields in Empower to satisfy requirements, you somehow need to create evidence that they are doing what they are expected to do. And with that, I'll hand over to Marcelo. Okay, thanks, Renan. So now that we've seen that Empower has lots of functionality and lots of possibilities for calculations, with data integrity in mind, how can we make sure that whatever calculations I've created are doing what they were meant to do, and capture enough evidence of that? Some key questions to think about: Does the formula that I created meet my method requirements? Is the output of this calculation reporting the correct value? If it is supposed to give me 100, is it doing that? Is it agreeing to a certain number of decimal places? How should I test it to make sure that it is doing what I mean it to do? There are a couple of things you need to have in mind to make sure that your calculations are doing what they were meant to do, with data integrity in mind. First of them: create an SOP. Having a procedure in place is the best way to guarantee your
consistency during the development, review, validation, and maintenance of your formulas. Before you start adding any customized calculations in Empower, you first need to set up a proper environment for that to happen. Creating one or maybe multiple SOPs is the best way to start, as it not only guarantees a proper life cycle for the developed calculations but also sets up the Empower environment in terms of user permissions in the organization before you start. Here are some things that can be answered while you're building your SOP for that process. How many testing environments must be used? Should I have, let's say, a dev server, a prod server, a validation server? If I don't have the resources or bandwidth for that, I can do it all on one server, but I have to make sure that data is separated so I don't accidentally tamper with production data while doing development work. Those are things you can figure out while you're building your SOP. Another thing: which permissions should a user have to develop custom calculations without compromising data integrity? Take report methods, for example. Whenever you create a report method, you want to make sure that you have the proper level of documentation to show that it works correctly, only for its designed use, and only for the users who need to control it. Another thing: how to operate with data exported from external systems. Some labs already have Empower, and they can start building customized calculations based on SOPs. Other labs are coming from different CDS systems to Empower, let's say OpenLab or Chromeleon or any other CDS on the market, so they do not have any injections in their newly installed Empower. They will probably need to export data from those outside systems to Empower in order to work on developing calculations, so this can be accounted for as well as part of your preparation and the SOP for that operation. Another point to keep in mind: what is the required level of document approvals? Should I get
approvals from QC and QA? Who should sign, and who should be accountable for the documentation? And last but not least, what are the expected deliverables for each new calculation development? Should I capture screenshots? Should I include manual calculations? How many documents should I have as evidence of the development and testing of custom fields? On that note: create and collect solid documentation. The best way to document customized calculation development is to separate the development effort into smaller sets of documents. Instead of doing one huge validation of all calculations in Empower, you break that into small sets of work so it stays manageable. One of the best ways we've found in other projects we've worked on is to separate it by test method. For each test method in your laboratory there is a small set of formulas and calculations that go with it, so you break the work into small sections by test method and you do small cycles of validation: you create a protocol, validate for that test method, approve it, and move forward. That makes it easier to manage. The objective of these sets of documents is to demonstrate that the proposed customized calculations execute exactly the way the analytical test method requires your analysis to be calculated. Some of the documentation that you need to create and collect as part of your calculation effort includes, but is not limited to, the following. A work instruction, for example: you are creating new calculations in Empower, so people need to operate the system in a certain way for the calculations to produce the same results for every analyst. It's good to have a work instruction to help lab users operate Empower in a consistent manner, producing consistent results. Another document that is very helpful when you're leveraging Empower calculations is a calculation design specification. You have to think that not everybody
knows how to create customized calculations in Empower. There's a kind of learning curve to that, but you have to at least let people know what a formula is supposed to do. A calculation design specification is a good document for that, a sound document that shows people what a given formula is designed to do and what outcomes come from that formula. So even if people do not know how to create custom fields themselves, or how to read the formula written in the documentation, they know what to expect from that formula and what needs to be checked to see whether the formula does that or not. It helps reviewers understand what customized formulas were developed for and what their expected outcomes are. Another thing you need in order to make sure that your calculations are doing what they were supposed to do is a solid testing strategy. Visualizing what your testing plan is before you implement the formulas is key to fully understanding how to proceed with your testing. Take as an example the formula here on this slide. This is the exact same formula that Renan showed a couple of slides back. This formula is supposed to give you "pass" if your values are between 98 and 102, or "fail" if they are outside that range. Thinking about a testing strategy for this formula, how would you test it to make sure that it is working, and how would you document that? Looking at this formula, what are the possible outcomes? We can see at least five: values that are below 98, between 98 and 102, above 102 percent, and values that are exactly 98.0 and 102.0. Those are the possible outcomes of this formula, and I need to make sure that the formula is working for all of them. This is what a testing strategy is: figuring out what this formula is supposed to do, and then figuring out how you're going to test it and document it. So what
is a way of testing that this formula is working? It uses the recovery calculation right there, the percent agreement, which uses area and sample weight. One way of testing it would be changing the sample weights so the calculated value falls within each of those five conditions. That means you would need to process sample sets multiple times to get the values to reflect what you are trying to test. Another way to do this would be having multiple sample sets with values that fall within those situations, but it can be a little difficult to achieve the exact 98.0 or 102.0 value. So this is a second way of doing it, a little more difficult to achieve, but if you sit down first, build your testing strategy up front, and understand it on paper, that's the best way to figure out how to do it and how you should document it. So how do you document it? In this case, as this is just a pass-or-fail formula, one way would be screenshots capturing the value reported in the percent agreement calculation and the percent agreement assessment right beside it, showing the pass or fail under that condition. Another thing to make sure that your formulas are doing what they were supposed to do: one key topic to work on during validation of customized calculations is the option to leverage previously validated fields and formulas. If allowed by the approved SOP and the approach you built, any customized fields and calculations validated in previous protocols can potentially be reused in multiple projects. What does that mean? Let's take one formula that is known by everybody, like percent over label claim. You have your calculated value divided by the expected value, times 100.
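The label claim formula and the five-outcome testing strategy described by the speakers can be sketched outside the CDS as follows. This is a plain Python illustration, not Empower custom field syntax; the function names are hypothetical, and treating the 98.0 and 102.0 limits as inclusive is an assumption, since the talk does not say how exact-limit values are assessed.

```python
def percent_of_label_claim(calculated: float, expected: float) -> float:
    """Calculated value divided by the expected value, times 100."""
    if expected <= 0:
        raise ValueError("expected value must be positive")
    return calculated / expected * 100


def assess(percent: float, low: float = 98.0, high: float = 102.0) -> str:
    """Return "Pass" inside the range and "Fail" outside (limits assumed inclusive)."""
    return "Pass" if low <= percent <= high else "Fail"


# One test value for each of the five outcomes named in the talk.
BOUNDARY_CASES = {
    97.9: "Fail",   # below the lower limit
    98.0: "Pass",   # exactly on the lower limit
    100.8: "Pass",  # inside the range (the value shown on the slide)
    102.0: "Pass",  # exactly on the upper limit
    102.1: "Fail",  # above the upper limit
}


def run_boundary_cases() -> dict:
    """Assess every boundary value so the outcomes can be compared with expectations."""
    return {value: assess(value) for value in BOUNDARY_CASES}
```

Comparing `run_boundary_cases()` with `BOUNDARY_CASES` plays the same role as the screenshots in the talk: one documented piece of evidence per condition.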
Basically, almost all laboratories have some kind of formula like that, and it is repeated across multiple methods. One way you could address this is to validate it once, the first time it appears: get all your manual calculations, check that it works and meets what the method expects, and document that validation. Then, when you get to your second test method that uses the same formula, instead of revalidating everything, you can have the SME in your lab review that calculation to make sure that it accommodates whatever is needed for that method and is still applicable under the premise of the new method. You don't need to revalidate everything over again. You're still asking someone to look at it for you and make sure that it is still working, but you don't have all the effort of revalidating everything. So you gain momentum, and you make your validation effort much faster. Another thing to make sure that your calculations are doing what they're meant to do: follow change control processes. Consider what happens when a test method or procedure that has associated custom fields is changed after validation. I'm not just talking about the formulas. Let's say the analytical method changes for some reason, and that changes the limits of your test or the way you should calculate things. Now you need to pay attention not only to what you need to change in your methods but also to the calculations you created in Empower. As part of this change control process, an impact assessment must be performed to verify whether any changes to calculations in your method require changes to the formulas as well. Once you put calculations in Empower, it is a live system, so it is prone to change as time goes by, and you need to address it as a live system, as you should. So, with that said, with all this work and all these things that I need to do to make sure that
calculations are good and doing what they were supposed to do from a data integrity perspective, why is it good for me? What are the benefits of having all chromatography-related calculations in Empower CDS? What do I gain from that? One of the benefits is a decrease in the number of incorrect results due to typos, poor system integration, or intentional change. Think about this today in your lab: how many interactions and manipulations do your analysts have with your chromatography data from the moment the injection is done at the chromatographic system up until the moment you have the final reportable value? How many steps does your analyst go through to get from the original data, transcribing it into an Excel spreadsheet, making calculations on a form, or making hand calculations on a scientific calculator, and then transcribing it all back to your LIMS or SAP? That may vary from company to company, but if today you don't have any related calculations in Empower, chances are you are using one or maybe a combination of electronic spreadsheets, paper-based forms, calculators, etc. Even though it's not forbidden to use such tools, one must agree that the number of human interactions, and by extension human errors, can and will be much higher compared to a fully automated option. When we're talking about systems in general, human error is the number one source of issues such as typos. So investing your time and effort to embed your calculations in Empower not only addresses data integrity concerns but can also help your lab gain productivity, based on the additional points on the slides that come right after this. Another benefit of having chromatography-related calculations in Empower is centralized data for easier review processes. Here is another question to think about. Renan mentioned the
ALCOA definition that data must be original, let's take an example. Let's say you're going through an FDA audit right now and you're asked to provide the original data related to a specific analysis. Think about your environment: how many data repositories would you need to dig into to find the data you need to provide to the auditor? Would it be possible for you today to provide it in its completeness, in a timely manner? Would you need to look for paper-based records that are stored somewhere else, or would they be readily available to you? Centralizing all chromatography-related calculations in Empower not only helps you have original data in a searchable, readily available location, but also speeds up the routine review process. Tools like result audit trails and electronic signatures help your workflow happen much faster.

This brings us to the other point, which is a reduced review life cycle. Empower already has tools like the result audit trail and electronic signatures, and that shortens your life cycle between signatures and approval of batches. It happens much faster electronically, allowing you to focus on priority tasks. Instead of transcribing data over and over again to multiple spreadsheets just to get the final recovery calculation or percent of label claim, you use the time you would spend typing data into another system to focus on the next analysis, so you gain productivity. You have, of course, the overhead of developing the custom calculations, but once you're done with that, you gain that time back in your laboratory.

But not everything is achievable in a CDS. Lots of things are, but the other side of the story is that there are calculations that are difficult to perform directly in Empower or any other commercial chromatography system, especially those that have data spread across multiple months or multiple sample sets: let's say statistics across multiple sample sets, or stability studies that sometimes sit on
different projects, or data very far apart from each other, or trend graphs. These kinds of things are not impossible, but they are difficult to do in Empower and very effort-consuming to build. In these situations a spreadsheet is often used to perform the calculations, for example, but there are other alternatives to the CDS.

One of them is a LIMS or an ELN system. LIMS and ELN applications, if configured correctly, will generally have an audit trail capability for most analyst actions, and have the capability to perform calculations that are problematic for chromatographic applications, such as the multi-sample-set calculations mentioned before. Calculations like these are usually where a LIMS platform will really shine. That's the good part.

On a negative note, the interfaces between these types of systems can create data integrity weaknesses that you need to make sure you address. To name one example, let's say you have to send data from Empower out to one of those systems, and for some reason you have to rely on flat files, like a TXT file or a CSV file. If not properly configured, that data can be accessed and manipulated externally, using applications as simple as Notepad, a Word document, or an Excel spreadsheet, and then sent on to the LIMS or ELN for calculation. You need to be vigilant and create mechanisms, and evidence, that this scenario can't happen. You cannot have, for example, a shared TXT or CSV folder sitting on your network with no security set on it, with any kind of user going in and making edits to the files in that folder. That's one thing to keep in mind.

Another big scenario for LIMS and ELN is when you are manually transcribing information from one system to another. Let's say you do not have automated integration between them, so you have to actually print the results and type them into the other one. Anytime a human manages a numeric value, there's a risk that
digits in that number will be transposed in a small percentage of transactions due to typos or something like that. That's one thing to have in mind.

The third point to consider is that a LIMS or ELN can perform calculations such as linear regression for standard curves. You could do that in an ELN system, but a lot more work is required to set up the calculations in the LIMS or ELN than in the chromatography system. Even though the LIMS or ELN is more flexible, the chromatography system is better suited for a process it performs routinely. So, bottom line: if you are using a LIMS or ELN system to cover some of these calculations, both systems are fit for certain purposes. For chromatography calculations, there is nothing better than a chromatography data system. Creating calibration curves, or calculating amounts or corrected amounts, is routine analysis for chromatography systems, so there is nothing better than leaving the chromatography system to deal with chromatography data. Additional things like trends, or evaluation of requirements as pass or fail, are easily achievable in a LIMS. The bottom line here is to keep each system doing what it was designed to do, what it is fit for purpose for.

Another option: we still have spreadsheets. All companies use spreadsheets at some point in time. Before performing any chromatography calculations with a spreadsheet, let's remember the general considerations for calculations that Hannah mentioned earlier, and then answer the following question: which of the general considerations are met within a spreadsheet, like controlled access or the other considerations that Hannah mentioned? Nearly none. It's hard to lock an Excel spreadsheet in a way that people cannot tamper with it, so it's a very complicated environment to work with. I'm sure many of you are thinking that a case could be made for using validated spreadsheets. That might be true,
assuming someone else doesn't unlock the sheets and manipulate the calculations, and you would be surprised at how incredibly easy it is to unlock an Excel spreadsheet. A couple of Google searches and you would find answers to that, and you could break it easily. So although it might be more comfortable and user-friendly to calculate results in a spreadsheet, because spreadsheets accept basically everything, it is definitely worth the effort to put those calculations within Empower.

In addition to providing no access control or audit trails (an Excel spreadsheet doesn't provide those by default), a spreadsheet typically has manual data entries and permits an analyst, for example, to recalculate results before printing and saving the desired result values to the permanent batch record. People could be cherry-picking, putting values in and figuring out whether that works for them, whether it is a pass or a fail. You don't want that.

One way of working with spreadsheets without relying only on Excel, and giving them a coat of protection: some LIMS and ELN systems permit analysts to embed spreadsheets within the system to overcome the security limitations and missing audit trail capabilities of desktop spreadsheets. So if there's no way around spreadsheets and they need to be used, this approach of embedding your spreadsheets, putting intelligence on top of them, and bringing them into an ELN or LIMS, to give you the added layer of security, would be the preferable way to deploy them.

To summarize this presentation, we wanted to leave you with these final thoughts. Data integrity must be considered from the very first moment, and should even precede the implementation of Empower calculations. If you get your data integrity requirements evaluated up front, from the configuration specification of your system up to the way you build the validations, that's the best way to go in terms of implementing calculations. Always have integrity from
the get-go. Understand on paper first where and how you want to get your final calculated results. Understanding what a formula does, and what results you are going to get from it, on paper first: planning is the best way to figure out how you want to test it and what your final results are. So understand it on paper first, and plan before building the calculations.

Measure the effort versus the benefits of including certain calculations in Empower in comparison with external systems, not only in terms of technical challenges but also from a business perspective. Coming back to that example of having a pass/fail custom field in Empower: that's doable and fairly easy to do, but the problem is that Empower, even though it has some tools to do this kind of evaluation, is not meant for that purpose. It's best designed for numeric calculations. And even though it's easy to build one pass/fail custom field, imagine having to build multiple pass/fail formulas for all your evaluations, like resolution, or USP tailing, or your percent of label claim, or percent agreement. It can potentially double the number of custom fields you're creating for a specific test method, and that consumes development time, validation time, and so on. You will have to address those specific evaluations in an external system anyway, because you have pH meters in your lab, you have balances, you have NMR, you have GCs, you have a lot of other systems that might not be in Empower. So you will have to have a separate system anyway, and you have to measure whether the effort of building those calculations within Empower is really that beneficial in terms of what you get from it afterwards.

And last but not least: once implemented, it is a live system in a regulated environment, and it must be treated as such. Once you build calculations, you test them, you validate them, you create your validation documentation, and you're good to go. If
changes happen to your test methods, you also have to take into account the calculations you built, so you don't end up with a method that was altered for some reason and formulas that are giving you results that are not fit for purpose anymore. You might have issues over that.

With that said, there are additional materials on the Astrix website regarding Empower, such as case studies on the benefits of using Empower custom fields in a regulated environment. To check these and other great case studies, blogs, and articles, you can go to astrixinc.com. You can also visit our YouTube channel to check the webinars from previous months. Let's say you were invited to a webinar but for some reason weren't able to watch it: you can go to our YouTube channel and look it up, and there's a data integrity assessment webinar there as well. If you go to YouTube and search for Astrix Technology Group webinars, you can subscribe to our channel and you'll have access to all the previous presentations. This is also the place where you're going to receive the link next week for this recording. So if you want to check the past webinars, they're here for you right now. All right, and with that, I'll leave it up to Kevin.

Thank you. I'm sorry about that, I was on mute there for a second. I wanted to go ahead and dig into the questions a little bit, but first of all, thank you for a great presentation, and again, thank you to everybody who joined and attended. I do want to encourage you all, if you haven't, to please ask questions. We're going to let the system sit here for a minute or two while we hopefully gather some questions from some folks, and again, I'll mention that we're going to go ahead and produce a recording of this. I'm going to
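
As a rough illustration of the flat-file concern raised in the talk (a TXT or CSV export being edited somewhere between the chromatography system and the LIMS or ELN), the sketch below records a SHA-256 digest when a file is exported and checks it again before import. Everything here is an assumption made for illustration: the file name, the function names, and the workflow are hypothetical and are not part of Empower or of any LIMS or ELN product. A checksum only detects an edit after the fact; it does not replace folder permissions, access control, or an audit trail.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_unmodified(path: Path, recorded_digest: str) -> bool:
    """True only if the file still matches the digest recorded at export."""
    return hmac.compare_digest(sha256_of(path), recorded_digest)


def demo() -> tuple[bool, bool]:
    """Export a tiny CSV, verify it, change one value, verify again."""
    with tempfile.TemporaryDirectory() as folder:
        export = Path(folder) / "results.csv"  # hypothetical export file
        export.write_text("sample,amount\nS1,98.7\n")
        # In practice the digest would be stored away from the shared folder.
        recorded = sha256_of(export)
        before = is_unmodified(export, recorded)
        # Simulate someone opening the file in a text editor and editing it.
        export.write_text("sample,amount\nS1,100.7\n")
        after = is_unmodified(export, recorded)
    return before, after


if __name__ == "__main__":
    print(demo())  # (True, False)
```

The check passes on the untouched export and fails once a single value is changed, which is the kind of evidence the receiving system could require before accepting a file.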

Frequently asked questions

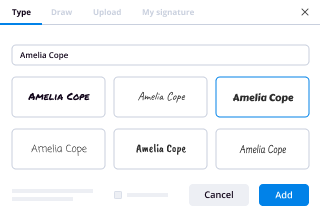

How do I add an electronic signature to a PDF in Google Chrome?

How do I add an electronic signature to my document?

How can I copy and paste an electronic signature to a PDF?
