Test Validated Field with airSlate SignNow

Do more online with a globally-trusted eSignature platform

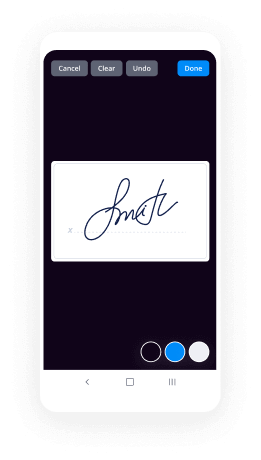

Outstanding signing experience

Trusted reporting and analytics

Mobile eSigning in person and remotely

Industry regulations and conformity

Test validated field, faster than ever before

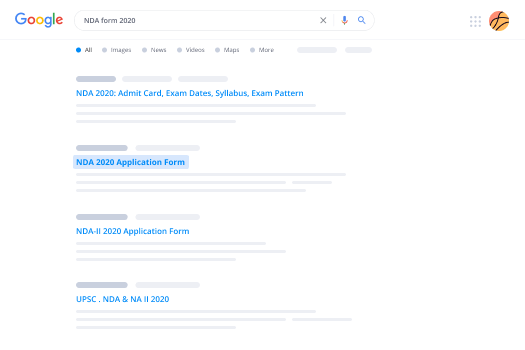

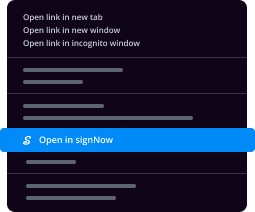

Handy eSignature extensions

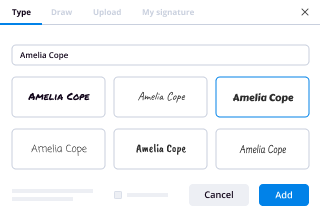

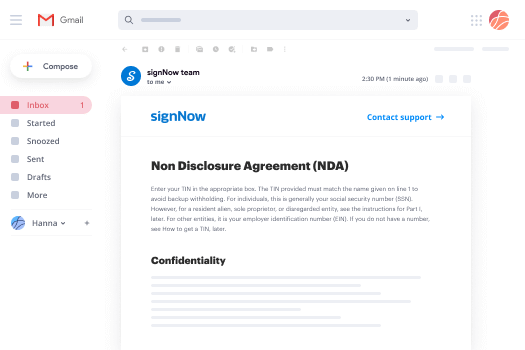

See airSlate SignNow eSignatures in action

airSlate SignNow solutions for better efficiency

Our user reviews speak for themselves

Why choose airSlate SignNow

- Free 7-day trial. Choose the plan you need and try it risk-free.

- Honest pricing for full-featured plans. airSlate SignNow offers subscription plans with no overages or hidden fees at renewal.

- Enterprise-grade security. airSlate SignNow helps you comply with global security standards.

Your step-by-step guide — test validated field

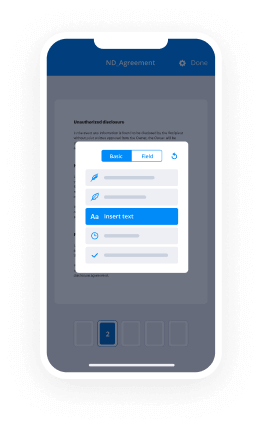

Using airSlate SignNow’s eSignature, any company can accelerate signature workflows and eSign in real time, delivering a better experience to customers and employees. Test a validated field in a couple of easy steps. Our mobile-first apps make working on the move feasible, even while offline. eSign contracts from anywhere in the world and close deals faster.

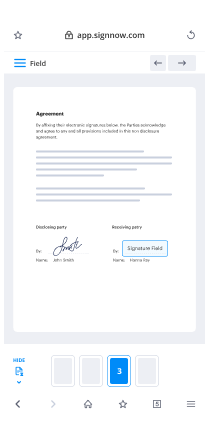

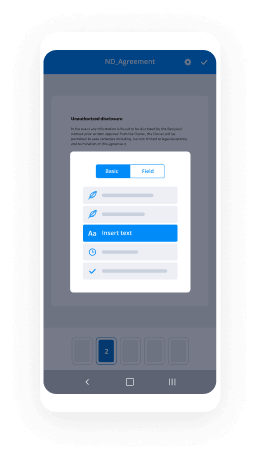

Follow this walk-through to test a validated field:

- Log on to your airSlate SignNow profile.

- Find your document in your folders or import a new one.

- Open the record and edit content using the Tools menu.

- Place fillable fields, add textual content and eSign it.

- Add multiple signers by email and configure the signing sequence.

- Indicate which recipients will receive an executed version.

- Use Advanced Options to restrict access to the template and add an expiration date.

- Press Save and Close when done.

Furthermore, more advanced features are available when you test a validated field. Add users to your shared workspace, view teams, and monitor collaboration. Numerous customers across the US and Europe agree that a solution that brings everything together in a single holistic environment is what businesses need to keep workflows running smoothly. The airSlate SignNow REST API enables you to embed eSignatures into your application, website, CRM or cloud. Try out airSlate SignNow and enjoy faster, easier and overall more productive eSignature workflows!

How it works

airSlate SignNow features that users love

See exceptional results: test validated field with airSlate SignNow

Get legally-binding signatures now!

FAQs

What is field validation in testing?

Field Validation Table (FVT) is a test design technique that mainly helps validate the fields present in an application. ... Using this field validation table, a tester can add value to their work and contribute to the delivery of quality software by identifying even a small defect in any field of an application.

What is field level validation?

Field-level keyboard events (KeyDown, KeyPress, KeyUp) allow you to validate user input immediately. Form-level validation is the process of validating all fields on a form at once. It is usually called when the user is ready to proceed to another step.

What do you mean by validation control?

In ASP.NET, a validation control is used to implement page-level validity of data entered in the server controls. ... If the data does not pass validation, it will display an error message to the user. It is an important part of any web application.

What is validation in software testing with example?

Validation is the process of evaluating the final product to check whether the software meets the business needs. In simple words, the test execution we do day to day is the validation activity, which includes smoke testing, functional testing, regression testing, system testing, etc.

How do you validate a test?

Validation planning: plan all the activities that need to be included while testing. Define requirements: set goals and define the requirements for testing. Selecting a team: select a skilled and knowledgeable development team (third parties included).

What is a validated measurement tool?

When a test or measurement is "validated," it simply means that the researcher has come to the opinion that the instrument measures what it was designed to measure. ... Repeated use of the instrument is a strong indication that the instrument was designed to measure what it set out to measure.

How do you ensure validity of a test?

Make sure your goals and objectives are clearly defined and operationalized. ... Match your assessment measure to your goals and objectives. ... Get students involved; have the students look over the assessment for troublesome wording, or other difficulties.

What is product validation testing?

Product Validation Testing. ... This validates that using the production equipment, tooling, and material provides a product that meets your design specification and durability targets.

How do you test for reliability?

Examples of appropriate tests include questionnaires and psychometric tests. It measures the stability of a test over time. A typical assessment would involve giving participants the same test on two separate occasions. If the same or similar results are obtained then external reliability is established.

What is verification and validation with example?

Verification uses methods like inspections, reviews, walkthroughs, and desk-checking. Validation uses methods like black box (functional) testing, gray box testing, and white box (structural) testing. Verification checks whether the software conforms to specifications.

How do you know if an experiment is reliable?

A measurement is reliable if you repeat it and get the same or a similar answer over and over again, and an experiment is reliable if it gives the same result when you repeat the entire experiment.

How do you test a text field?

If the text field should accept only numbers, try entering any other character. Submit with no input at all (the submit button should not accept it). Try entering more characters than the maximum allowed. Validate the text field with both lowercase and uppercase letters.
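The checks above can be sketched as a small validator plus a few assertions. This is only an illustration: the function name `validate_numeric_field`, the error labels, and the default limit of 10 characters are assumptions made for the example, not part of airSlate SignNow or any specific framework.

```python
from typing import List


def validate_numeric_field(value: str, max_length: int = 10) -> List[str]:
    """Return the validation errors for a numeric-only text field.

    Hypothetical helper: the error labels and the default max_length
    are assumptions chosen for this sketch.
    """
    errors = []
    if value == "":
        # Empty input must be rejected, not silently accepted.
        errors.append("empty")
    elif not (value.isascii() and value.isdigit()):
        # Anything other than the ASCII digits 0-9 is rejected.
        errors.append("non-numeric")
    if len(value) > max_length:
        # Input longer than the allowed maximum is rejected.
        errors.append("too long")
    return errors


# One check per case from the answer above.
assert validate_numeric_field("12345") == []
assert validate_numeric_field("12a45") == ["non-numeric"]
assert validate_numeric_field("") == ["empty"]
assert validate_numeric_field("1" * 11) == ["too long"]
assert validate_numeric_field("abc") == validate_numeric_field("ABC")
```

Returning a list of errors rather than a single boolean lets one test assert on exactly which rule failed, which makes a failing field easier to diagnose.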

How do I check a box in Word?

Just position your cursor in the document where you want a check box, switch to the "Developer" tab, and then click the "Check Box Content Control" button. You should see a check box appear wherever you placed your cursor.

How do I spell check a text box in Word?

To spell check a text box, I must insert the cursor in the text box then hit spell check to perform this function. Guessing: Save it as a text file. Open in Word, see if you can then check those particular locations.

How do you write a test case if you forgot the password?

1. Check that selecting the forgot password link directs the user to the forgot password page.
2. Check that the page asks for an alternative email ID to send the link to.
3. Check that the link is sent to the email address the user provided.
4. Check that the link can be used only once.

What active users are saying — test validated field

Related searches to test validated field with airSlate SignNow

Test validated field

[Music] In this video, we'll be discussing the different data sets used during training and testing a neural network. For training and testing purposes for our model, we should have our data broken down into three distinct data sets. These data sets will consist of a training set, a validation set, and a test set.

Let's start by discussing the training set. The training set is what it sounds like: it's the set of data used to train the model. During each epoch, our model will be trained over and over again on this same data in our training set, and it will continue to learn about the features of this data. The hope with this is that later we can deploy our model and have it accurately predict on new data that it's never seen before. It'll be making these predictions based on what it learned about the training data.

Okay, now let's discuss the validation set. The validation set is a set of data, separate from the training set, that is used to validate our model during training. This validation process helps give information that may assist us with adjusting our hyperparameters. So recall how we just mentioned that with each epoch during training, the model will be trained on the data in the training set. Well, it will also be simultaneously validating on the data in the validation set.

Now, we know from our previous videos on training that during the training process the model will be classifying the output for each input in the training set. After this classification occurs, the loss will then be calculated and the weights in the model will be adjusted. Then, during the next epoch, it will classify the same input again. Now, also during training, the model will be classifying each input from the validation set as well. It will be doing this classification based only on what it's learned about the data it's being trained on in the training set. The weights won't be updated in the model based on the loss calculated from our validation data. Remember, the data in the validation set is separate from the data in the training set, so when it's validating on this data, this data does not consist of samples that the model is already familiar with from training.

One of the major reasons we need a validation set is to ensure that our model is not overfitting to the data in the training set. We'll discuss overfitting and underfitting in detail at a later time, but the idea of overfitting is that our model becomes really good at being able to classify the data in the training set, but it's unable to generalize and make accurate classifications on data that it wasn't trained on. So during training, if we're also validating the model on our validation set and see that the results it's giving for the validation set are just as good as the results it's giving for the training data, then we can be more confident that our model is not overfitting. On the other hand, if the results on the training data are really good but the results on the validation data are lagging behind, then our model is likely overfitting.

Now let's move on to the test set. The test set is a set of data that's used to test the model after the model has already been trained. This test set is separate from both the training set and validation set. After our model has been trained and validated using our training and validation sets, we'll then use our model to predict the output of the data in the test set.

Now, one major difference between the test set and the other two sets that we've discussed is that the test set should not be labeled. The training set and validation set have to be labeled so that we can see the metrics given during training, like the loss and the accuracy from each epoch. So when the model is predicting on unlabeled data in our test set, this would be the same type of process that would be used if we were to deploy our model into the field. For example, if in practice we're using a model to classify data without knowing what the labels of the data are beforehand, or having ever been shown the exact data it's going to be classifying, then of course we wouldn't be giving our model the labeled data to do this. The entire goal of having a model be able to classify is to do this without knowing what the data is beforehand.

Now, hopefully we have an idea about how our data should be organized. Let's take a look now at how to structure our data for training our model with Keras. In a previous video, we trained this model shown here using the Keras fit function. Now I'm just going to copy this fit function down to a clean space in our notebook. Whenever we fit the model, I said in a previous video that we are passing the train samples and train labels as the first two parameters to the fit function. Now, these train samples are our entire training set, and Keras is expecting this training set to be in a format of either a NumPy array or a list of NumPy arrays. So I'm just going to print out this training set here so that you can see that it's simply a NumPy array right here. Now, the training labels: Keras is expecting those to be in the format of a NumPy array, so again we'll just print this out here as well.

I'm not going to focus on this underlying data since we're just illustrating the concept here. As mentioned when we used this data before, I'll pop up the video on the screen so that you can see in another video what this data actually is and how we preprocessed it for this training. Our training set and our training labels are the first two parameters passed to the fit function whenever we're training our model.

Now, what about our validation set? Well, you don't even have to explicitly make a validation set. You can pass in the parameter validation_split and give it a fraction that instructs Keras to split out this fraction of data from your training set and use it as your validation set. I just arbitrarily chose 20% here, so it's going to go into my training samples, extract 20% out from training, and use it only for validation. So now if we run this fit function, recall before that we only had the loss and accuracy metrics. Now, since we added a validation set, we also have the validation loss and validation accuracy metrics as well.

Now, one other thing to point out is that for our validation set, we're kind of implicitly creating this validation set here using the validation_split parameter, but we could also create an explicit validation set that is separate from the training set altogether and then pass that entire set into our model as well. To do that, we wouldn't be using this validation_split parameter. Instead, if we scroll down, we see we would be using this validation_data parameter and passing in the validation set explicitly here. Now, I don't actually have the validation set created, but this is the format that Keras would expect: it would expect a list of tuples, and each tuple would be the actual sample, the actual data point, and the label. So here I've just illustrated that within this list we have a sample and a label, and then another sample and another label, and that would make up the entire list. Okay, so those are our two options that we have to work with for our validation set with Keras.

Now, the test set would be structured just like the training set is, and we'd be using the test set when we call the predict function on our model. Since we haven't yet covered the concept of predicting, I'll save showing how to do that with Keras until we cover the concept in another video.

So hopefully now you have an idea about what the different data sets are for training and testing a neural network and how you can make use of them in Keras. I hope you found this video helpful. If you did, please like the video, subscribe, suggest, and comment, and thanks for watching. [Music]
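The two validation options described in the transcript can be sketched without Keras itself. The code below is a plain-Python illustration, not Keras code: `split_off_validation` and `looks_overfit` are hypothetical helpers, and the 0.05 tolerance is an arbitrary assumption. The first function mimics what the Keras documentation describes for `validation_split`, which holds out the last fraction of the samples before any shuffling. Note that the current Keras documentation describes `validation_data` as a tuple of arrays `(x_val, y_val)`, which is the shape the helper returns for the held-out part.

```python
def split_off_validation(samples, labels, validation_split):
    """Hold out the LAST fraction of the data as a validation set.

    Hypothetical helper mimicking the documented behavior of the Keras
    `validation_split` argument; it is not part of Keras.
    """
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    if not 0.0 < validation_split < 1.0:
        raise ValueError("validation_split must be between 0 and 1")
    # Index where the training part ends and the validation part starts.
    split_at = int(len(samples) * (1.0 - validation_split))
    train = (samples[:split_at], labels[:split_at])
    validation = (samples[split_at:], labels[split_at:])
    return train, validation


def looks_overfit(train_accuracy, val_accuracy, tolerance=0.05):
    """Flag a validation accuracy that lags training accuracy.

    The tolerance is an arbitrary assumption for this sketch.
    """
    return (train_accuracy - val_accuracy) > tolerance


samples = list(range(10))
labels = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
(train_x, train_y), (val_x, val_y) = split_off_validation(samples, labels, 0.2)
print(len(train_x), len(val_x))
print(looks_overfit(0.99, 0.80))
```

Because the held-out part comes from the end of the data, an ordered data set (for example, one sorted by label) should be shuffled before splitting, otherwise the validation set may not resemble the training set.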
